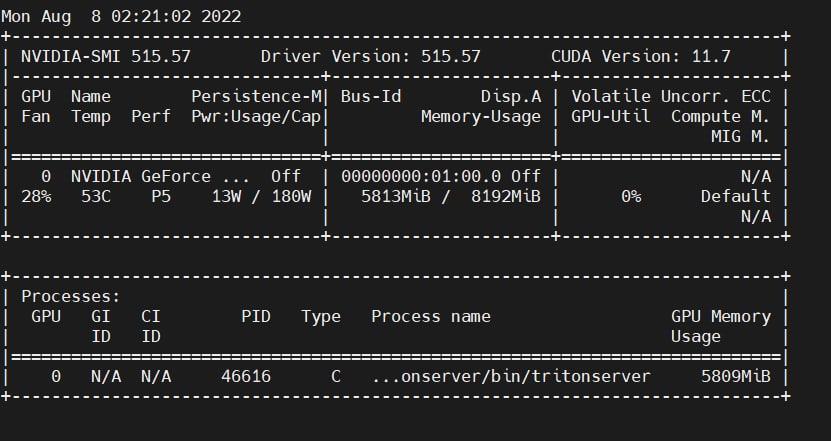

Hi, I am running tritonserver and the Flask proxy in the same container, and the performance is poor.

Every request takes 4 seconds or more, which is not acceptable. I am using a V100 GPU, which I think should be fast enough. I hope someone can help me figure out the reason.
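To narrow down where the 4 seconds go, one option is to time the same request against the Flask proxy and against Triton directly. The helper below is only a minimal sketch: `measure_latency` and the `send_request` callable are hypothetical names, not part of the proxy or of Triton, and the placeholder workload has to be replaced with a real call.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(send_request: Callable[[], object], runs: int = 10) -> Dict[str, float]:
    """Call send_request() `runs` times and summarize wall-clock latency in seconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        send_request()  # hypothetical: one full request/response round trip
        samples.append(time.perf_counter() - start)
    return {
        "min": min(samples),
        "median": statistics.median(samples),
        "max": max(samples),
    }


if __name__ == "__main__":
    # Placeholder workload; replace with a real call to the proxy or to Triton.
    print(measure_latency(lambda: time.sleep(0.01), runs=5))
```

If the numbers for the proxy and for Triton are close, the time is probably spent in the model backend rather than in the proxy layer.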

I0910 08:43:31.338765 258862 pinned_memory_manager.cc:240] Pinned memory pool is created at '0x7f5498000000' with size 268435456

I0910 08:43:31.339558 258862 cuda_memory_manager.cc:105] CUDA memory pool is created on device 0 with size 67108864

I0910 08:43:31.346725 258862 model_repository_manager.cc:1191] loading: fastertransformer:1

I0910 08:43:31.567535 258862 libfastertransformer.cc:1226] TRITONBACKEND_Initialize: fastertransformer

I0910 08:43:31.567573 258862 libfastertransformer.cc:1236] Triton TRITONBACKEND API version: 1.10

I0910 08:43:31.567581 258862 libfastertransformer.cc:1242] 'fastertransformer' TRITONBACKEND API version: 1.10

I0910 08:43:31.567638 258862 libfastertransformer.cc:1274] TRITONBACKEND_ModelInitialize: fastertransformer (version 1)

W0910 08:43:31.569452 258862 libfastertransformer.cc:149] model configuration:

{

"name": "fastertransformer",

"platform": "",

"backend": "fastertransformer",

"version_policy": {

"latest": {

"num_versions": 1

}

},

"max_batch_size": 1024,

"input": [

{

"name": "input_ids",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

-1

],

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": false

},

{

"name": "start_id",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "end_id",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "input_lengths",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": false

},

{

"name": "request_output_len",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

-1

],

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": false

},

{

"name": "runtime_top_k",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "runtime_top_p",

"data_type": "TYPE_FP32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "beam_search_diversity_rate",

"data_type": "TYPE_FP32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "temperature",

"data_type": "TYPE_FP32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "len_penalty",

"data_type": "TYPE_FP32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "repetition_penalty",

"data_type": "TYPE_FP32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "random_seed",

"data_type": "TYPE_INT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "is_return_log_probs",

"data_type": "TYPE_BOOL",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "beam_width",

"data_type": "TYPE_UINT32",

"format": "FORMAT_NONE",

"dims": [

1

],

"reshape": {

"shape": []

},

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "bad_words_list",

"data_type": "TYPE_INT32",

"format": "FORMAT_NONE",

"dims": [

2,

-1

],

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

},

{

"name": "stop_words_list",

"data_type": "TYPE_INT32",

"format": "FORMAT_NONE",

"dims": [

2,

-1

],

"is_shape_tensor": false,

"allow_ragged_batch": false,

"optional": true

}

],

"output": [

{

"name": "output_ids",

"data_type": "TYPE_UINT32",

"dims": [

-1,

-1

],

"label_filename": "",

"is_shape_tensor": false

},

{

"name": "sequence_length",

"data_type": "TYPE_UINT32",

"dims": [

-1

],

"label_filename": "",

"is_shape_tensor": false

},

{

"name": "cum_log_probs",

"data_type": "TYPE_FP32",

"dims": [

-1

],

"label_filename": "",

"is_shape_tensor": false

},

{

"name": "output_log_probs",

"data_type": "TYPE_FP32",

"dims": [

-1,

-1

],

"label_filename": "",

"is_shape_tensor": false

}

],

"batch_input": [],

"batch_output": [],

"optimization": {

"priority": "PRIORITY_DEFAULT",

"input_pinned_memory": {

"enable": true

},

"output_pinned_memory": {

"enable": true

},

"gather_kernel_buffer_threshold": 0,

"eager_batching": false

},

"instance_group": [

{

"name": "fastertransformer_0",

"kind": "KIND_CPU",

"count": 1,

"gpus": [],

"secondary_devices": [],

"profile": [],

"passive": false,

"host_policy": ""

}

],

"default_model_filename": "codegen-350M-multi",

"cc_model_filenames": {},

"metric_tags": {},

"parameters": {

"start_id": {

"string_value": "50256"

},

"model_name": {

"string_value": "codegen-350M-multi"

},

"is_half": {

"string_value": "1"

},

"enable_custom_all_reduce": {

"string_value": "0"

},

"vocab_size": {

"string_value": "51200"

},

"tensor_para_size": {

"string_value": "1"

},

"decoder_layers": {

"string_value": "20"

},

"size_per_head": {

"string_value": "64"

},

"max_seq_len": {

"string_value": "2048"

},

"end_id": {

"string_value": "50256"

},

"inter_size": {

"string_value": "4096"

},

"head_num": {

"string_value": "16"

},

"model_type": {

"string_value": "GPT-J"

},

"model_checkpoint_path": {

"string_value": "/model/fastertransformer/1/1-gpu"

},

"rotary_embedding": {

"string_value": "32"

},

"pipeline_para_size": {

"string_value": "1"

}

},

"model_warmup": []

}

I0910 08:43:31.569890 258862 libfastertransformer.cc:1320] TRITONBACKEND_ModelInstanceInitialize: fastertransformer_0 (device 0)

W0910 08:43:31.569915 258862 libfastertransformer.cc:453] Faster transformer model instance is created at GPU '0'

W0910 08:43:31.569922 258862 libfastertransformer.cc:459] Model name codegen-350M-multi

W0910 08:43:31.569940 258862 libfastertransformer.cc:578] Get input name: input_ids, type: TYPE_UINT32, shape: [-1]

W0910 08:43:31.569948 258862 libfastertransformer.cc:578] Get input name: start_id, type: TYPE_UINT32, shape: [1]

W0910 08:43:31.569954 258862 libfastertransformer.cc:578] Get input name: end_id, type: TYPE_UINT32, shape: [1]

W0910 08:43:31.569960 258862 libfastertransformer.cc:578] Get input name: input_lengths, type: TYPE_UINT32, shape: [1]

W0910 08:43:31.569966 258862 libfastertransformer.cc:578] Get input name: request_output_len, type: TYPE_UINT32, shape: [-1]

W0910 08:43:31.569972 258862 libfastertransformer.cc:578] Get input name: runtime_top_k, type: TYPE_UINT32, shape: [1]

W0910 08:43:31.569978 258862 libfastertransformer.cc:578] Get input name: runtime_top_p, type: TYPE_FP32, shape: [1]

W0910 08:43:31.569984 258862 libfastertransformer.cc:578] Get input name: beam_search_diversity_rate, type: TYPE_FP32, shape: [1]

W0910 08:43:31.569990 258862 libfastertransformer.cc:578] Get input name: temperature, type: TYPE_FP32, shape: [1]

W0910 08:43:31.569995 258862 libfastertransformer.cc:578] Get input name: len_penalty, type: TYPE_FP32, shape: [1]

W0910 08:43:31.570001 258862 libfastertransformer.cc:578] Get input name: repetition_penalty, type: TYPE_FP32, shape: [1]

W0910 08:43:31.570006 258862 libfastertransformer.cc:578] Get input name: random_seed, type: TYPE_INT32, shape: [1]

W0910 08:43:31.570012 258862 libfastertransformer.cc:578] Get input name: is_return_log_probs, type: TYPE_BOOL, shape: [1]

W0910 08:43:31.570018 258862 libfastertransformer.cc:578] Get input name: beam_width, type: TYPE_UINT32, shape: [1]

W0910 08:43:31.570024 258862 libfastertransformer.cc:578] Get input name: bad_words_list, type: TYPE_INT32, shape: [2, -1]

W0910 08:43:31.570034 258862 libfastertransformer.cc:578] Get input name: stop_words_list, type: TYPE_INT32, shape: [2, -1]

W0910 08:43:31.570046 258862 libfastertransformer.cc:620] Get output name: output_ids, type: TYPE_UINT32, shape: [-1, -1]

W0910 08:43:31.570053 258862 libfastertransformer.cc:620] Get output name: sequence_length, type: TYPE_UINT32, shape: [-1]

W0910 08:43:31.570059 258862 libfastertransformer.cc:620] Get output name: cum_log_probs, type: TYPE_FP32, shape: [-1]

W0910 08:43:31.570065 258862 libfastertransformer.cc:620] Get output name: output_log_probs, type: TYPE_FP32, shape: [-1, -1]

[FT][WARNING] Custom All Reduce only supports 8 Ranks currently. Using NCCL as Comm.

I0910 08:43:31.853587 258862 libfastertransformer.cc:307] Before Loading Model:

after allocation, free 31.19 GB total 31.75 GB

[WARNING] gemm_config.in is not found; using default GEMM algo

I0910 08:43:34.250280 258862 libfastertransformer.cc:321] After Loading Model:

after allocation, free 30.33 GB total 31.75 GB

I0910 08:43:34.251457 258862 libfastertransformer.cc:537] Model instance is created on GPU Tesla V100-PCIE-32GB

I0910 08:43:34.252189 258862 model_repository_manager.cc:1345] successfully loaded 'fastertransformer' version 1

I0910 08:43:34.252418 258862 server.cc:556]

+------------------+------+

| Repository Agent | Path |

+------------------+------+

+------------------+------+

I0910 08:43:34.252533 258862 server.cc:583]

+-------------------+--------------------------------------------+--------------------------------------------+

| Backend | Path | Config |

+-------------------+--------------------------------------------+--------------------------------------------+

| fastertransformer | /opt/tritonserver/backends/fastertransform | {"cmdline":{"auto-complete-config":"false" |

| | er/libtriton_fastertransformer.so | ,"min-compute-capability":"6.000000","back |

| | | end-directory":"/opt/tritonserver/backends |

| | | ","default-max-batch-size":"4"}} |

| | | |

+-------------------+--------------------------------------------+--------------------------------------------+

I0910 08:43:34.252618 258862 server.cc:626]

+-------------------+---------+--------+

| Model | Version | Status |

+-------------------+---------+--------+

| fastertransformer | 1 | READY |

+-------------------+---------+--------+

I0910 08:43:34.298144 258862 metrics.cc:650] Collecting metrics for GPU 0: Tesla V100-PCIE-32GB

I0910 08:43:34.298580 258862 tritonserver.cc:2159]

+----------------------------------+----------------------------------------------------------------------------+

| Option | Value |

+----------------------------------+----------------------------------------------------------------------------+

| server_id | triton |

| server_version | 2.23.0 |

| server_extensions | classification sequence model_repository model_repository(unload_dependent |

| | s) schedule_policy model_configuration system_shared_memory cuda_shared_me |

| | mory binary_tensor_data statistics trace |

| model_repository_path[0] | /openbayes/home/fauxpilot/models/codegen-350M-multi-1gpu |

| model_control_mode | MODE_NONE |

| strict_model_config | 1 |

| rate_limit | OFF |

| pinned_memory_pool_byte_size | 268435456 |

| cuda_memory_pool_byte_size{0} | 67108864 |

| response_cache_byte_size | 0 |

| min_supported_compute_capability | 6.0 |

| strict_readiness | 1 |

| exit_timeout | 30 |

+----------------------------------+----------------------------------------------------------------------------+

I0910 08:43:34.329038 258862 grpc_server.cc:4587] Started GRPCInferenceService at 0.0.0.0:8001

I0910 08:43:34.329462 258862 http_server.cc:3303] Started HTTPService at 0.0.0.0:8000

I0910 08:43:34.370706 258862 http_server.cc:178] Started Metrics Service at 0.0.0.0:8002
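For reference, the timestamps in the log above put model loading at about 2.9 seconds, and that happens once at startup, so it should not explain the per-request delay. The sketch below computes the gap between two glog-style lines copied from the log. `glog_time` and `seconds_between` are hypothetical helper names, and the code assumes both lines fall on the same day.

```python
import re
from datetime import datetime

# glog prefix: severity letter, MMDD, then HH:MM:SS.microseconds
GLOG_TIME = re.compile(r"^[IWEF]\d{4} (\d{2}:\d{2}:\d{2}\.\d{6}) ")


def glog_time(line: str) -> datetime:
    """Extract the time of day from a glog-formatted log line."""
    match = GLOG_TIME.match(line)
    if match is None:
        raise ValueError(f"not a glog line: {line!r}")
    return datetime.strptime(match.group(1), "%H:%M:%S.%f")


def seconds_between(first: str, second: str) -> float:
    """Elapsed seconds between two glog lines (assumes the same day)."""
    return (glog_time(second) - glog_time(first)).total_seconds()


# Both lines are copied from the startup log above.
LOAD_START = (
    "I0910 08:43:31.346725 258862 model_repository_manager.cc:1191] "
    "loading: fastertransformer:1"
)
LOAD_DONE = (
    "I0910 08:43:34.252189 258862 model_repository_manager.cc:1345] "
    "successfully loaded 'fastertransformer' version 1"
)

if __name__ == "__main__":
    print(f"model load took {seconds_between(LOAD_START, LOAD_DONE):.3f} s")
```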