rayguan97 / ganav-offroad

This is the code base for GANav: Group-wise Attention Network for Classifying Navigable Regions in Unstructured Outdoor Environments.

License: Apache License 2.0

The files test_ours.txt, train_ours.txt and val_ours.txt are required by rugd_relabel4.py and rugd_relabel6.py. However, they are not part of the RUGD Dataset, which can be downloaded from http://rugd.vision/. How did you obtain these three files?

I can find the "/convert_datasets" directory, but where can I find the six files listed below? From the official RUGD Dataset website I can only download the directories "/RUGD_annotations" and "/RUGD_frames-with-annotations":

│ │ │── test_ours.txt

│ │ │── test.txt

│ │ │── train_ours.txt

│ │ │── train.txt

│ │ │── val_ours.txt

│ │ │── val.txt

Do I need to create them myself, or could you please release them?
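If the split files have to be created by hand, a minimal sketch could look like the following. It assumes each file holds one sample id per line; the function names, split ratios and seed are hypothetical, and a split made this way will not match the authors' split, so reported numbers may differ.

```python
import random
from pathlib import Path


def make_splits(sample_ids, val_ratio=0.1, test_ratio=0.1, seed=0):
    """Shuffle sample ids deterministically and cut them into three lists."""
    ids = sorted(sample_ids)
    random.Random(seed).shuffle(ids)
    n_test = int(len(ids) * test_ratio)
    n_val = int(len(ids) * val_ratio)
    return {
        "test": ids[:n_test],
        "val": ids[n_test:n_test + n_val],
        "train": ids[n_test + n_val:],
    }


def write_splits(splits, out_dir):
    """Write <split>.txt files, one sample id per line (assumed format)."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    for name, ids in splits.items():
        (out_dir / f"{name}.txt").write_text("\n".join(ids) + "\n")


splits = make_splits([f"img_{i:03d}" for i in range(20)])
```

Calling `write_splits(splits, "some/output/dir")` would then produce train.txt, val.txt and test.txt in that directory.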

Dear Author, is GANav suitable for RGB-D campus datasets? If we want to apply GANav-offroad to our own Kinect RGB-D campus dataset, what do we need to do?

Could you please share the pre-trained checkpoint file (ganav_rugd.pth) for the rugd6 model?

Hello. When I test my own model, trained on my own dataset, I get the following error: "test.py: error: the following arguments are required: checkpoint". What can I do about that? Thanks!
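The error message indicates that the script expects the checkpoint path as a positional argument after the config path. The sketch below reproduces that behaviour with a stand-alone parser; the parser layout is an assumption inferred from the error text, not a copy of tools/test.py, and the two paths are only examples taken from elsewhere on this page.

```python
import argparse


def build_parser():
    """Stand-in parser with two required positional arguments."""
    parser = argparse.ArgumentParser(prog="test.py")
    parser.add_argument("config", help="test config file path")
    parser.add_argument("checkpoint", help="checkpoint file (.pth)")
    return parser


# Passing only the config would trigger the "arguments are required" error;
# passing both positionals parses cleanly.
args = build_parser().parse_args(
    ["./configs/ours/ganav_group6_rugd.py", "./trained_models/unreal/iter_20000.pth"]
)
print(args.config, args.checkpoint)
```

In other words, the command line needs the config file first and the .pth file second.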

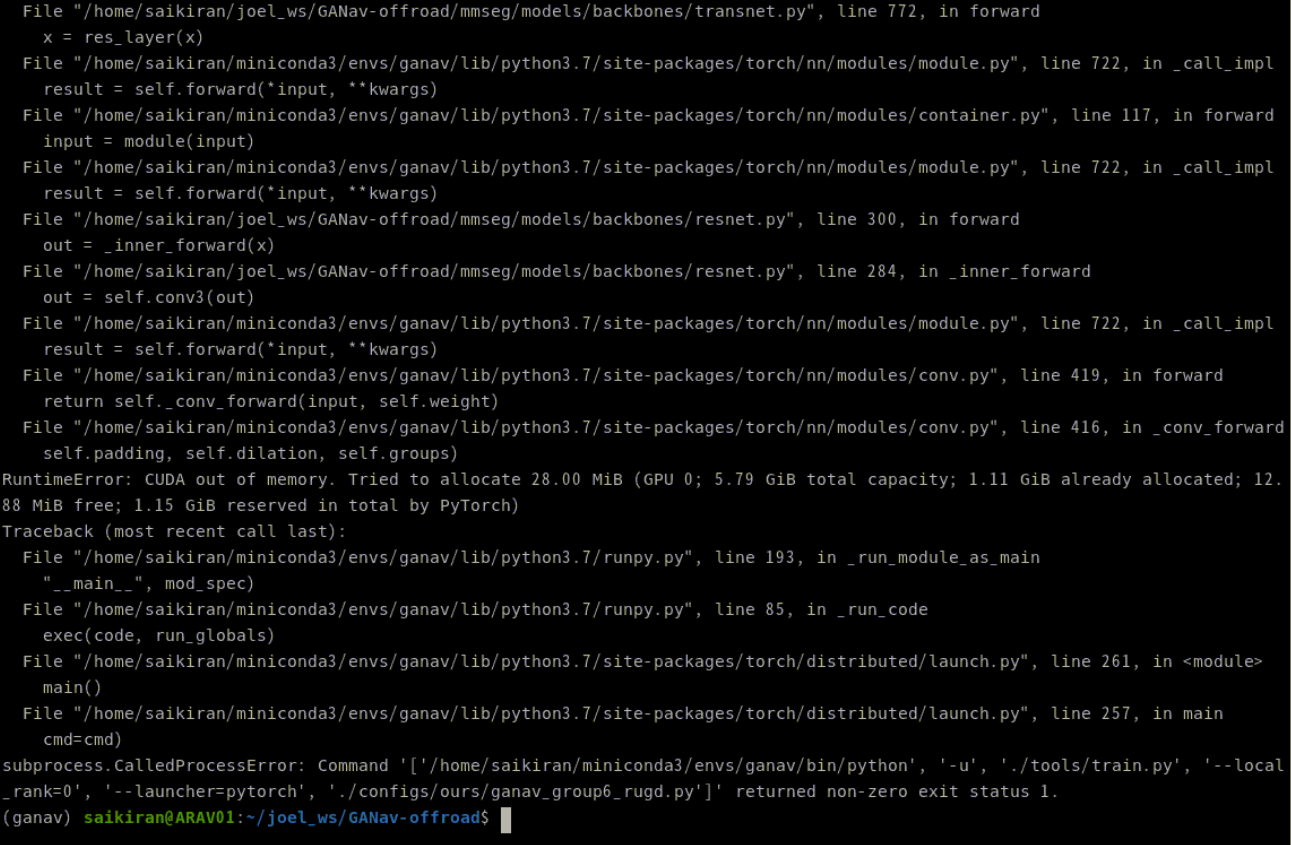

Hello Sir. Firstly, thanks for your contribution through this work.

I would like to enquire about the GPU requirements for training the GANav model, especially on a single GPU. I am attempting to train the model on a single GPU (Nvidia RTX 2060), but I get the error: RuntimeError: CUDA out of memory.

To be more specific, I am running the following command after setting up the GANav environment and processing the dataset as per the readme instructions:

python -m torch.distributed.launch ./tools/train.py --launcher='pytorch' ./configs/ours/ganav_group6_rugd.py

and I am facing the error below:

PC specs and package versions & configuration used in environment for GANav:

GPU: Nvidia RTX 2060

CPU: AMD Ryzen 7 3700X

Python Version: 3.7.13

Pytorch version: 1.6.0

cudatoolkit version:10.1

mmcv-full version: 1.6.0

Dataset used: RUGD Dataset

No. of Annotation Groups: 6

Also, could you suggest some workarounds for memory management when training the model on a single GPU?

Thanks.
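One common workaround for out-of-memory errors in MMSegmentation-style configs is to lower the per-GPU batch size. The fragment below is a sketch of such overrides; the values are illustrative assumptions, and whether the GANav configs expose exactly these keys in this form should be checked against the config file in use.

```python
# Hypothetical overrides for a single low-memory GPU; values are illustrative.
data = dict(
    samples_per_gpu=1,  # fewer images per step lowers peak activation memory
    workers_per_gpu=2,  # data-loading workers; does not affect GPU memory
)

# Plain BN instead of SyncBN when training without distributed launch
# (assumption: the config defines norm_cfg in the usual MMSegmentation way).
norm_cfg = dict(type="BN", requires_grad=True)
```

A smaller batch usually changes the training dynamics, so metrics may not match those obtained with the original batch size.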

Hi, Thanks for this great work!

May I ask which ROS version you used to test the algorithm?

I'm currently using Ubuntu 16.04 with ROS Kinetic, and I am unsure whether to run the code in a conda environment with Python 3.7 or to use the Python 2.7 that comes with ROS.

Hi,

Thanks for your work! I encountered an issue when running the command to train a model with your methods:

python ./tools/train.py ./configs/ours/ganav_group6_rugd.py

python ./tools/train.py ./configs/ours/ganav_group6_rellis.py

Both of the commands prompt this error:

I am still new to this topic, could you kindly let me know how to solve this problem?

Hi, thanks for this great work!

I hit an AssertionError when trying to test my model:

2022-07-28 10:42:54,233 - mmseg - INFO - OpenCV num_threads is `<built-in function getNumThreads>

2022-07-28 10:42:54,233 - mmseg - INFO - Loaded 791 images

load checkpoint from local path: ./trained_models/unreal/iter_20000.pth

./tools/test.py:252: UserWarning: SyncBN is only supported with DDP. To be compatible with DP, we convert SyncBN to BN. Please use dist_train.sh which can avoid this error.

'SyncBN is only supported with DDP. To be compatible with DP, '

[ ] 0/791, elapsed: 0s, ETA:Traceback (most recent call last):

File "./tools/test.py", line 306, in <module>

main()

File "./tools/test.py", line 269, in main

format_args=eval_kwargs)

File "c:\ganav-offroad-new\mmseg\apis\test.py", line 91, in single_gpu_test

result = model(return_loss=False, **data)

File "C:\Anaconda\envs\ganav\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl

return forward_call(*input, **kwargs)

File "C:\Anaconda\envs\ganav\lib\site-packages\mmcv\parallel\data_parallel.py", line 50, in forward

return super().forward(*inputs, **kwargs)

File "C:\Anaconda\envs\ganav\lib\site-packages\torch\nn\parallel\data_parallel.py", line 166, in forward

return self.module(*inputs[0], **kwargs[0])

File "C:\Anaconda\envs\ganav\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl

return forward_call(*input, **kwargs)

File "C:\Anaconda\envs\ganav\lib\site-packages\mmcv\runner\fp16_utils.py", line 110, in new_func

return old_func(*args, **kwargs)

File "c:\ganav-offroad-new\mmseg\models\segmentors\base.py", line 110, in forward

return self.forward_test(img, img_metas, **kwargs)

File "c:\ganav-offroad-new\mmseg\models\segmentors\base.py", line 92, in forward_test

return self.simple_test(imgs[0], img_metas[0], **kwargs)

File "c:\ganav-offroad-new\mmseg\models\segmentors\encoder_decoder.py", line 264, in simple_test

seg_logit = self.inference(img, img_meta, rescale)

File "c:\ganav-offroad-new\mmseg\models\segmentors\encoder_decoder.py", line 247, in inference

seg_logit = self.whole_inference(img, img_meta, rescale)

File "c:\ganav-offroad-new\mmseg\models\segmentors\encoder_decoder.py", line 209, in whole_inference

seg_logit = self.encode_decode(img, img_meta)

File "c:\ganav-offroad-new\mmseg\models\segmentors\encoder_decoder.py", line 75, in encode_decode

out = self._decode_head_forward_test(x, img_metas)

File "c:\ganav-offroad-new\mmseg\models\segmentors\encoder_decoder.py", line 101, in _decode_head_forward_test

seg_logits= self.decode_head.forward_test(x, img_metas, self.test_cfg)

File "c:\ganav-offroad-new\mmseg\models\decode_heads\ours_head_class_attn.py", line 194, in forward_test

out, maps = self.forward(inputs)

File "c:\ganav-offroad-new\mmseg\models\decode_heads\ours_head_class_attn.py", line 138, in forward

out, attn = self.attn(x)

File "C:\Anaconda\envs\ganav\lib\site-packages\torch\nn\modules\module.py", line 1102, in _call_impl

return forward_call(*input, **kwargs)

File "c:\ganav-offroad-new\mmseg\models\backbones\transnet.py", line 179, in forward

assert h==self.h

AssertionError

I'm using the new code updated on 25 July.

I think the error is the same as in the earlier issue #2, so I added this line to test=dict() in the config file:

dict(type='Pad', size=(300, 375), pad_val=0, seg_pad_val=255),

The error then disappeared, but the result images are vertically compressed and have a black border at the bottom (compared with the original image):

I'm not sure whether this is caused by the padding, because when I ran 'visulize.py' from the previous version of the code, the tested image had no black border:
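If the border comes from the padding, cropping the prediction back to the pre-padding size should remove it. The sketch below illustrates the idea on plain nested lists; it assumes the padding is added at the bottom and right, and the helper names are hypothetical rather than part of the repository.

```python
def pad_to(mask, target_h, target_w, pad_val=255):
    """Pad a 2D list (rows of pixels) on the bottom and right."""
    h, w = len(mask), len(mask[0])
    if h > target_h or w > target_w:
        raise ValueError("input is larger than the target size")
    padded = [row + [pad_val] * (target_w - w) for row in mask]
    padded += [[pad_val] * target_w for _ in range(target_h - h)]
    return padded


def crop_to(mask, orig_h, orig_w):
    """Drop the padded rows and columns again."""
    return [row[:orig_w] for row in mask[:orig_h]]


original = [[1, 2], [3, 4]]
padded = pad_to(original, target_h=3, target_w=4)
restored = crop_to(padded, orig_h=2, orig_w=2)
```

The vertical compression may simply be the padded (taller) image being displayed at the original aspect ratio, which cropping would also undo.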

Even after installing mmcv-full version 1.3.16, the error persists.

I recreated the environment and tried changing the module versions, but the error still occurs. Can you suggest a fix?

(ganavsegmentation) C:\Users\HP\GANav-offroad>python ./tools/test.py ./trained_models/rugd_group6/ganav_rellis_6.py

C:\Users\HP\anaconda3\envs\ganavsegmentation\lib\site-packages\mmcv\cnn\bricks\transformer.py:28: UserWarning: Fail to import MultiScaleDeformableAttention from mmcv.ops.multi_scale_deform_attn, You should install mmcv-full if you need this module.

warnings.warn('Fail to import MultiScaleDeformableAttention from '

Traceback (most recent call last):

File "./tools/test.py", line 18, in <module>

from mmseg.apis import multi_gpu_test, single_gpu_test

File "c:\users\hp\ganav-offroad\mmseg\apis\__init__.py", line 2, in <module>

from .inference import inference_segmentor, init_segmentor, show_result_pyplot

File "c:\users\hp\ganav-offroad\mmseg\apis\inference.py", line 9, in <module>

from mmseg.models import build_segmentor

File "c:\users\hp\ganav-offroad\mmseg\models\__init__.py", line 5, in <module>

from .decode_heads import * # noqa: F401,F403

File "c:\users\hp\ganav-offroad\mmseg\models\decode_heads\__init__.py", line 1, in <module>

from .fcn_head import FCNHead

File "c:\users\hp\ganav-offroad\mmseg\models\decode_heads\fcn_head.py", line 7, in <module>

from .decode_head import BaseDecodeHead

File "c:\users\hp\ganav-offroad\mmseg\models\decode_heads\decode_head.py", line 11, in <module>

from ..losses import accuracy

File "c:\users\hp\ganav-offroad\mmseg\models\losses\__init__.py", line 6, in <module>

from .focal_loss import FocalLoss

File "c:\users\hp\ganav-offroad\mmseg\models\losses\focal_loss.py", line 6, in <module>

from mmcv.ops import sigmoid_focal_loss as sigmoid_focal_loss

File "C:\Users\HP\anaconda3\envs\ganavsegmentation\lib\site-packages\mmcv\ops\__init__.py", line 2, in <module>

from .assign_score_withk import assign_score_withk

File "C:\Users\HP\anaconda3\envs\ganavsegmentation\lib\site-packages\mmcv\ops\assign_score_withk.py", line 6, in <module>

'ext', ['assign_score_withk_forward', 'assign_score_withk_backward'])

File "C:\Users\HP\anaconda3\envs\ganavsegmentation\lib\site-packages\mmcv\utils\ext_loader.py", line 13, in load_ext

ext = importlib.import_module('mmcv.' + name)

File "C:\Users\HP\anaconda3\envs\ganavsegmentation\lib\importlib\__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

ModuleNotFoundError: No module named 'mmcv._ext'
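The final error says that the compiled extension module mmcv._ext cannot be found, which is consistent with the earlier warning recommending mmcv-full. A quick way to check an environment before running the tools is sketched below; the helper name is hypothetical, and the check only tells whether the module can be located, not whether it was built for the installed PyTorch and CUDA versions.

```python
import importlib.util


def has_submodule(package, submodule):
    """Return True if `package.submodule` can be located.

    Note: find_spec imports the parent package as a side effect.
    """
    try:
        return importlib.util.find_spec(f"{package}.{submodule}") is not None
    except ModuleNotFoundError:
        # The parent package itself is not installed.
        return False


if __name__ == "__main__":
    print("mmcv._ext available:", has_submodule("mmcv", "_ext"))
```

If this prints False inside the environment used for GANav, the installed mmcv build does not ship the compiled ops.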

Hi, do you know of any other similar campus datasets with lidar and camera, like your sensor setup? Will you open-source a campus dataset for ground UGVs? I find that there are too few datasets of this kind.

In my opinion, the structure of RELLIS-3D has changed a lot. Maybe you could have a look and update your code. Many thanks!

Hello, thanks for sharing your work and code!

I'd like to reproduce the 71.55% result (ResNet50 backbone) on the RUGD dataset, which is your baseline.

Should I then replace 'TransNet' with 'ResNetV1c' in line 7 of 'ours_att'?

Thanks!

Hi, thanks for your patience! I have a question about the pre-processing part.

I have a small dataset that is labeled with 6 classes (the same as in this project), but the processed images look like this:

With the original ground truth:

Here the background is white, which differs from the processed images of the RUGD dataset, so I'm wondering whether I did something wrong during processing.

Here is the code I changed in 'rugd_relabel6.py':

Hello,

Thanks for sharing your work and code!

When I tested the model and displayed its output, I got the following results. The result did not change when I tried different images, and I could not obtain the expected output even for images from the dataset you provided.

Models I have used: ganav_rugd.pth and ganav_rugd_6.pth

I used these two models as provided; I have not trained a model at this stage.

What would you recommend regarding these results?

I am Vansin, the technical operator of OpenMMLab. In September of last year, we announced the release of OpenMMLab 2.0 at the World Artificial Intelligence Conference in Shanghai. We invite you to upgrade your algorithm library to OpenMMLab 2.0 using MMEngine, which can be used for both research and commercial purposes. If you have any questions, please feel free to join us on the OpenMMLab Discord at https://discord.gg/amFNsyUBvm or add me on WeChat (van-sin) and I will invite you to the OpenMMLab WeChat group.

Here are the OpenMMLab 2.0 repos branches:

| | OpenMMLab 1.0 branch | OpenMMLab 2.0 branch |
|---|---|---|
| MMEngine | | 0.x |
| MMCV | 1.x | 2.x |
| MMDetection | 0.x, 1.x, 2.x | 3.x |
| MMAction2 | 0.x | 1.x |
| MMClassification | 0.x | 1.x |
| MMSegmentation | 0.x | 1.x |
| MMDetection3D | 0.x | 1.x |
| MMEditing | 0.x | 1.x |
| MMPose | 0.x | 1.x |
| MMDeploy | 0.x | 1.x |
| MMTracking | 0.x | 1.x |
| MMOCR | 0.x | 1.x |
| MMRazor | 0.x | 1.x |
| MMSelfSup | 0.x | 1.x |
| MMRotate | 1.x | 1.x |
| MMYOLO | | 0.x |

Attention: please create a new virtual environment for OpenMMLab 2.0.

Hello, do you have the ros-supported version?

Is it possible to use a backbone model that is not included in the repository? When I tried to add one, I was told that it is not in the registry. I also tried adding it to the registry, but it still does not work. What should I do?
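A "not in the registry" error usually means that the decorator which registers the class never ran, typically because the file defining the backbone was not imported. The toy registry below illustrates the mechanism; it is a simplified stand-in, not mmcv's actual Registry, and `MyBackbone` is a hypothetical name.

```python
class Registry:
    """Toy name-to-class registry (not mmcv's implementation)."""

    def __init__(self, name):
        self.name = name
        self._modules = {}

    def register_module(self):
        def decorator(cls):
            # Registration happens when the class definition is executed.
            self._modules[cls.__name__] = cls
            return cls
        return decorator

    def build(self, cfg):
        cfg = dict(cfg)
        type_name = cfg.pop("type")
        if type_name not in self._modules:
            raise KeyError(f"{type_name} is not in the {self.name} registry")
        return self._modules[type_name](**cfg)


BACKBONES = Registry("backbone")


@BACKBONES.register_module()
class MyBackbone:  # hypothetical backbone
    def __init__(self, depth=18):
        self.depth = depth


model = BACKBONES.build(dict(type="MyBackbone", depth=34))
```

In an MMSegmentation-style code base, the equivalent step is to make sure the new backbone module is imported by the package's `__init__.py`, so that its registration decorator executes before the config is built.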

Hi! Firstly, thank you for sharing.

I converted the labels of the RUGD and RELLIS datasets to the RUGD6 group (ID) format. Then I trained using the rugd6 group configs.

When I combine the two datasets, the scores I get are lower than those you published for the individual datasets. Have you tried this too? What could be the reasons?

I used the same training configuration as in the repo and did not change anything.

Regards,

Hi, thanks again for this great work!

May I ask how the checkpoint file (.pth) is generated? I'm training the model on my own dataset, and the training process went well. For testing, however, I'm unsure whether to use the pre-trained model you provided or to generate my own checkpoint file.

Thank you!
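A log quoted elsewhere on this page loads a file named iter_20000.pth, which suggests that training writes checkpoints named after the iteration count. Under that assumption, a small helper (hypothetical, not part of the repository) can pick the most recent checkpoint from a list of file names:

```python
import re


def latest_checkpoint(filenames):
    """Pick the iter_<N>.pth name with the largest N, or None if there is none."""
    best_name, best_iter = None, -1
    for name in filenames:
        match = re.fullmatch(r"iter_(\d+)\.pth", name)
        if match and int(match.group(1)) > best_iter:
            best_name, best_iter = name, int(match.group(1))
    return best_name


print(latest_checkpoint(["iter_4000.pth", "iter_20000.pth", "log.json"]))
```

For a model trained on a custom dataset, a checkpoint from that training run is the natural choice for testing, since the provided pre-trained weights were fitted to other data.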

I am trying to run pytorch2onnx.py using the pretrained model from https://drive.google.com/drive/folders/1Un-s7S3WjNTLjkhXPnOAK4RoUjL-pibk

Although test.py runs, pytorch2onnx.py reports a mismatch error between the model and the loaded state dict. Can you please help me resolve this issue? Am I using the proper config?

Hello, how can I visualize the results as in the video you provide?

How do I get a segmentation cost map from the segmentation results? I'm rather confused about this part. Could you provide the code for it?
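One simple way to turn a segmentation result into a cost map is a per-pixel lookup from predicted group id to a traversal cost. The sketch below shows only that lookup in image space; the cost values and the meaning attached to each group id are hypothetical, this is not the authors' method, and projecting the result onto the ground plane is left out.

```python
# Hypothetical traversal cost per predicted group id (0.0 = free, 1.0 = blocked).
GROUP_COST = {0: 1.0, 1: 0.0, 2: 0.3, 3: 0.6, 4: 1.0, 5: 1.0}


def segmentation_to_costmap(seg, group_cost=GROUP_COST, unknown_cost=1.0):
    """Map a 2D list of group ids to a 2D list of costs."""
    return [[group_cost.get(g, unknown_cost) for g in row] for row in seg]


costmap = segmentation_to_costmap([[1, 2], [5, 9]])
```

Ids that are missing from the table fall back to `unknown_cost`, so unexpected labels are treated as non-traversable.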

Hello Sir. I am opening this issue as a continuation of issue #12. Thank you once again for your suggestions and ideas in resolving the issues I faced when training the GANav model.

As per your suggestions in issue #12 for improving the evaluation metrics of the trained model, a GPU with more memory (Nvidia GeForce RTX 2080, 8 GB) was installed for training, and this greatly improved the performance of the trained model.

Initially, the samples_per_gpu parameter was set back to 4, but training then failed with a 'CUDA out of memory' error.

Only with samples_per_gpu=3 did the training phase start successfully.

The evaluation metrics from the first checkpoint showed promising results (as shown below), with values close to the test metrics of the trained model linked in the readme of this repository.

However, as the training phase progressed, the later checkpoints had slightly degraded performance metrics compared to the 1st checkpoint, and the metrics were fluctuating about a certain level, with no noticeable improvement throughout the training phase. The trained model performance at final checkpoint is given below:

A similar performance rating was observed as well in the testing phase (for the final trained model) compared to the final checkpoint results in the training phase:

From the above observations, the following doubts came to mind which I would like to ask:

1.) Is it natural for performance to degrade in the course of training? Is there any way to make the training phase more effective at improving the performance metrics?

2.) From our previous discussion, I believe that you trained the model on the same GPU that I am using now. Also, from the code in the repository, I presume that you used 4 samples per GPU (please correct me if I'm wrong). I was wondering why I am limited to 3 samples per GPU on the same GPU, given that the error relates solely to GPU memory capacity.

3.) Finally, I would like to ask about the configuration used to train the model linked in the readme of this repository, specifically whether SyncBN with distributed training (multiple GPUs) or BN with a single GPU was used. Since I am benchmarking my training results against the uploaded trained model, I am curious whether its better performance is due to distributed training or just to the larger number of samples per GPU.
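When comparing runs with different batch sizes, one factor to keep in mind is that the learning rate is often rescaled in proportion to the total batch size (the linear scaling heuristic). Whether the repository's configs were tuned this way, and which total batch size the authors used, is not stated here, so the reference values below are assumptions for illustration only.

```python
def scaled_lr(base_lr, base_batch, samples_per_gpu, num_gpus=1):
    """Linear scaling heuristic: lr grows in proportion to the total batch size."""
    total_batch = samples_per_gpu * num_gpus
    return base_lr * total_batch / base_batch


# Assumed reference: lr 0.01 at a total batch of 4 (illustrative values).
print(scaled_lr(0.01, base_batch=4, samples_per_gpu=3))
```

This is a rule of thumb rather than a guarantee; it only gives a starting point for re-tuning after a batch-size change.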

In the paper, RUGD 6 groups consist of 'Smooth Region', 'Rough Region', 'Bumpy Region', 'Forbidden Region', 'Obstacle', 'Background'.

When I run the test code, the output has shown as follows: Background, L1, L2, L3, non_Nav, obstacle.

Could you please clarify which output class corresponds to which group described in the paper?

Thanks for this source code! We recently open sourced first parts of the GOOSE dataset and I trained GANav on the same.

The qualitative results look quite promising but the numbers appear quite low compared to your results on RUGD and Rellis3D. Of course this is difficult to compare as no SOTA mIOU is established yet.

| aAcc | mIoU | mAcc |
|---|---|---|
| 57.27 | 36.64 | 51.84 |

| Class | IoU | Acc |
|---|---|---|
| background/obstacle | 71.94 | 73.34 |
| stable | 34.7 | 85.21 |
| granular | 15.96 | 23.93 |
| poor foothold | 21.94 | 32.33 |
| high resistance | 17.66 | 21.3 |
| none | 57.67 | 74.96 |

I took the 6-class approach, with the following categorization and otherwise default parameters:

# 0 background: sky

# 1 Stable: bikeway, pedestrian_crossing, road_marking, sidewalk, asphalt

# 2 Granular: cobble, leaves, moss, gravel, soil

# 3 Poor foothold: snow, low_grass

# 4 High resistance: high_grass, bush, debris, crops, water, tree_root

# 5 Obstacle: everything else
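The categorization above can be written as a lookup table with a default group for everything that is not listed. This is a sketch only: the variable and function names are hypothetical, and the class names are taken from the list above rather than checked against the GOOSE label definitions.

```python
GROUP_MEMBERS = {
    0: ["sky"],
    1: ["bikeway", "pedestrian_crossing", "road_marking", "sidewalk", "asphalt"],
    2: ["cobble", "leaves", "moss", "gravel", "soil"],
    3: ["snow", "low_grass"],
    4: ["high_grass", "bush", "debris", "crops", "water", "tree_root"],
}
OBSTACLE = 5  # "everything else"

# Invert the table: class name -> group id.
CLASS_TO_GROUP = {
    name: gid for gid, names in GROUP_MEMBERS.items() for name in names
}


def group_of(class_name):
    """Return the group id for a class name, defaulting to the obstacle group."""
    return CLASS_TO_GROUP.get(class_name, OBSTACLE)
```

Keeping the mapping in one table makes it easy to check that every source class lands in exactly one group before relabeling the dataset.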

Do you have any tips for fine-tuning the training? If of interest, I could also add a PR with the config for GOOSE.

Hey, the whole setup went well when I followed the steps for the RELLIS dataset.

I created the environment and ran the data processing step successfully. However, I get an error when running the training step for RELLIS.

Training step:

python ./tools/train.py ./configs/ours/ganav_group6_rellis.py

Are you familiar with the initialization of _get_default_group, and do you know how to resolve this?

Hi, nice work, but I ran into a problem.

When I use the default config file and train the network on RELLIS-3D, the loss becomes NaN during training.

Has anyone else had a similar problem?

The first few records of the training are shown below:

2022-07-26 11:14:00,126 - mmseg - INFO - Iter [50/160000] lr: 9.997e-03, eta: 11:13:45, time: 0.253, data_time: 0.010, memory: 17373, decode.loss_seg: 10.1304, decode.acc_seg: 21.6483, aux.loss_seg: 0.6783, aux.acc_seg: 21.7035, loss: 10.8088

2022-07-26 11:14:07,372 - mmseg - INFO - Iter [100/160000] lr: 9.994e-03, eta: 8:49:53, time: 0.145, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 23.8205, aux.loss_seg: nan, aux.acc_seg: 31.7386, loss: nan

2022-07-26 11:14:14,590 - mmseg - INFO - Iter [150/160000] lr: 9.992e-03, eta: 8:01:19, time: 0.144, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 24.1812, aux.loss_seg: nan, aux.acc_seg: 24.1812, loss: nan

2022-07-26 11:14:21,808 - mmseg - INFO - Iter [200/160000] lr: 9.989e-03, eta: 7:37:00, time: 0.144, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 28.6286, aux.loss_seg: nan, aux.acc_seg: 28.6286, loss: nan

2022-07-26 11:14:29,039 - mmseg - INFO - Iter [250/160000] lr: 9.986e-03, eta: 7:22:29, time: 0.145, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 24.6320, aux.loss_seg: nan, aux.acc_seg: 24.6320, loss: nan

2022-07-26 11:14:36,279 - mmseg - INFO - Iter [300/160000] lr: 9.983e-03, eta: 7:12:52, time: 0.145, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 22.7228, aux.loss_seg: nan, aux.acc_seg: 22.7228, loss: nan

2022-07-26 11:14:43,525 - mmseg - INFO - Iter [350/160000] lr: 9.981e-03, eta: 7:05:59, time: 0.145, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 24.7156, aux.loss_seg: nan, aux.acc_seg: 24.7156, loss: nan

2022-07-26 11:14:50,798 - mmseg - INFO - Iter [400/160000] lr: 9.978e-03, eta: 7:00:59, time: 0.145, data_time: 0.001, memory: 17373, decode.loss_seg: nan, decode.acc_seg: 25.7715, aux.loss_seg: nan, aux.acc_seg: 25.7715, loss: nan
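To locate where training diverges, the log can be scanned for the first iteration whose total loss is NaN. The sketch below assumes the log line layout shown above; the function name and regular expressions are hypothetical helpers, not part of the repository.

```python
import math
import re

ITER_RE = re.compile(r"Iter \[(\d+)/\d+\]")
# Matches the total "loss: <value>" field, not "decode.loss_seg" or "aux.loss_seg".
LOSS_RE = re.compile(r"\bloss: ([-+.\w]+)")


def first_nan_iteration(log_lines):
    """Return the first iteration whose total loss is NaN, or None."""
    for line in log_lines:
        it = ITER_RE.search(line)
        loss = LOSS_RE.search(line)
        if it and loss and math.isnan(float(loss.group(1))):
            return int(it.group(1))
    return None


sample = [
    "2022-07-26 11:14:00,126 - mmseg - INFO - Iter [50/160000] lr: 9.997e-03, decode.loss_seg: 10.1304, aux.loss_seg: 0.6783, loss: 10.8088",
    "2022-07-26 11:14:07,372 - mmseg - INFO - Iter [100/160000] lr: 9.994e-03, decode.loss_seg: nan, aux.loss_seg: nan, loss: nan",
]
print(first_nan_iteration(sample))
```

Knowing the first affected iteration makes it easier to check whether a change such as a lower learning rate or gradient clipping moves or removes the divergence.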

Hey, thanks for sharing the work!

I was wondering about the expected usage of, and instructions for, this code.

It seems to work, but the results are not that satisfying.

So I was wondering,

This is the experimental image: I ran the RUGD6 model on camera data that I acquired myself.

FYI, I am planning to use this for terrain segmentation for a buggy car.

My hardware setup is not identical to yours: my camera is parallel to the ground, 30 cm above it, and not inclined toward the ground.

Thanks in advance!
