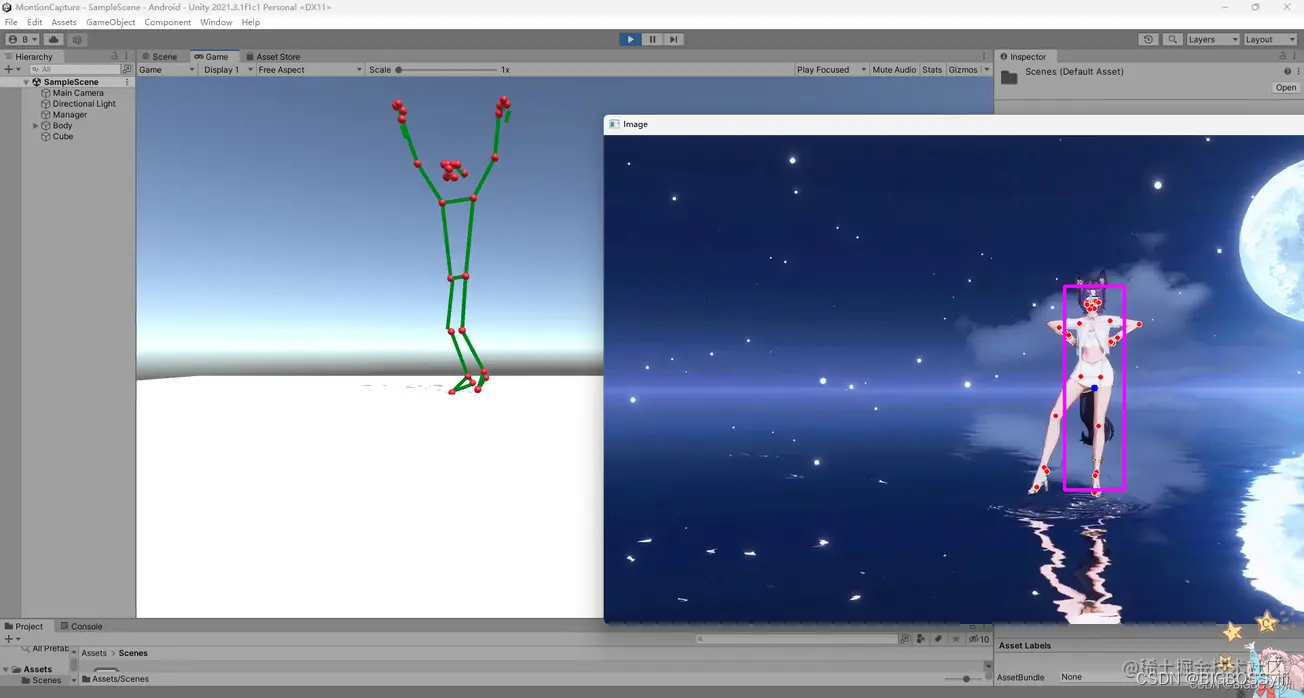

This article shows how to capture images with Python and OpenCV and use the MediaPipe library to perform **human motion detection** and recognition. The recognition results are synchronized to **Unity** in real time, so that a character model in Unity reproduces the body movement detected in the video.

Demo: https://hackathon2022.juejin.cn/#/works/detail?unique=WJoYomLPg0JOYs8GazDVrw

CSDN: https://blog.csdn.net/weixin_50679163?type=edu

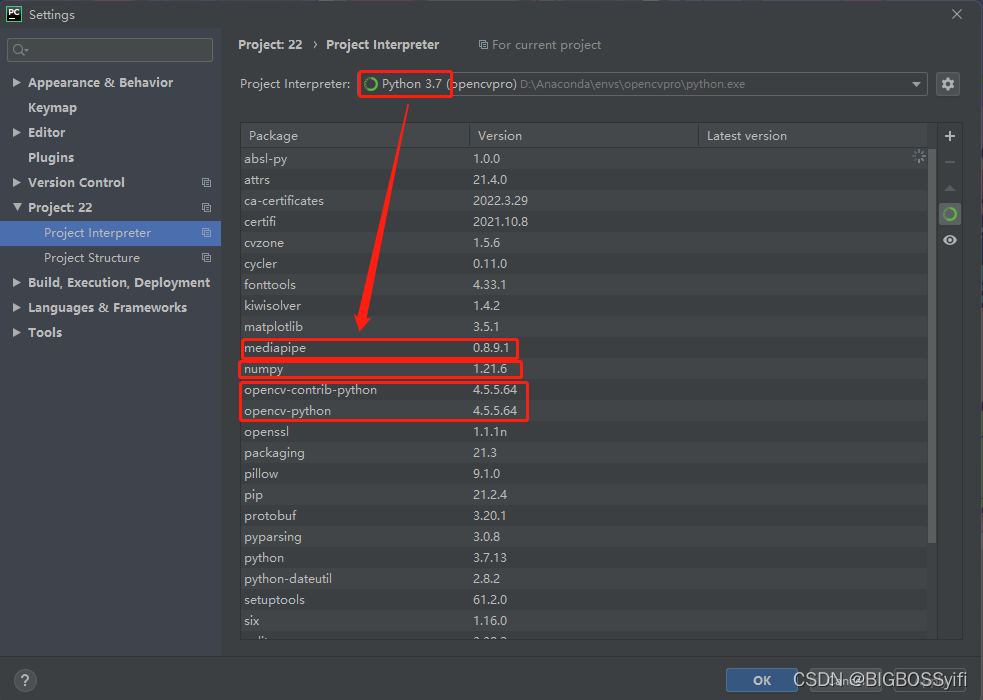

Python 3.7

Mediapipe 0.8.9.1

Numpy 1.21.6

OpenCV-Python 4.5.5.64

OpenCV-contrib-Python 4.5.5.64

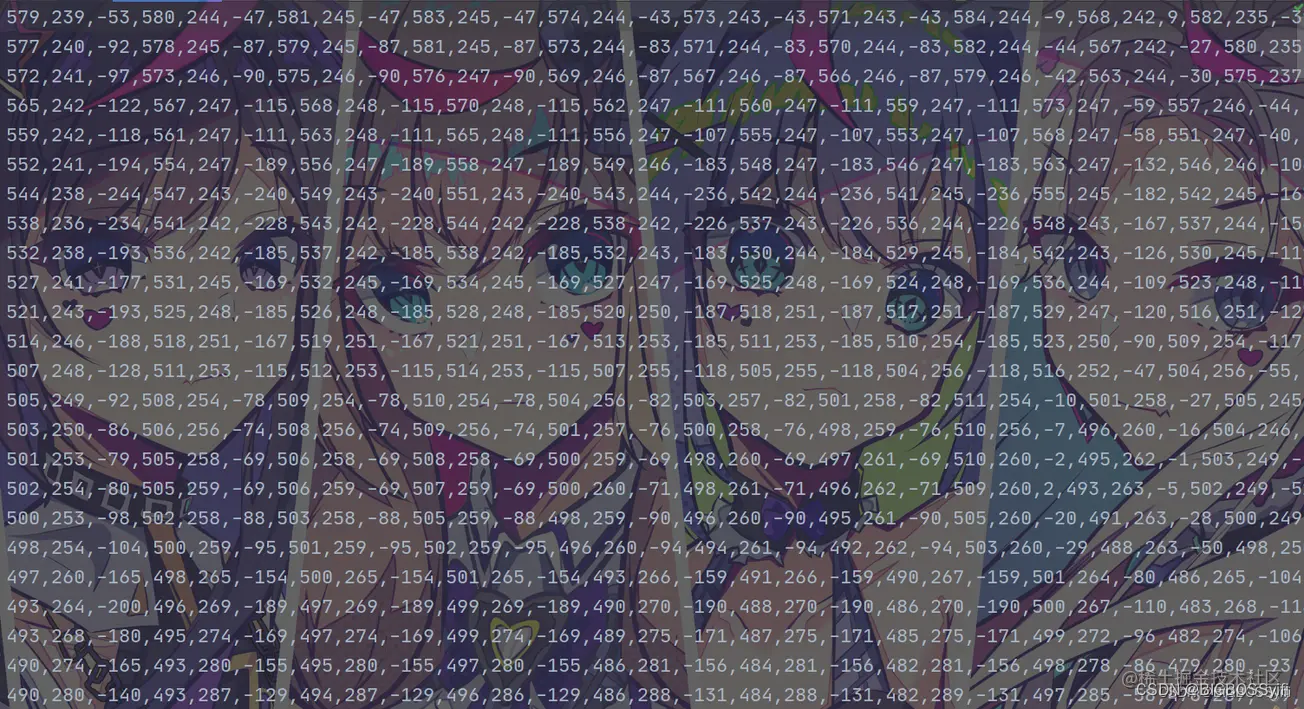

**Body data file**

In this part we read the person in the video, compute the position of each feature point, and save the data. This data is essential, because it is what we later import into Unity as motion data.
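To make the file format concrete, here is a minimal sketch of how one frame of landmarks can be flattened into a line of text and read back. It follows the format used by the capture script later in this article (x, flipped y, z, each followed by a comma). The helper names `frame_to_line` and `line_to_points`, the `[id, x, y, z]` landmark layout, and the sample values are assumptions made for illustration.

```python
def frame_to_line(landmarks, image_height):
    """Flatten [id, x, y, z] landmarks into 'x,y,z,x,y,z,...'.

    y is flipped (image_height - y) because image rows grow downwards,
    while Unity's Y axis points up.
    """
    line = ''
    for lm in landmarks:
        line += f'{lm[1]},{image_height - lm[2]},{lm[3]},'
    return line


def line_to_points(line):
    """Parse one saved line back into a list of (x, y, z) tuples."""
    # The trailing comma produces one empty field, which is skipped here.
    values = [float(v) for v in line.split(',') if v != '']
    return [tuple(values[i:i + 3]) for i in range(0, len(values), 3)]


# Hypothetical sample: two landmarks in a 480-pixel-high image
sample = [[0, 320, 100, -50], [1, 330, 90, -45]]
line = frame_to_line(sample, image_height=480)
print(line)                  # 320,380,-50,330,390,-45,
print(line_to_points(line))  # [(320.0, 380.0, -50.0), (330.0, 390.0, -45.0)]
```

Each line of the saved file holds one video frame, so the number of lines equals the number of frames in which a body was detected.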

**Camera capture part:**

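    import cv2

    cap = cv2.VideoCapture(0)  # camera index: 0 = built-in camera (laptop), 1 = USB camera 1, 2 = USB camera 2

    while True:
        success, img = cap.read()
        if not success:  # stop if no frame could be read
            break
        imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)  # convert BGR to RGB (MediaPipe expects RGB input)
        cv2.imshow("HandsImage", img)  # show the frame in an OpenCV window
        if cv2.waitKey(1) & 0xFF == ord('q'):  # wait 1 ms for a key press; q quits
            break

    cap.release()
    cv2.destroyAllWindows()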

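    import time
    import cv2

    cap = cv2.VideoCapture(0)
    pTime = 0  # timestamp of the previous frame

    while True:
        success, img = cap.read()
        if not success:
            break
        # Frame-rate calculation: FPS is the inverse of the time between two frames
        cTime = time.time()
        fps = 1 / (cTime - pTime)
        pTime = cTime
        # Draw the FPS value: position, font, scale, colour and thickness
        cv2.putText(img, str(int(fps)), (10, 70), cv2.FONT_HERSHEY_PLAIN, 3,
                    (255, 0, 255), 3)
        cv2.imshow("HandsImage", img)
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

**Body motion capture:**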

while True:

if bboxInfo:

lmString = ''

for lm in lmList:

lmString += f'{lm[1]},{img.shape[0] - lm[2]},{lm[3]},'

posList.append(lmString)import cv2

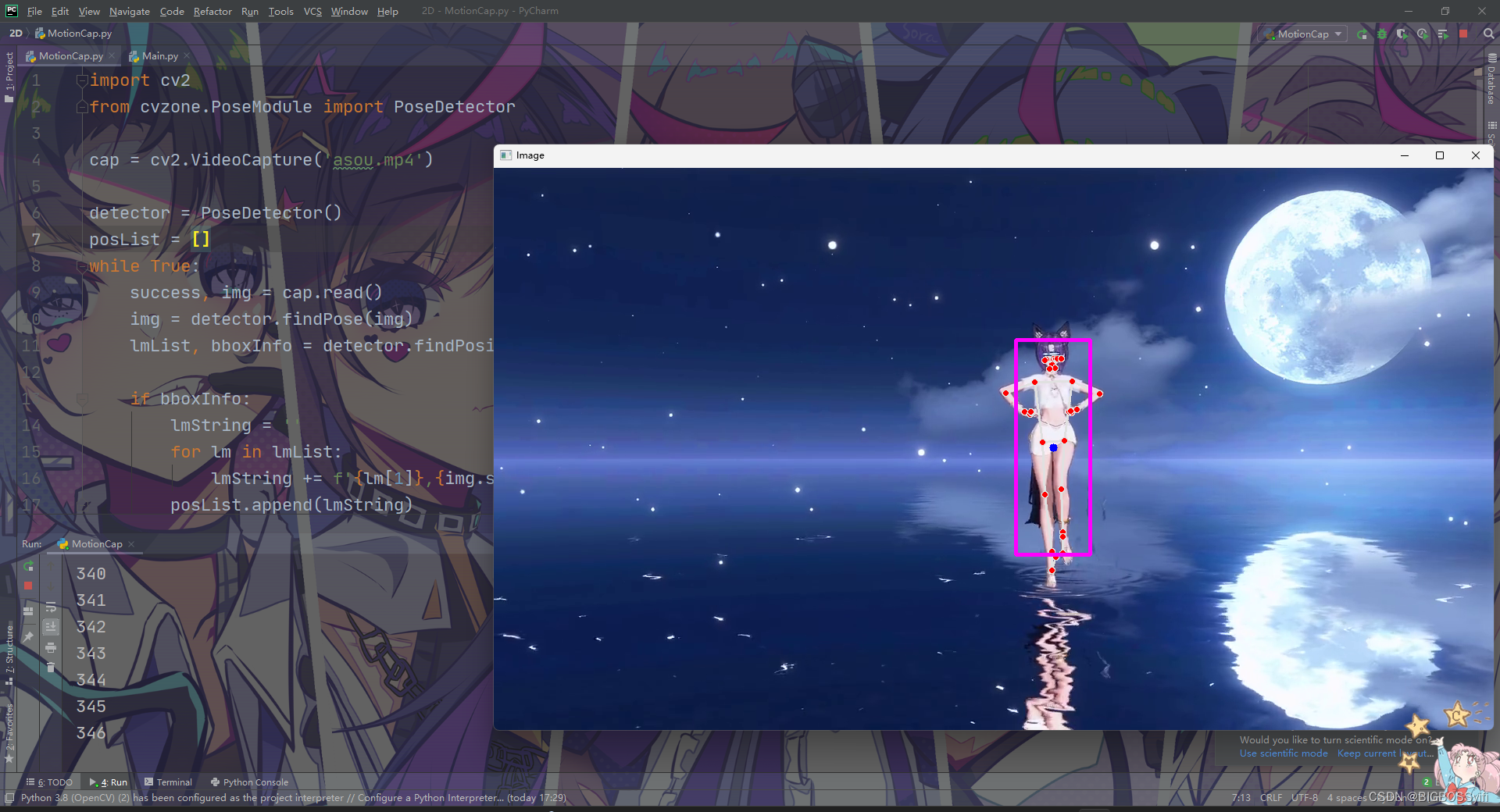

from cvzone.PoseModule import PoseDetector

# 读取cv来源,也可以调用摄像头使用

cap = cv2.VideoCapture('asoul.mp4')

detector = PoseDetector()

posList = []

while True:

success, img = cap.read()

img = detector.findPose(img)

lmList, bboxInfo = detector.findPosition(img)

if bboxInfo:

lmString = ''

for lm in lmList:

lmString += f'{lm[1]},{img.shape[0] - lm[2]},{lm[3]},'

posList.append(lmString)

cv2.imshow("Image", img)

key = cv2.waitKey(1)

if key == ord('s'):

with open("MotionFile.txt", 'w') as f: # 将动作数据保存下来

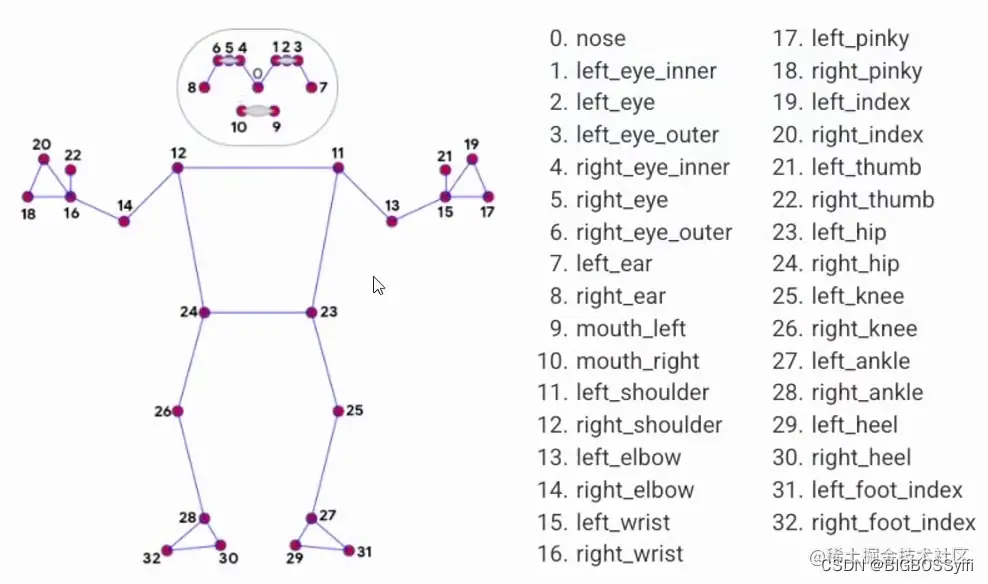

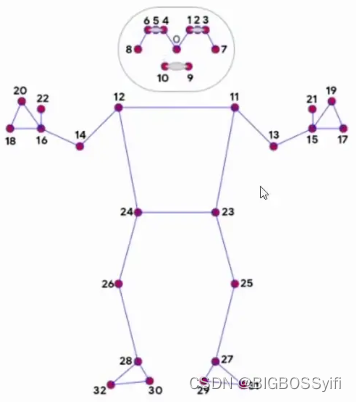

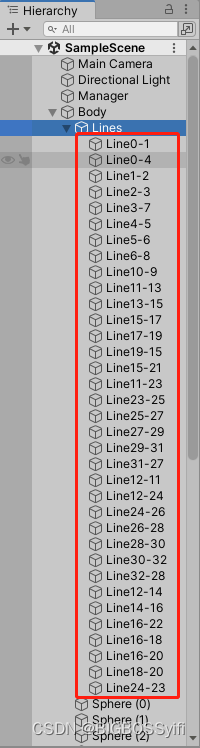

f.writelines(["%s\n" % item for item in posList])In Unity, we need to build a model of the character, here we need a 33 Sphere for the ** body feature points ** and 33 Cube for the middle stand
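The article does not list which Spheres each connecting piece should join. As a starting point, the sketch below gives a partial set of limb and torso connections using MediaPipe's pose landmark indices (0 to 32). The names `LANDMARK_NAMES`, `LIMB_CONNECTIONS` and `check_connections` are illustrative, and the list is a subset rather than the full skeleton.

```python
# Names of the MediaPipe pose landmarks used below (indices follow MediaPipe's numbering)
LANDMARK_NAMES = {
    11: 'left_shoulder', 12: 'right_shoulder',
    13: 'left_elbow', 14: 'right_elbow',
    15: 'left_wrist', 16: 'right_wrist',
    23: 'left_hip', 24: 'right_hip',
    25: 'left_knee', 26: 'right_knee',
    27: 'left_ankle', 28: 'right_ankle',
}

# (origin, destination) index pairs; a partial subset, not the full skeleton
LIMB_CONNECTIONS = [
    (11, 12), (11, 23), (12, 24), (23, 24),  # torso
    (11, 13), (13, 15),                      # left arm
    (12, 14), (14, 16),                      # right arm
    (23, 25), (25, 27),                      # left leg
    (24, 26), (26, 28),                      # right leg
]


def check_connections(connections, landmark_count=33):
    """Return True if every pair joins two different, valid landmark indices."""
    return all(
        0 <= a < landmark_count and 0 <= b < landmark_count and a != b
        for a, b in connections
    )


for origin, destination in LIMB_CONNECTIONS:
    print(f'{LANDMARK_NAMES[origin]} -> {LANDMARK_NAMES[destination]}')
```

Each pair can be mapped to one line object in Unity by assigning the Sphere with the first index as its `origin` and the Sphere with the second index as its `destination`.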

Below is the C# script attached to each Line. Its job is to **connect two feature points with the Line**.

    using System.Collections;
    using System.Collections.Generic;
    using UnityEngine;

    public class LineCode : MonoBehaviour
    {
        LineRenderer lineRenderer;
        public Transform origin;
        public Transform destination;

        void Start()
        {
            lineRenderer = GetComponent<LineRenderer>();
            lineRenderer.startWidth = 0.1f;
            lineRenderer.endWidth = 0.1f;
        }

        // Connect the two points
        void Update()
        {
            lineRenderer.SetPosition(0, origin.position);
            lineRenderer.SetPosition(1, destination.position);
        }
    }

The next script **reads the motion data that was recognized and saved above** and **assigns each frame's coordinates to the corresponding Sphere**, so that the feature points move with the character in the video.

    using System.Collections;
    using System.Collections.Generic;
    using System.Linq;
    using UnityEngine;
    using System.Threading;

    public class AnimationCode : MonoBehaviour
    {
        public GameObject[] Body;
        List<string> lines;
        int counter = 0;

        void Start()
        {
            // Read the motion data file MotionFile.txt
            lines = System.IO.File.ReadLines("Assets/MotionFile.txt").ToList();
        }

        void Update()
        {
            string[] points = lines[counter].Split(',');

            // Loop over every Sphere point (33 landmarks, indices 0 to 32)
            for (int i = 0; i <= 32; i++)
            {
                float x = float.Parse(points[0 + (i * 3)]) / 100;
                float y = float.Parse(points[1 + (i * 3)]) / 100;
                float z = float.Parse(points[2 + (i * 3)]) / 300;
                Body[i].transform.localPosition = new Vector3(x, y, z);
            }

            counter += 1;
            if (counter == lines.Count) { counter = 0; }  // restart from the first frame
            Thread.Sleep(30);  // crude playback throttle: blocks the main thread for 30 ms per frame
        }
    }
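The parsing done in `AnimationCode.Update` can be checked outside Unity. The following Python sketch mirrors the same steps: split a line on commas, read 33 triples, divide x and y by 100 and z by 300, and wrap the frame counter at the end of the file. `parse_frame` and `next_frame_index` are hypothetical names, and the sample line is synthetic.

```python
LANDMARK_COUNT = 33  # number of Spheres, indices 0 to 32


def parse_frame(line, xy_scale=100.0, z_scale=300.0):
    """Turn one saved line into 33 scaled (x, y, z) positions."""
    points = line.split(',')
    positions = []
    for i in range(LANDMARK_COUNT):
        x = float(points[0 + i * 3]) / xy_scale
        y = float(points[1 + i * 3]) / xy_scale
        z = float(points[2 + i * 3]) / z_scale
        positions.append((x, y, z))
    return positions


def next_frame_index(counter, frame_count):
    """Advance the frame counter and wrap to 0 after the last frame."""
    return (counter + 1) % frame_count


# Synthetic line: every landmark at x=200, y=100, z=-300
sample_line = '200,100,-300,' * LANDMARK_COUNT
positions = parse_frame(sample_line)
print(len(positions))          # 33
print(positions[0])            # (2.0, 1.0, -1.0)
print(next_frame_index(2, 3))  # 0
```

Running a real `MotionFile.txt` line through `parse_frame` before importing it is a quick way to confirm that every line contains all 99 values the Unity script expects.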