This repository contains Keras implementations of the architectures listed below. For a quick theoretical introduction to deep learning for NLP, I encourage you to have a look at my notes.

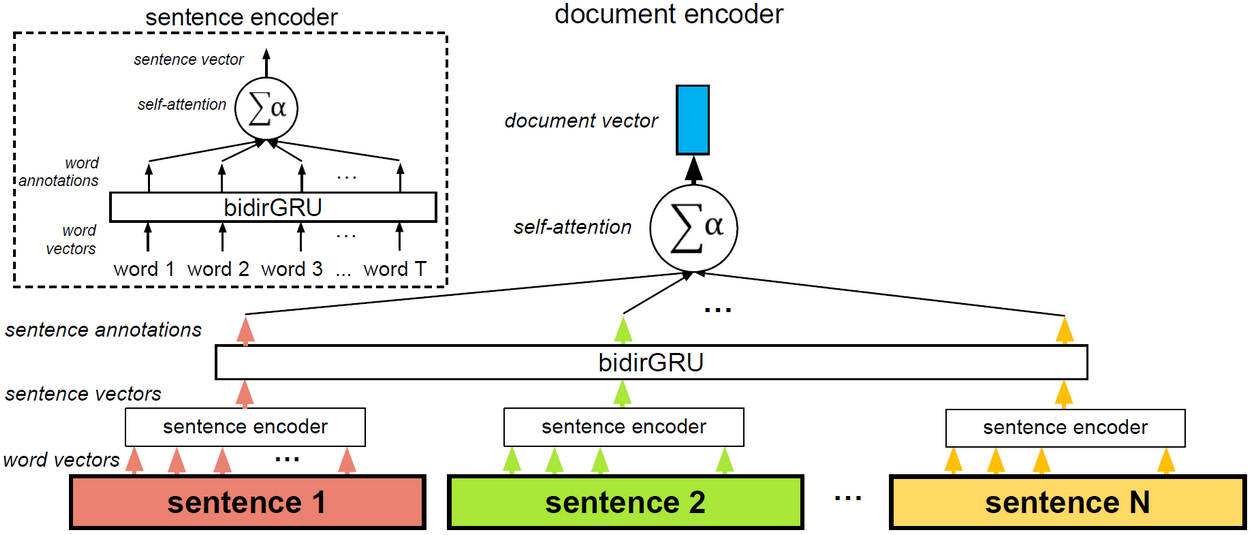

A RAM-friendly implementation of the model introduced by Yang et al. (2016), with step-by-step explanations and links to relevant resources: https://github.com/Tixierae/deep_learning_NLP/blob/master/HAN/HAN_final.ipynb

In my experiments on the Amazon review dataset (3,650,000 documents, 5 classes), I reach 62.6% accuracy after 8 epochs, and 63.6% accuracy (the accuracy reported in the paper) after 42 epochs. Each epoch takes about 20 minutes on my Titan X GPU. I deployed the model as a web app. As shown in the image below, you can paste your own review and visualize how the model pays attention to words and sentences.
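To make the attention weights concrete, here is a minimal pure-Python sketch of the attention pooling described by Yang et al. (2016): each hidden state is passed through a one-layer tanh projection, scored against a learned context vector, and the softmax-normalized scores weight the sum of the hidden states. The function name `attention_pool` and the toy parameter values are assumptions made for illustration; this is not the code used in the notebook or the web app.

```python
import math


def attention_pool(hidden_states, W, b, context):
    """Pool T hidden states into one vector with attention.

    hidden_states: list of T vectors (lists of d floats), e.g. bidirectional GRU outputs.
    W: projection matrix as a list of d_a rows of d floats; b: list of d_a floats.
    context: learned context vector (list of d_a floats).
    Returns (pooled_vector, attention_weights).
    """
    scores = []
    for h in hidden_states:
        # u_t = tanh(W h_t + b)
        u = [math.tanh(sum(w * x for w, x in zip(row, h)) + b_i)
             for row, b_i in zip(W, b)]
        # Unnormalized score: dot product between u_t and the context vector.
        scores.append(sum(u_i * c_i for u_i, c_i in zip(u, context)))

    # Softmax over time steps (shifted by the max for numerical stability).
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # The pooled vector is the attention-weighted sum of the hidden states.
    dim = len(hidden_states[0])
    pooled = [sum(a * h[j] for a, h in zip(weights, hidden_states))
              for j in range(dim)]
    return pooled, weights


# Toy example with hand-picked (not learned) parameters.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W = [[1.0, 0.0], [0.0, 1.0]]
b = [0.0, 0.0]
context = [1.0, 1.0]
pooled, weights = attention_pool(states, W, b, context)
```

In the hierarchical model, the same mechanism is applied twice: over the words of each sentence, then over the resulting sentence vectors. The `weights` returned at each level are the kind of quantity a visualization can display.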

The notebook makes use of the following concepts:

- batch training. Batches are loaded from disk and passed to the model one by one with a generator. This way, it's possible to train on datasets that are too big to fit in RAM.

- bucketing. To have batches that are as dense as possible and make the most of each tensor product, the batches contain documents of similar sizes.

- cyclical learning rate and cyclical momentum schedules, as in Smith (2017) and Smith (2018). The cyclical learning rate schedule is a new, promising approach to optimization in which the learning rate repeatedly increases and decreases within a pre-defined interval instead of only decreasing. It worked better than Adam and SGD alone for me¹.

- self-attention (aka inner attention). We use the formulation of the original paper.

- bidirectional RNN

- Gated Recurrent Unit (GRU)
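Batch training and bucketing can be sketched together as follows. This is a simplified illustration, not the notebook's code: it assumes each document is a flat list of integer token ids with 0 reserved for padding (the actual model works on documents split into sentences), and the function names, JSON file format, and file naming are all hypothetical choices.

```python
import json
import os
import random


def write_bucketed_batches(docs, labels, batch_size, out_dir):
    """Group documents of similar length into batches and save each batch to disk.

    docs: list of documents, each a list of integer token ids (0 = padding).
    Returns the list of batch file paths.
    """
    os.makedirs(out_dir, exist_ok=True)
    # Sorting by length puts documents of similar size next to each other.
    order = sorted(range(len(docs)), key=lambda i: len(docs[i]))
    paths = []
    for start in range(0, len(order), batch_size):
        idxs = order[start:start + batch_size]
        # Pad only up to the longest document in this batch, which keeps it dense.
        max_len = max(len(docs[i]) for i in idxs)
        batch = {
            "x": [docs[i] + [0] * (max_len - len(docs[i])) for i in idxs],
            "y": [labels[i] for i in idxs],
        }
        path = os.path.join(out_dir, "batch_%05d.json" % len(paths))
        with open(path, "w") as f:
            json.dump(batch, f)
        paths.append(path)
    return paths


def batch_generator(paths, shuffle=True, seed=0):
    """Yield (x, y) batches forever, reading a single file from disk at a time."""
    rng = random.Random(seed)
    paths = list(paths)
    while True:
        if shuffle:
            rng.shuffle(paths)  # new batch order on every pass over the data
        for path in paths:
            with open(path) as f:
                batch = json.load(f)
            yield batch["x"], batch["y"]
```

Only one batch is held in memory at any time, so the dataset size is bounded by disk space rather than RAM. Because the generator never terminates, the training loop has to be told how many batches make up one epoch (`len(paths)` here), and a real pipeline would convert each batch to arrays before passing it to the model.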

¹ There is more and more evidence that adaptive optimizers like Adam, Adagrad, etc. converge faster but generalize poorly compared to SGD-based approaches. For example: Wilson et al. (2018), this blogpost. Traditional SGD is very slow, but a cyclical learning rate schedule can bring a significant speedup, and sometimes even leads to better performance.
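As an illustration of the cyclical schedules, here is a minimal sketch of the triangular policy from Smith (2017), with momentum cycled in the opposite direction of the learning rate as suggested in Smith (2018). The function names and the default bounds (`base_lr`, `max_lr`, `base_m`, `max_m`) are placeholder assumptions, not the values used in the notebook.

```python
import math


def triangular_scale(iteration, step_size):
    """Return a value in [0, 1] that ramps 0 -> 1 -> 0 over 2 * step_size iterations."""
    cycle = math.floor(1 + iteration / (2 * step_size))
    x = abs(iteration / step_size - 2 * cycle + 1)
    return max(0.0, 1.0 - x)


def cyclical_schedule(iteration, step_size,
                      base_lr=0.01, max_lr=0.1, base_m=0.85, max_m=0.95):
    """Return (learning_rate, momentum) for a given training iteration.

    The learning rate rises from base_lr to max_lr and back within each cycle.
    Momentum moves the other way: it is highest when the learning rate is lowest.
    """
    scale = triangular_scale(iteration, step_size)
    lr = base_lr + (max_lr - base_lr) * scale
    momentum = max_m - (max_m - base_m) * scale
    return lr, momentum


# Print the schedule over one full cycle (8 iterations for step_size=4).
for it in range(9):
    lr, m = cyclical_schedule(it, step_size=4)
    print(it, round(lr, 4), round(m, 4))
```

In practice, such a function would be evaluated once per batch and its outputs written to the SGD optimizer's learning rate and momentum before each update.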

An implementation of the 1D Convolutional Neural Network of Kim (2014) for short text classification: https://github.com/Tixierae/deep_learning_NLP/blob/master/cnn_imdb.ipynb

Agreed, this is not for NLP, but an implementation can be found here: https://github.com/Tixierae/deep_learning_NLP/blob/master/mnist_cnn.py. I reach 99.45% accuracy on MNIST with it.

If you use some of the code in this repository, please cite:

    @article{tixier2018notes,
      title={Notes on Deep Learning for NLP},
      author={Tixier, Antoine J.-P.},
      journal={arXiv preprint arXiv:1808.09772},
      year={2018}
    }