Issue

There are strange things going on in dataset_synthesis/nyu_uw_syn_script.py. If this is the code that was used to generate Figure 4 in the paper and train the model, as appears to be the case after running it, then the results are not valid.

1. Non-standard use of the N_lambda values

2. Random number ranges differ from those in the cited work

I believe (2) is an attempt to correct for (1). Regardless, the result is that the closer regions of the images are water-colored (blue or green), while the farther regions are air-colored, which is non-physical and the opposite of the "fog" effect that this model should generate. See examples below.

Probable Root Cause

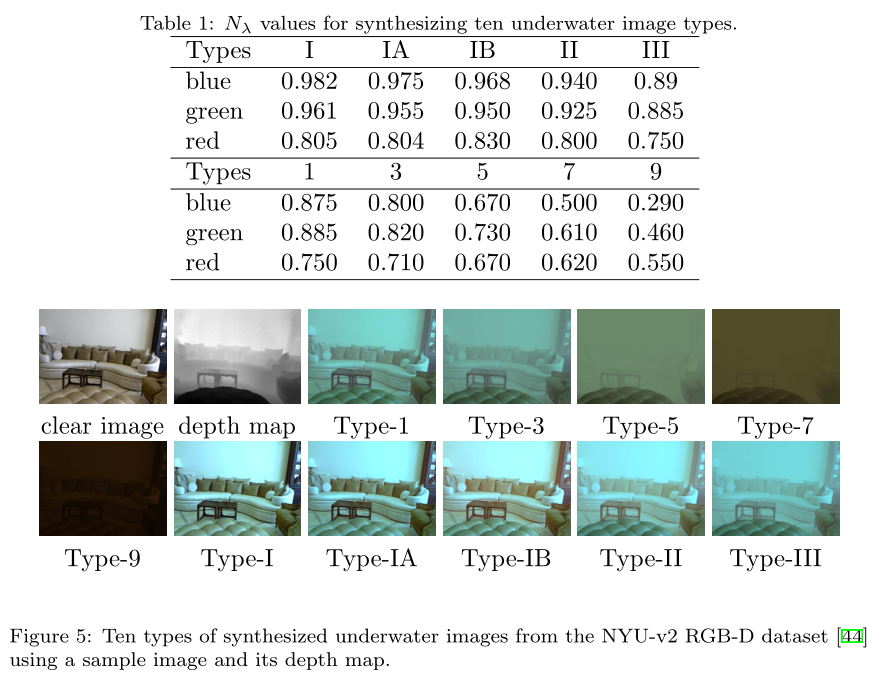

The dataset synthesis is based on this project, cited through Deep Underwater Image Enhancement (DUIE, [3] in the paper). The relevant "magic numbers" N_lambda (Table I in DUIE) come from a textbook, Hydrologic Optics (1975), which I was not able to find online. Neither citation provides code for the version of the well-studied atmospheric fog model that uses this particular N_lambda parameterization, and the papers do not give quite enough information to reproduce it: there is an ambiguity in going from N_lambda(d(x)), a function of depth (Eq. 2 in both DUIE and All-In-One), to N_lambda as a scalar value (Table I in DUIE).

nyu_uw_syn_script.py has T(x) = N_lambda * d(x) (after appropriate scaling). Not being able to find the 1975 book, I turned to a newer paper, Domain Adaptive Adversarial Learning Based on Physics Model Feedback for Underwater Image Enhancement, which fully specifies the model, including the random ranges (Table I) and has T(x) = N_lambda ^ d(x) (Eq. 2). I'd guess that the existing formula in nyu_uw_syn_script.py came from accidentally omitting the parentheses while copying Eq. 2 from DUIE.
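To make the difference between the two formulas concrete, here is a minimal sketch. It assumes the standard fog-style compositing I(x) = J(x) T(x) + B (1 - T(x)); the N_lambda values, depths, and colors are hypothetical placeholders chosen for illustration, not the entries from Table I in DUIE, and `composite` is my own helper rather than a function from the script.

```python
import numpy as np

# Hypothetical per-channel values in (0, 1); NOT the Table I entries from DUIE.
n_lambda = np.array([0.70, 0.90, 0.95])

# Hypothetical scaled depths, ordered from near to far.
depth = np.array([0.0, 0.5, 1.0, 2.0, 4.0])

# Product form, as in the script: transmission is 0 at the camera and grows with distance.
t_product = n_lambda[None, :] * depth[:, None]

# Power form: transmission is 1 at the camera and decays with distance.
t_power = n_lambda[None, :] ** depth[:, None]


def composite(scene, background, transmission):
    """Fog-style compositing (assumed here): I = J * T + B * (1 - T)."""
    return scene * transmission + background * (1.0 - transmission)


scene = np.array([0.8, 0.8, 0.8])       # hypothetical gray object color
background = np.array([0.1, 0.4, 0.6])  # hypothetical water (veiling light) color

img_product = composite(scene, background, t_product)
img_power = composite(scene, background, t_power)

# Nearest pixel (depth 0): the product form shows pure water color,
# while the power form shows the unmodified scene color.
print(img_product[0], img_power[0])
```

Under these assumptions, the product form gives the nearest pixel the water color and lets the transmission exceed 1 at larger depths, which matches the inverted "fog" seen in the examples. The power form keeps the transmission in (0, 1] and makes it fall off with distance.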

Fix

For a quick fix, replace lines 106-109 in the script:

    T_x = np.ndarray((480, 640, 3))
    T_x[:,:,0] = N_lambda[water_type][2] * depth
    T_x[:,:,1] = N_lambda[water_type][1] * depth
    T_x[:,:,2] = N_lambda[water_type][0] * depth

which is equivalent to

    T_x = np.flip(N_lambda[water_type])[None, None, :] * depth[:, :, None]

with:

    T_x = np.flip(N_lambda[water_type])[None, None, :] ** depth[:, :, None]

This does not replicate DUIE, because the random ranges still differ, but it produces acceptable results.
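As a sanity check on the claimed equivalence, the following sketch compares the per-channel assignment with the broadcast one-liner, then applies the power form. The function names, the `n_lambda_row` values, and the random depth map are all hypothetical stand-ins; in the script the corresponding inputs would be `N_lambda[water_type]` and `depth`.

```python
import numpy as np


def transmission_loop(n_lambda_row, depth):
    """Per-channel product form, with the reversed channel order used in the script."""
    t_x = np.empty(depth.shape + (3,))
    t_x[:, :, 0] = n_lambda_row[2] * depth
    t_x[:, :, 1] = n_lambda_row[1] * depth
    t_x[:, :, 2] = n_lambda_row[0] * depth
    return t_x


def transmission_product(n_lambda_row, depth):
    """Broadcast version of the same product form."""
    return np.flip(n_lambda_row)[None, None, :] * depth[:, :, None]


def transmission_power(n_lambda_row, depth):
    """Proposed fix: raise N_lambda to the depth instead of multiplying."""
    return np.flip(n_lambda_row)[None, None, :] ** depth[:, :, None]


rng = np.random.default_rng(0)
n_lambda_row = np.array([0.95, 0.90, 0.70])      # hypothetical, not from Table I
depth = rng.uniform(0.5, 5.0, size=(480, 640))   # hypothetical scaled depth map

same = np.allclose(transmission_loop(n_lambda_row, depth),
                   transmission_product(n_lambda_row, depth))
t_fixed = transmission_power(n_lambda_row, depth)

print(same, t_fixed.shape, t_fixed.min(), t_fixed.max())
```

With values of N_lambda in (0, 1) and positive depths, the fixed transmission stays strictly between 0 and 1, so no extra clipping should be needed for that term.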

The parameters could be further tweaked to improve quality, but I would advise other researchers to do one of the following:

- Write your own dataset synthesis code following Domain Adaptive Adversarial Learning Based on Physics Model Feedback for Underwater Image Enhancement

- Use the pre-generated NYUv2-Jerlov dataset cited above at https://li-chongyi.github.io/proj_underwater_image_synthesis.html

There's a lot of other excellent stuff in this repo, but this was definitely a stumbling block for my work, so I'd welcome input from the authors or other users on how this affects the results! I decided that the 2 options I presented were higher-value than submitting a PR to fix this code, though someone else certainly could write one up for the sake of replicability.