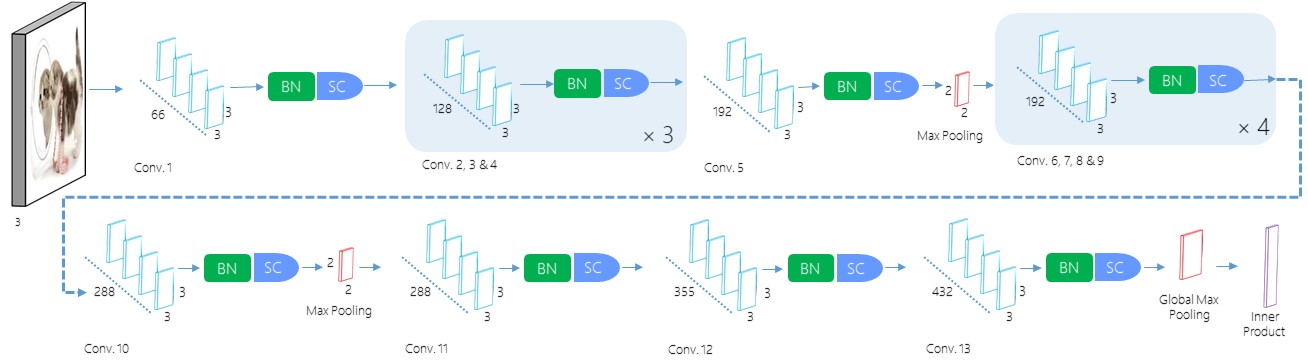

This repository translates SimpNet into an R-flavored Keras implementation. SimpNet is a deep convolutional neural network architecture introduced in:

Towards Principled Design of Deep Convolutional Networks: Introducing SimpNet

Seyyed Hossein Hasanpour, Mohammad Rouhani, Mohsen Fayyaz, Mohammad Sabokrou and Ehsan Adeli

arXiv pre-print: Hasanpour et al. 2018 on arXiv

This is a link to the original SimpNet Github repository

From the authors' abstract:

We empirically show that SimpNet provides a good trade-off between the computation/memory efficiency and the accuracy solely based on these primitive but crucial principles. SimpNet outperforms the deeper and more complex architectures such as VGGNet, ResNet, WideResidualNet etc., on several well-known benchmarks, while having 2 to 25 times fewer number of parameters and operations. We obtain state-of-the-art results (in terms of a balance between the accuracy and the number of involved parameters) on standard data sets, such as CIFAR10, CIFAR100, MNIST and SVHN.

This repo is an attempt to translate the SimpNet architecture into the Keras API via R and the RStudio flavor of Keras.

So far I have translated:

* SimpNetV2 for MNIST
  * MNIST_SimpleNet_GP_13L_drpall_5Mil_66_maxdrp
* SimpNetV2 for CIFAR10
  * CIFAR10_SimpleNet_GP_13L_drpall_8Mil_66_DRP_After_Pooling
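As a rough illustration of what translating a convolutional architecture into R Keras looks like, the sketch below stacks two small convolutional blocks and a classifier head. It is not the SimpNet definition from this repo: the helper `conv_block()` is a hypothetical name, the filter counts, dropout rates and depth are placeholders, and reading "GP" in the model names as global pooling is an assumption. It also assumes the `keras` R package with a working TensorFlow backend.

```r
library(keras)

# Hypothetical helper: one convolution -> batch norm -> ReLU -> dropout block.
# The block order and all hyperparameters are illustrative assumptions,
# not values taken from the SimpNet paper or from this repository.
conv_block <- function(model, filters, drop_rate, ...) {
  model %>%
    layer_conv_2d(filters = filters, kernel_size = c(3, 3),
                  padding = "same", ...) %>%
    layer_batch_normalization() %>%
    layer_activation("relu") %>%
    layer_dropout(rate = drop_rate)
}

# A toy two-block network for 28x28 grayscale inputs (MNIST-sized images).
model <- keras_model_sequential() %>%
  conv_block(filters = 32, drop_rate = 0.2, input_shape = c(28, 28, 1)) %>%
  layer_max_pooling_2d(pool_size = c(2, 2)) %>%
  conv_block(filters = 64, drop_rate = 0.2) %>%
  layer_global_max_pooling_2d() %>%            # assumed meaning of "GP"
  layer_dense(units = 10, activation = "softmax")

# Optimizer and loss are placeholder choices for the sketch.
model %>% compile(
  optimizer = "adam",
  loss = "categorical_crossentropy",
  metrics = "accuracy"
)
```

Wrapping the repeated layer pattern in a helper keeps a 13-layer definition short and makes it easier to compare block by block against the original Caffe prototxt.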

For the original results of SimpNet in its native Caffe implementation, see the article and the GitHub repository. The results below come from my translation and may not fully reflect the native implementation. I will keep trying to match the published results.

MNIST results from my translation:

| Metric | Value |
|---|---|
| Epochs | 10 |
| Test loss | 0.01990014 |
| Test accuracy | 0.9949 |

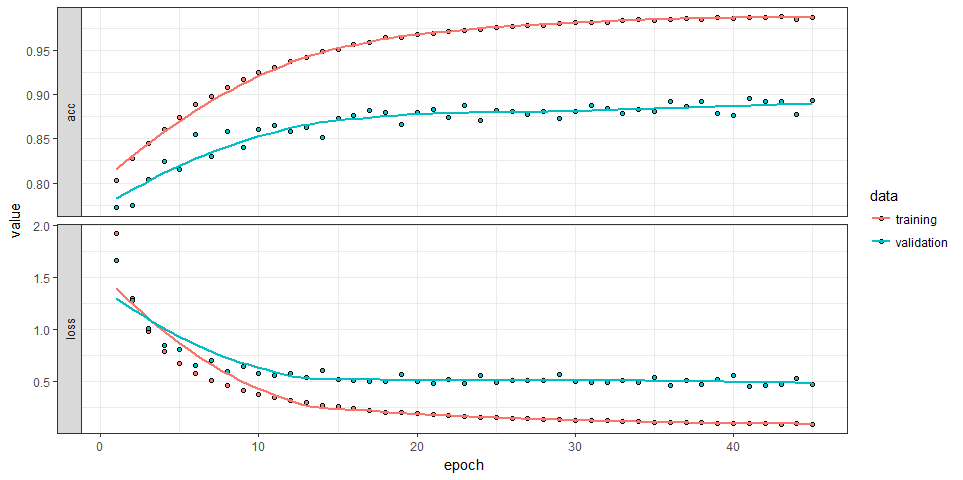

Hasanpour et al. 2018 report 95.89% accuracy on CIFAR10. My translation has not reached that yet, and I will continue to work on it.

| Metric | Value |
|---|---|
| Epochs | 50 |
| Test loss | 0.5701714 |
| Test accuracy | 0.887 |
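To put the CIFAR10 numbers side by side, here is a small base R calculation of the gap between the published accuracy and this translation. The variable names are only for illustration; the two input values are the ones quoted above.

```r
# Published CIFAR10 accuracy from Hasanpour et al. 2018, in percent.
published_acc <- 95.89
# Test accuracy of this translation after 50 epochs, converted to percent.
translated_acc <- 0.887 * 100

# Absolute gap in percentage points, and the ratio of the two error rates.
gap_points <- published_acc - translated_acc
error_ratio <- (100 - translated_acc) / (100 - published_acc)

cat(sprintf("Accuracy gap: %.2f percentage points\n", gap_points))
cat(sprintf("Error rate ratio (translation / published): %.2f\n", error_ratio))
```

The gap is about 7.19 percentage points, which corresponds to an error rate of 11.3% for the translation against 4.11% for the published model.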