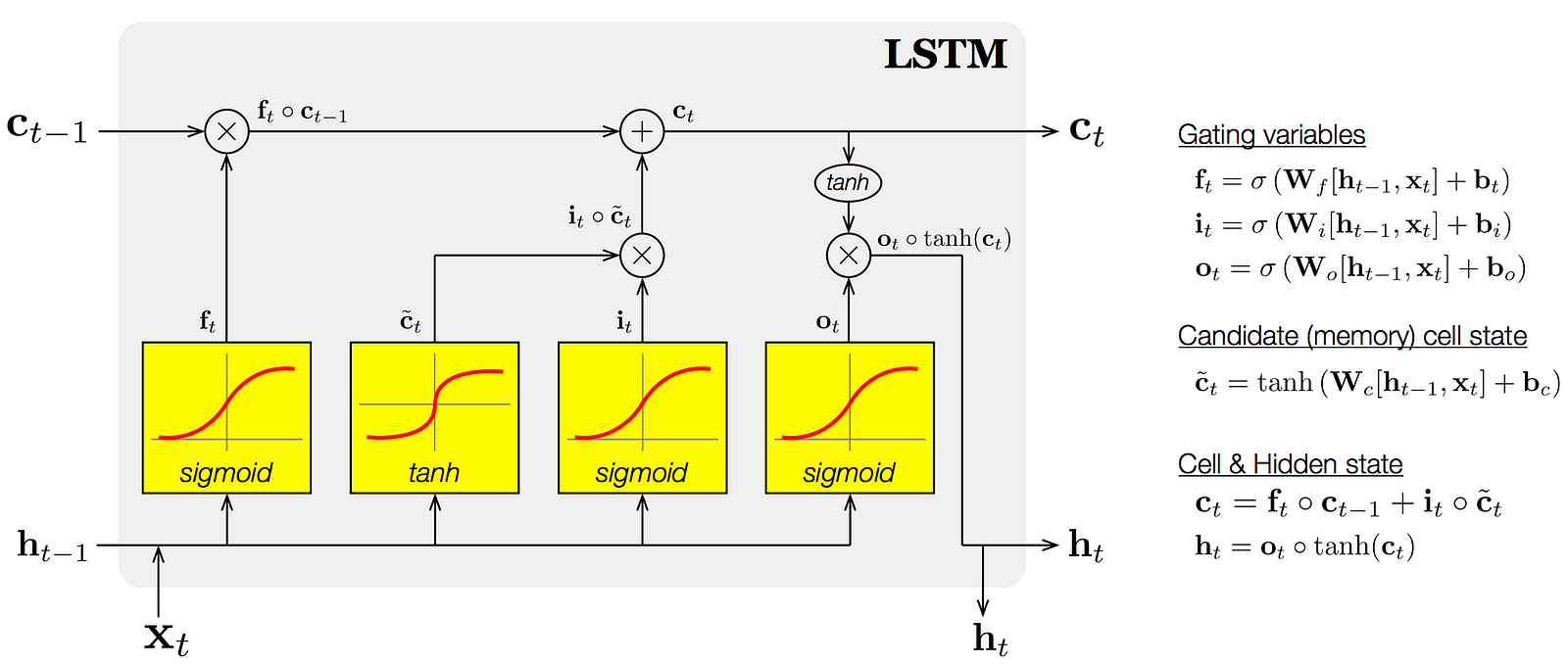

A long short-term memory (LSTM) unit is a building block for the layers of a recurrent neural network (RNN). An RNN composed of LSTM units is often called an LSTM network. A common LSTM unit is composed of a cell, an input gate, an output gate, and a forget gate. The cell is responsible for "remembering" values over arbitrary time intervals, hence the word "memory" in LSTM. Each of the three gates can be thought of as a "conventional" artificial neuron, as in a multi-layer (or feedforward) neural network: it computes an activation of a weighted sum of its inputs.

An LSTM is well suited to classifying, processing, and predicting time series when there are time lags of unknown size and duration between important events. LSTMs were developed to deal with the exploding and vanishing gradient problems that arise when training traditional RNNs.

- Forget gate "f" (a neural network with sigmoid)
- Candidate layer "C" (a neural network with tanh)
- Input gate "I" (a neural network with sigmoid)
- Output gate "O" (a neural network with sigmoid)
- Hidden state "H" (a vector)
- Memory state "C" (a vector)
- Inputs to the LSTM cell at any step are X_t (current input), H_{t-1} (previous hidden state), and C_{t-1} (previous memory state).
- Outputs from the LSTM cell are H_t (current hidden state) and C_t (current memory state).
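
To make the roles of these pieces concrete, here is a minimal sketch of a single LSTM step in plain Python. It assumes the standard formulation, in which the memory state is updated as `C_t = f * C_{t-1} + I * candidate` and the hidden state as `H_t = O * tanh(C_t)`; the list above does not spell these update rules out, so treat them as an assumption rather than this article's definition. The names `lstm_step`, `dense`, `init_params`, and the `params` dictionary layout are hypothetical and chosen only for illustration.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dense(weights, bias, inputs, activation):
    """A layer of conventional neurons: activation(weighted sum + bias)."""
    return [activation(sum(w * v for w, v in zip(row, inputs)) + b)
            for row, b in zip(weights, bias)]


def init_params(input_size, hidden_size, seed=0):
    """Small random weights and zero biases for the four layers (f, i, c, o)."""
    rng = random.Random(seed)
    params = {}
    for gate in "fico":
        params["W" + gate] = [
            [rng.uniform(-0.1, 0.1) for _ in range(hidden_size + input_size)]
            for _ in range(hidden_size)
        ]
        params["b" + gate] = [0.0] * hidden_size
    return params


def lstm_step(x_t, h_prev, c_prev, params):
    """Map (X_t, H_{t-1}, C_{t-1}) to (H_t, C_t)."""
    z = h_prev + x_t  # concatenate previous hidden state and current input

    f = dense(params["Wf"], params["bf"], z, sigmoid)         # forget gate
    i = dense(params["Wi"], params["bi"], z, sigmoid)         # input gate
    c_cand = dense(params["Wc"], params["bc"], z, math.tanh)  # candidate layer
    o = dense(params["Wo"], params["bo"], z, sigmoid)         # output gate

    # Keep part of the old memory and add part of the candidate values.
    c_t = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, c_cand)]
    # The hidden state is a gated, squashed view of the new memory state.
    h_t = [ok * math.tanh(ck) for ok, ck in zip(o, c_t)]
    return h_t, c_t


# Run a short sequence of three inputs through a cell with 2 hidden units.
params = init_params(input_size=3, hidden_size=2)
h, c = [0.0, 0.0], [0.0, 0.0]
for x_t in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]):
    h, c = lstm_step(x_t, h, c, params)
print("H_t:", h)
print("C_t:", c)
```

Each gate is a sigmoid layer, so its outputs lie between 0 and 1 and act as per-element "how much to let through" factors, while the tanh candidate layer proposes new values for the memory state. Real implementations typically vectorize these operations with a numerical library; the list-based version here only aims to keep every step visible.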