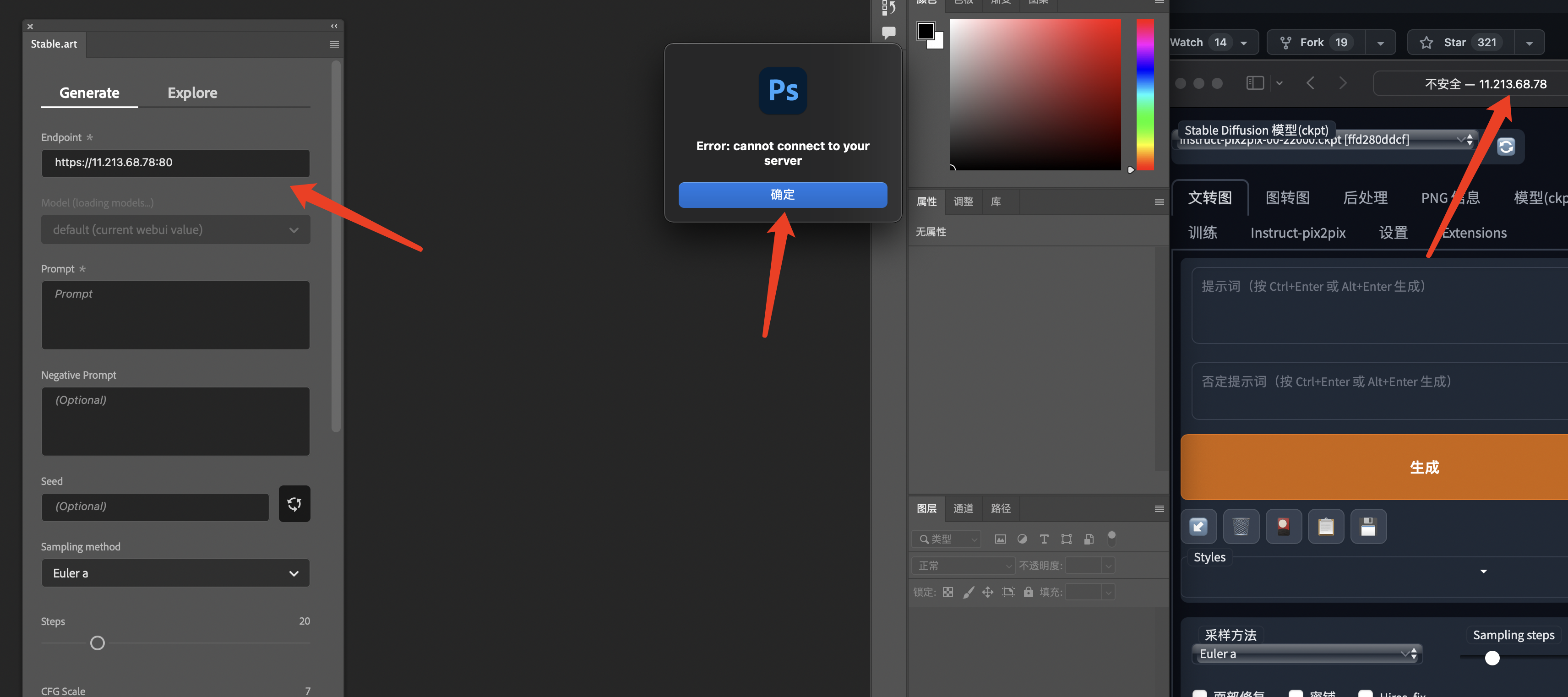

The error happens when trying to inpaint. The render resolution was 400x600 px with a batch size of 1. The plugin's VRAM usage spikes and then it throws this error. The VRAM stays allocated afterwards, and I have to close Stable Diffusion to free it.

The full error output is as follows:

ERROR: Exception in ASGI application

Traceback (most recent call last):

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\streams\memory.py", line 94, in receive

return self.receive_nowait()

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\streams\memory.py", line 89, in receive_nowait

raise WouldBlock

anyio.WouldBlock

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 77, in call_next

message = await recv_stream.receive()

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\streams\memory.py", line 114, in receive

raise EndOfStream

anyio.EndOfStream

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\uvicorn\protocols\http\h11_impl.py", line 407, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\uvicorn\middleware\proxy_headers.py", line 78, in __call__

return await self.app(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\fastapi\applications.py", line 270, in __call__

await super().__call__(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\applications.py", line 124, in __call__

await self.middleware_stack(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\errors.py", line 184, in __call__

raise exc

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\errors.py", line 162, in __call__

await self.app(scope, receive, _send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 106, in __call__

response = await self.dispatch_func(request, call_next)

File "C:\ai\stable-diffusion-webui\extensions\auto-sd-paint-ext\backend\app.py", line 383, in app_encryption_middleware

res: StreamingResponse = await call_next(req)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 80, in call_next

raise app_exc

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 69, in coro

await self.app(scope, receive_or_disconnect, send_no_error)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 106, in __call__

response = await self.dispatch_func(request, call_next)

File "C:\ai\stable-diffusion-webui\modules\api\api.py", line 80, in log_and_time

res: Response = await call_next(req)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 80, in call_next

raise app_exc

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\base.py", line 69, in coro

await self.app(scope, receive_or_disconnect, send_no_error)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\gzip.py", line 26, in __call__

await self.app(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\exceptions.py", line 79, in __call__

raise exc

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\middleware\exceptions.py", line 68, in __call__

await self.app(scope, receive, sender)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\fastapi\middleware\asyncexitstack.py", line 21, in __call__

raise e

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\fastapi\middleware\asyncexitstack.py", line 18, in __call__

await self.app(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\routing.py", line 706, in __call__

await route.handle(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\routing.py", line 276, in handle

await self.app(scope, receive, send)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\routing.py", line 66, in app

response = await func(request)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\fastapi\routing.py", line 235, in app

raw_response = await run_endpoint_function(

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\fastapi\routing.py", line 163, in run_endpoint_function

return await run_in_threadpool(dependant.call, **values)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\starlette\concurrency.py", line 41, in run_in_threadpool

return await anyio.to_thread.run_sync(func, *args)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\to_thread.py", line 31, in run_sync

return await get_asynclib().run_sync_in_worker_thread(

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 937, in run_sync_in_worker_thread

return await future

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\anyio\_backends\_asyncio.py", line 867, in run

result = context.run(func, *args)

File "C:\ai\stable-diffusion-webui\modules\api\api.py", line 230, in img2imgapi

processed = process_images(p)

File "C:\ai\stable-diffusion-webui\modules\processing.py", line 476, in process_images

res = process_images_inner(p)

File "C:\ai\stable-diffusion-webui\modules\processing.py", line 569, in process_images_inner

p.init(p.all_prompts, p.all_seeds, p.all_subseeds)

File "C:\ai\stable-diffusion-webui\modules\processing.py", line 993, in init

self.init_latent = self.sd_model.get_first_stage_encoding(self.sd_model.encode_first_stage(image))

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\autograd\grad_mode.py", line 27, in decorate_context

return func(*args, **kwargs)

File "C:\ai\stable-diffusion-webui\repositories\stable-diffusion-stability-ai\ldm\models\diffusion\ddpm.py", line 830, in encode_first_stage

return self.first_stage_model.encode(x)

File "C:\ai\stable-diffusion-webui\repositories\stable-diffusion-stability-ai\ldm\models\autoencoder.py", line 83, in encode

h = self.encoder(x)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\nn\modules\module.py", line 1130, in _call_impl

return forward_call(*input, **kwargs)

File "C:\ai\stable-diffusion-webui\repositories\stable-diffusion-stability-ai\ldm\modules\diffusionmodules\model.py", line 526, in forward

h = self.down[i_level].block[i_block](hs[-1], temb)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\nn\modules\module.py", line 1130, in _call_impl

return forward_call(*input, **kwargs)

File "C:\ai\stable-diffusion-webui\repositories\stable-diffusion-stability-ai\ldm\modules\diffusionmodules\model.py", line 131, in forward

h = self.norm1(h)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\nn\modules\module.py", line 1130, in _call_impl

return forward_call(*input, **kwargs)

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\nn\modules\normalization.py", line 272, in forward

return F.group_norm(

File "C:\ai\stable-diffusion-webui\venv\lib\site-packages\torch\nn\functional.py", line 2516, in group_norm

return torch.group_norm(input, num_groups, weight, bias, eps, torch.backends.cudnn.enabled)

RuntimeError: CUDA out of memory. Tried to allocate 14.41 GiB (GPU 0; 24.00 GiB total capacity; 9.57 GiB already allocated; 11.88 GiB free; 9.58 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF
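
For anyone triaging this, the numbers in that last line can be checked with a few lines of Python. This is only a rough sketch: `OOM_MESSAGE` is the final traceback line trimmed to the part that carries figures, and `parse_oom` is a hypothetical helper, not something from the webui or PyTorch. It compares the requested allocation with the free memory and looks at the gap between reserved and allocated memory, since the message only suggests `max_split_size_mb` when reserved is much larger than allocated.

```python
import re

# Final line of the traceback, trimmed to the part that carries numbers.
OOM_MESSAGE = (
    "CUDA out of memory. Tried to allocate 14.41 GiB "
    "(GPU 0; 24.00 GiB total capacity; 9.57 GiB already allocated; "
    "11.88 GiB free; 9.58 GiB reserved in total by PyTorch)"
)


def parse_oom(message):
    """Extract the GiB figures from an OOM message of this shape (hypothetical helper)."""
    patterns = {
        "requested": r"Tried to allocate ([\d.]+) GiB",
        "total": r"([\d.]+) GiB total capacity",
        "allocated": r"([\d.]+) GiB already allocated",
        "free": r"([\d.]+) GiB free",
        "reserved": r"([\d.]+) GiB reserved",
    }
    return {
        name: float(re.search(pattern, message).group(1))
        for name, pattern in patterns.items()
    }


stats = parse_oom(OOM_MESSAGE)

# How much more memory the single allocation needed than was free.
shortfall = stats["requested"] - stats["free"]

# Memory held by PyTorch's caching allocator but not in use by tensors.
# A large value here would point at fragmentation; a small one would not.
slack = stats["reserved"] - stats["allocated"]

print(f"requested {stats['requested']:.2f} GiB, free {stats['free']:.2f} GiB")
print(f"shortfall: {shortfall:.2f} GiB")
print(f"reserved minus allocated: {slack:.2f} GiB")
```

Read this way, the single 14.41 GiB request exceeds the 11.88 GiB that was free, while reserved and allocated memory are nearly equal, so the fragmentation hint in the message does not obviously apply. A request that large also seems out of proportion for a 400x600 px image, which may mean the image reaching the encoder was bigger than expected, though the traceback alone does not confirm that.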