kNN-box is an open-source toolkit for building kNN-MT models. We took inspiration from the code of kNN-LM and adaptive kNN-MT and developed this more extensible toolkit on top of fairseq. With kNN-box, users can easily implement different kNN-MT baseline models and develop new ones.

- 🎯 easy-to-use: a few lines of code to deploy a kNN-MT model

- 🔭 research-oriented: provide implementations of various papers

- 🏗️ extensible: easy to develop new kNN-MT models with our toolkit

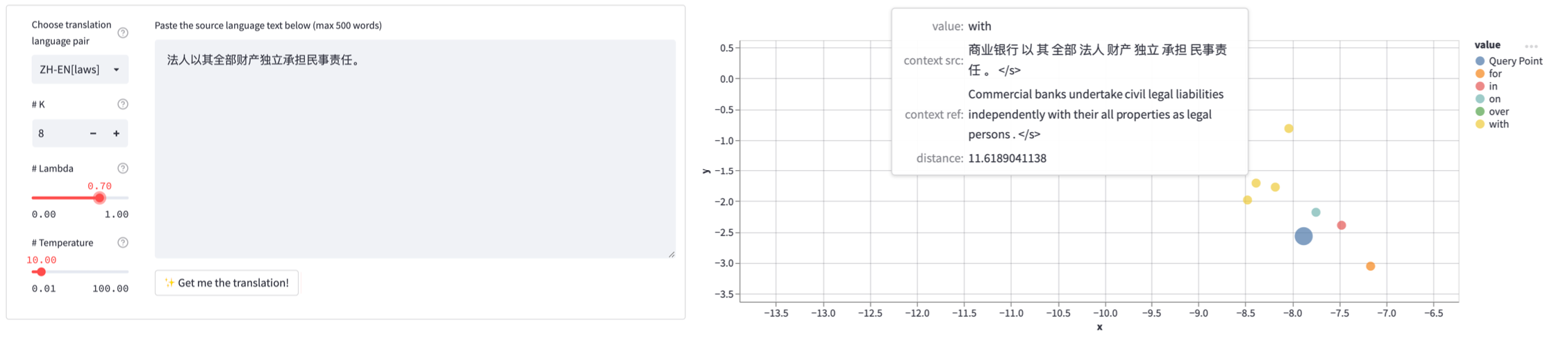

- 📊 visualized: the whole translation process of the kNN-MT can be visualized

- python >= 3.7

- pytorch >= 1.10.0

- faiss-gpu >= 1.7.3

- sacremoses == 0.0.41

- sacrebleu == 1.5.1

- fastBPE == 0.1.0

- streamlit >= 1.13.0

- scikit-learn >= 1.0.2

- seaborn >= 0.12.1

You can install this toolkit by

git clone git@github.com:NJUNLP/knn-box.git

cd knn-box

pip install --editable ./

Note: Installing faiss with pip is not recommended. For stability, we recommend installing faiss with conda.

CPU version only:

conda install faiss-cpu -c pytorch

GPU version:

conda install faiss-gpu -c pytorch # For CUDA

Running a kNN-MT model takes two steps: building the datastore and translating with the datastore. In this toolkit, we unify the different kNN-MT variants into a single framework, even though they manipulate the datastore in different ways. Specifically, the framework consists of three modules (base classes):

- datastore: save translation knowledge as key-value pairs

- retriever: retrieve useful translation knowledge from the datastore

- combiner: produce final prediction based on retrieval results and NMT model
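As a rough illustration of how the three modules fit together, here is a minimal pure-Python sketch. The class and method names (`Datastore.add`, `Retriever.retrieve`, `Combiner.combine`) are hypothetical and are not the kNN-box API; the toolkit itself builds on fairseq and faiss, whereas this sketch uses brute-force search over toy vectors. The combiner assumes the vanilla kNN-MT interpolation of Khandelwal et al. (2021).

```python
import math
from collections import defaultdict


class Datastore:
    """Holds (key, value) pairs: key = context vector, value = target token."""

    def __init__(self):
        self.keys = []
        self.values = []

    def add(self, key, value):
        self.keys.append(list(key))
        self.values.append(value)


class Retriever:
    """Brute-force search for the k entries closest to a query (squared L2)."""

    def __init__(self, datastore, k):
        self.datastore = datastore
        self.k = k

    def retrieve(self, query):
        scored = [
            (sum((q - x) ** 2 for q, x in zip(query, key)), value)
            for key, value in zip(self.datastore.keys, self.datastore.values)
        ]
        scored.sort(key=lambda pair: pair[0])
        return scored[: self.k]  # list of (distance, token)


class Combiner:
    """Interpolates the kNN distribution with the NMT distribution."""

    def __init__(self, lambda_, temperature):
        self.lambda_ = lambda_
        self.temperature = temperature

    def combine(self, neighbours, nmt_probs):
        # Softmax over negative distances; subtracting the minimum distance
        # keeps the exponentials numerically stable.
        d_min = min(d for d, _ in neighbours)
        weights = [math.exp(-(d - d_min) / self.temperature) for d, _ in neighbours]
        total = sum(weights)
        knn_probs = defaultdict(float)
        for weight, (_, token) in zip(weights, neighbours):
            knn_probs[token] += weight / total
        vocab = set(nmt_probs) | set(knn_probs)
        return {
            tok: self.lambda_ * knn_probs.get(tok, 0.0)
            + (1.0 - self.lambda_) * nmt_probs.get(tok, 0.0)
            for tok in vocab
        }


# Toy usage: two-dimensional "hidden states" and a two-word vocabulary.
store = Datastore()
store.add([0.0, 1.0], "house")
store.add([0.1, 0.9], "house")
store.add([1.0, 0.0], "dog")

retriever = Retriever(store, k=2)
combiner = Combiner(lambda_=0.7, temperature=10.0)
neighbours = retriever.retrieve([0.0, 1.0])
final_probs = combiner.combine(neighbours, {"house": 0.4, "dog": 0.6})
```

Both retrieved neighbours vote for "house" here, so the combined distribution shifts towards it even though the toy NMT distribution preferred "dog". A new variant would typically swap out one of the three pieces, for example a pruned datastore or a learned combiner.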

Users can easily develop different kNN-MT models by customizing these three modules. The toolkit also provides example implementations of several popular kNN-MT models (listed below) and push-button scripts to run them, so that researchers can conveniently reproduce the reported results:

- Nearest Neighbor Machine Translation (Khandelwal et al., 2021)

- Adaptive Nearest Neighbor Machine Translation (Zheng et al., 2021)

- Learning Kernel-Smoothed Machine Translation with Retrieved Examples (Jiang et al., 2021)

- Efficient Machine Translation Domain Adaptation (PH Martins et al., 2022)

Preparation: download pretrained models and dataset

You can prepare pretrained models and dataset by executing the following command:

cd knnbox-scripts

bash prepare_dataset_and_model.sh

Use bash instead of sh. If you still have problems running the script, you can manually download the WMT19 De-En single model and the multi-domain De-En dataset and put them into the correct directories (refer to the paths in the script).

Run base neural machine translation model (our baseline)

To translate using the base neural model, execute the following commands:

cd knnbox-scripts/base-nmt

bash inference.sh

Run vanilla knn-mt

To translate using knn-mt, execute the following command:

cd knnbox-scripts/vanilla-knn-mt

# step 1. build datastore

bash build_datastore.sh

# step 2. inference

bash inference.sh

Run adaptive knn-mt

To translate using adaptive knn-mt, execute the following command:

cd knnbox-scripts/adaptive-knn-mt

# step 1. build datastore

bash build_datastore.sh

# step 2. train meta-k network

bash train_metak.sh

# step 3. inference

bash inference.sh

Run kernel smoothed knn-mt

To translate using kernel smoothed knn-mt, execute the following command:

cd knnbox-scripts/kernel-smoothed-knn-mt

# step 1. build datastore

bash build_datastore.sh

# step 2. train kster network

bash train_kster.sh

# step 3. inference

bash inference.sh

Run greedy merge knn-mt

Implementation of Efficient Machine Translation Domain Adaptation (PH Martins et al., 2022).

To translate using Greedy Merge knn-mt, execute the following command:

cd knnbox-scripts/greedy-merge-knn-mt

# step 1. build datastore and prune using greedy merge method

bash build_datastore_and_prune.sh

# step 2. inference (you can decide whether to use the cache with --enable-cache)

bash inference.sh

With kNN-box, you can even visualize the whole translation process of your kNN-MT model. You can launch the visualization service by running the following commands. Have fun with it!

cd knnbox-scripts/vanilla-knn-mt-visual

# step 1. build datastore for visualization (save more information for visualization)

bash build_datastore_visual.sh

# step 2. configure the model that you are going to visualize

vim model_configs.yml

# step 3. launch the web page

bash start_app.sh

Here are the results obtained by using the knnbox toolkit to reproduce popular papers.

We use the script mentioned above to download the preprocessed OPUS multi-domain De-En dataset and the pretrained WMT19 De-En winner model. If you are interested in the general-domain dataset used to train the pretrained model, download it from here.

| Domain | IT | Medical | Koran | Law |
|---|---|---|---|---|
| Size | 3602862 | 6903141 | 524374 | 19061382 |
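To get a feel for what these sizes mean in practice, the sketch below estimates the raw storage needed for the datastore keys alone. It assumes that "Size" is the number of key-value entries and that each key is a 1024-dimensional float16 vector; neither the dimension nor the dtype is stated in this README, so treat both as adjustable assumptions. The function name `estimate_key_bytes` is made up for this example.

```python
# Entry counts copied from the datastore size table above.
DATASTORE_SIZES = {
    "IT": 3602862,
    "Medical": 6903141,
    "Koran": 524374,
    "Law": 19061382,
}

# Assumptions, not taken from this README: key dimension and bytes per element.
KEY_DIM = 1024
BYTES_PER_ELEMENT = 2  # float16


def estimate_key_bytes(num_entries, key_dim=KEY_DIM, bytes_per_element=BYTES_PER_ELEMENT):
    """Raw bytes needed to store num_entries dense keys, ignoring index overhead."""
    return num_entries * key_dim * bytes_per_element


for domain, size in DATASTORE_SIZES.items():
    gib = estimate_key_bytes(size) / 2**30
    print(f"{domain:8s} {size:>10d} entries  ~{gib:.1f} GiB of keys")
```

The estimate ignores the values and any faiss index structures, so it is only a rough guide to the uncompressed key storage, not to the on-disk size of a built datastore.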

| model \ domain | IT | Medical | Koran | Law | General Domain (Avg) |
|---|---|---|---|---|---|
| Base NMT | 38.35 | 40.06 | 16.26 | 45.48 | 37.16 |
| vanilla kNN-MT | 45.88 | 54.13 | 20.51 | 61.09 | 11.98 |
| adaptive kNN-MT | 47.41 | 56.15 | 20.20 | 63.01 | 32.70 |
| kernel smoothed kNN-MT | 47.81 | 56.67 | 20.16 | 63.27 | 32.60 |

- Hyper-parameters of vanilla kNN-MT

  We follow the configuration of adaptive kNN-MT.

  | Domain | IT | Medical | Koran | Law |
  |---|---|---|---|---|
  | K | 8 | 4 | 16 | 4 |
  | Temperature | 10 | 10 | 100 | 10 |
  | Lambda | 0.7 | 0.8 | 0.8 | 0.8 |

- Hyper-parameters of adaptive kNN-MT

  For each domain, we choose the max-k that obtained the highest BLEU in adaptive kNN-MT.

  | Domain | IT | Medical | Koran | Law |
  |---|---|---|---|---|
  | max-k | 8 | 16 | 16 | 8 |

  Although temperature is trainable, we follow the paper and fix temperature to 10.

- Hyper-parameters of kernel smoothed kNN-MT

  For all domains, we use a fixed k of 16.
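For readers unfamiliar with the roles of K, Temperature and Lambda in vanilla kNN-MT, the formulation of Khandelwal et al. (2021) can be summarized as follows. The notation is chosen for this note and is not taken from the toolkit: $f(x, y_{<t})$ is the decoder representation used as the query, $\mathcal{N}$ is the set of $K$ retrieved key-value pairs $(k_i, v_i)$, $d(\cdot,\cdot)$ is the retrieval distance, $T$ is the temperature and $\lambda$ is the interpolation weight.

$$
p_{\mathrm{kNN}}(y_t \mid x, y_{<t}) \propto \sum_{(k_i, v_i) \in \mathcal{N}} \mathbb{1}[y_t = v_i] \, \exp\!\left(\frac{-d\big(k_i, f(x, y_{<t})\big)}{T}\right)
$$

$$
p(y_t \mid x, y_{<t}) = \lambda \, p_{\mathrm{kNN}}(y_t \mid x, y_{<t}) + (1 - \lambda) \, p_{\mathrm{NMT}}(y_t \mid x, y_{<t})
$$

A larger $T$ flattens the weights over the $K$ neighbours, and a larger $\lambda$ places more trust in the retrieved neighbours relative to the base NMT model.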

Qianfeng Zhao: [email protected] Wenhao Zhu: [email protected]