- Perceptual loss of images generated by an AutoEncoder

- Encoded images into latent-space embeddings using an AutoEncoder pre-trained on ImageNet.

- Computed the latent-vector difference between two images with different conditions (such as dry vs. wet, or transparency), added it to the latent vector of an input image, and decoded the result to generate a new image.

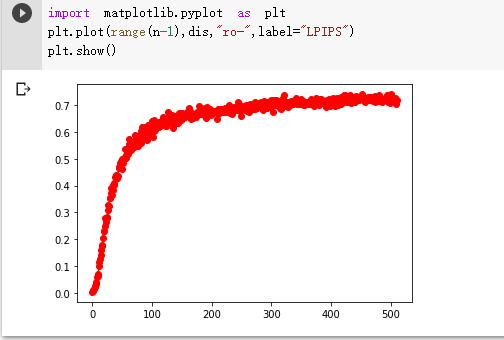

- Calculated the LPIPS (Learned Perceptual Image Patch Similarity) distance between images decoded from latent vectors with different Gaussian noise added, and compared the results with human evaluation.

- Framework & Language: PyTorch, Python
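The noise experiment described above can be sketched roughly as follows. This is a minimal illustration, not the original code: `noise_sweep`, `toy_decode`, and `mse_distance` are hypothetical names, the decoder is a toy stand-in for the real AutoEncoder, and a plain mean-squared distance is swapped in for LPIPS so the sketch runs without downloading weights. It also assumes that "different Gaussian noise" means noise of different standard deviations.

```python
import torch


def noise_sweep(decode, z, sigmas, distance, seed=0):
    """Decode z + sigma * eps for each sigma and score it against the clean decode."""
    gen = torch.Generator().manual_seed(seed)
    eps = torch.randn(z.shape, generator=gen)  # one fixed noise direction, rescaled per sigma
    reference = decode(z)
    return [float(distance(reference, decode(z + s * eps))) for s in sigmas]


def toy_decode(z):
    # Hypothetical stand-in decoder: maps a 48-dim latent to a 3x4x4 "image" in [-1, 1].
    return torch.tanh(z).view(-1, 3, 4, 4)


def mse_distance(a, b):
    # Stand-in metric (assumption). With the `lpips` package installed, one could
    # pass `lpips.LPIPS(net="alex")` instead; it expects (N, 3, H, W) images in [-1, 1].
    return torch.mean((a - b) ** 2)


torch.manual_seed(0)
z = torch.randn(1, 48)
sigmas = [0.0, 0.1, 0.5, 1.0]
scores = noise_sweep(toy_decode, z, sigmas, mse_distance)
for sigma, score in zip(sigmas, scores):
    print(f"sigma={sigma:.1f}  distance={score:.4f}")
```

The per-noise-level scores could then be set side by side with human similarity ratings for the same image pairs.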

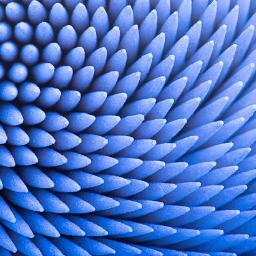

dry wet

By taking the difference between latent vectors encoded by the AutoEncoder, we could apply a condition to other images.
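A minimal sketch of this latent arithmetic is shown below. `TinyAutoEncoder` is a hypothetical, untrained stand-in for the pre-trained AutoEncoder, and `transfer_condition` and its `strength` parameter are illustrative names, not part of the original project. The random tensors only stand in for real dry and wet photographs.

```python
import torch
from torch import nn


class TinyAutoEncoder(nn.Module):
    """Hypothetical stand-in for the pre-trained AutoEncoder (not the original model)."""

    def __init__(self, image_shape=(3, 8, 8), latent_dim=16):
        super().__init__()
        self.image_shape = image_shape
        image_dim = image_shape[0] * image_shape[1] * image_shape[2]
        self.encoder = nn.Linear(image_dim, latent_dim)
        self.decoder = nn.Linear(latent_dim, image_dim)

    def encode(self, x):
        return self.encoder(x.flatten(start_dim=1))

    def decode(self, z):
        return self.decoder(z).view(-1, *self.image_shape)


@torch.no_grad()
def transfer_condition(model, source, target, image, strength=1.0):
    """Shift `image` in latent space along the source -> target direction."""
    direction = model.encode(target) - model.encode(source)
    z = model.encode(image) + strength * direction
    return model.decode(z), direction


torch.manual_seed(0)
model = TinyAutoEncoder().eval()
dry, wet, new_image = (torch.rand(1, 3, 8, 8) for _ in range(3))

# Apply the "wet" condition, then the reverse by negating the strength (assumed scale factor).
wet_version, direction = transfer_condition(model, dry, wet, new_image, strength=1.0)
dry_version, _ = transfer_condition(model, dry, wet, new_image, strength=-1.0)
print(wet_version.shape, direction.shape)
```

In this sketch, varying `strength` changes how strongly the condition is applied, and a negative value moves the image in the opposite direction.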

- Below are the generated images with the wet condition applied.

dry wet

dry wet

- With different parameters

- Reverse condition

- Currently, I am studying the LPIPS distance (a perceptual metric of image similarity) of the generated images.

- Fancy generated image