airlab-unibas / airlab Goto Github PK

View Code? Open in Web Editor NEWImage registration laboratory for 2D and 3D image data

License: Apache License 2.0

Image registration laboratory for 2D and 3D image data

License: Apache License 2.0

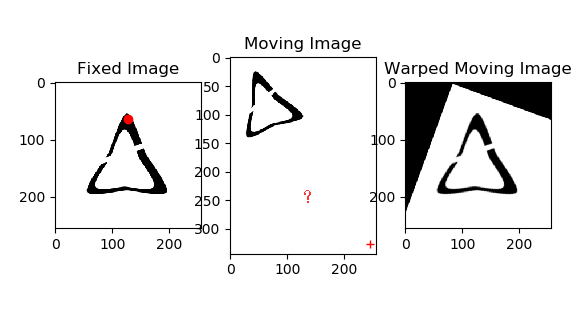

Im trying to get a point warped in moving image. something goes wrong. someone give me a hand? thx!

class SimilarityTransformation(RigidTransformation):

......

def _get_transformation_matrix(self):

self._compute_transformation()

return self._compute_transformation_matrix()

in file 'affine_registration_2d.py' i added:

matT = transformation._get_transformation_matrix().data.cpu().numpy()

matT = np.vstack((matT,[0,0,1]))# make matT 3*3

matT_inv = np.linalg.inv(matT)# inv(matT)

...

#draw point in moving image

px=128

py=64

plt.subplot(131)

plt.imshow(fixed_image.numpy(), cmap='gray')

plt.plot(px, py, 'ro')

plt.title('Fixed Image')

plt.subplot(132)

plt.imshow(moving_image.numpy(), cmap='gray')

pxM=(px/256*matT_inv[0][0]+py/256*matT_inv[0][1]+matT_inv[0][2])*256

pyM=(px/256*matT_inv[1][0]+py/256*matT_inv[1][1]+matT_inv[1][2])*256

plt.plot(pxM,pyM, 'r+')

plt.title('Moving Image')

I have been following the example code provided for demon registration and running into issues for the GPU usage.

Here, my device is only set to cuda:0.

print(device)

cuda:0

I am getting error in

registration.start()

~/.local/lib/python3.8/site-packages/airlab/registration/registration.py in start(self, EarlyStopping, StopPatience)

138 if self._verbose:

139 print(str(iter_index) + " ", end='', flush=True)

--> 140 loss = self._optimizer.step(self._closure)

141 if EarlyStopping:

142 if loss < self.loss:

~/.local/lib/python3.8/site-packages/torch/optim/optimizer.py in wrapper(*args, **kwargs)

87 profile_name = "Optimizer.step#{}.step".format(obj.__class__.__name__)

88 with torch.autograd.profiler.record_function(profile_name):

---> 89 return func(*args, **kwargs)

90 return wrapper

91

~/.local/lib/python3.8/site-packages/torch/autograd/grad_mode.py in decorate_context(*args, **kwargs)

25 def decorate_context(*args, **kwargs):

26 with self.__class__():

---> 27 return func(*args, **kwargs)

28 return cast(F, decorate_context)

29

~/.local/lib/python3.8/site-packages/torch/optim/adam.py in step(self, closure)

64 if closure is not None:

65 with torch.enable_grad():

---> 66 loss = closure()

67

68 for group in self.param_groups:

~/.local/lib/python3.8/site-packages/airlab/registration/registration.py in _closure(self)

100 loss_names = []

101 for image_loss in self._image_loss:

--> 102 lossList.append(image_loss(displacement))

103 loss_names.append(image_loss.name)

104

~/.local/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

887 result = self._slow_forward(*input, **kwargs)

888 else:

--> 889 result = self.forward(*input, **kwargs)

890 for hook in itertools.chain(

891 _global_forward_hooks.values(),

~/.local/lib/python3.8/site-packages/airlab/loss/pairwise.py in forward(self, displacement)

126

127 # compute displacement field

--> 128 displacement = self._grid + displacement

129

130 # compute current mask

RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cuda:0 and cpu!

May you please help solve this issue?

Thank you.

line 86 of IsotropicTVRegulariser's _regulariser_2d function in parameter.py is missing a :

It currently reads

(parameter[:, 1:, 1:] - parameter[:, -1, 1:]).pow(2)*self._scaling[0]when it should read

(parameter[:, 1:, 1:] - parameter[:, :-1, 1:]).pow(2)*self._scaling[0]The same typo appears in line 94 of _regulariser_3d. Note this is implemented correctly in the displacement regularizer class (e.g. see line 58).

You can check this using the following simple test case and the formula on page 3 from this paper where the answer should be 2 * sqrt(10)

X = np.array([[0, 1, 2], [3, 4, 5]])

T = torch.from_numpy(X).unsqueeze(0).float()

def current_incorrect(T):

dx = (T[:, 1:, 1:] - T[:, -1, 1:]).pow(2)

dy = (T[:, 1:, 1:] - T[:, 1:, :-1]).pow(2)

return torch.sqrt(dx + dy).sum()

def correct_isotropic_TV(T):

dx = (T[:, 1:, 1:] - T[:, :-1, 1:]).pow(2)

dy = (T[:, 1:, 1:] - T[:, 1:, :-1]).pow(2)

return torch.sqrt(dx + dy).sum()np.allclose(current_incorrect(T).data.numpy(), 2*np.sqrt(10))

Falsenp.allclose(correct_isotropic_TV(T).data.numpy(), 2*np.sqrt(10))

TrueI change the MSE loss to the MI loss in the affine_registration_3d.py example and get the error below. Any one know how to fix it.

(simpleitk) iridium-MacBook:airlab iridium$ python /Users/iridium/Desktop/airlab-master/examples/affine_registration_3d.py

tensor(-0.5714) tensor(-0.5714)

0 mi: 1.9073486328125e-06

1 mi: -1.9073486328125e-06

2 mi: -1.9073486328125e-06

3 mi: -0.0

4 mi: 1.9073486328125e-06

5 mi: -1.9073486328125e-06

6 mi: -1.9073486328125e-06

7 mi: -0.0

8 mi: -9.5367431640625e-07

9 mi: -0.0

10 mi: -0.0

11 mi: -1.9073486328125e-06

12 mi: -9.5367431640625e-07

13 mi: -0.0

14 mi: -0.0

15 mi: 9.5367431640625e-07

16 mi: -1.9073486328125e-06

17 mi: -0.0

18 mi: -0.0

19 mi: -1.9073486328125e-06

20 mi: -9.5367431640625e-07

21 mi: -9.5367431640625e-07

22 mi: -1.9073486328125e-06

23 mi: 9.5367431640625e-07

24 mi: -9.5367431640625e-07

25 mi: -9.5367431640625e-07

26 mi: -9.5367431640625e-07

27 mi: -9.5367431640625e-07

28 mi: -9.5367431640625e-07

29 mi: -9.5367431640625e-07

30 mi: -9.5367431640625e-07

31 mi: -0.0

32 mi: -9.5367431640625e-07

33 mi: -3.814697265625e-06

34 mi: -0.0

35 mi: 9.5367431640625e-07

36 mi: -0.0

37 mi: -0.0

38 mi: -9.5367431640625e-07

39 mi: -2.86102294921875e-06

40 mi: 9.5367431640625e-07

41 mi: -9.5367431640625e-07

42 mi: -1.9073486328125e-06

43 mi: -0.0

44 mi: -1.9073486328125e-06

45 mi: -0.0

46 mi: -9.5367431640625e-07

47 mi: -3.814697265625e-06

48 mi: -9.5367431640625e-07

49 mi: -0.0

50 mi: 9.5367431640625e-07

51 mi: -9.5367431640625e-07

52 mi: -9.5367431640625e-07

53 mi: -0.0

54 mi: -0.0

55 mi: -9.5367431640625e-07

56 mi: -0.0

57 mi: -9.5367431640625e-07

58 mi: -0.0

59 mi: -0.0

60 mi: -9.5367431640625e-07

61 mi: -1.9073486328125e-06

62 mi: -2.86102294921875e-06

63 mi: -9.5367431640625e-07

64 mi: -1.9073486328125e-06

65 mi: -0.0

66 mi: -0.0

67 mi: -9.5367431640625e-07

68 mi: -9.5367431640625e-07

69 mi: -0.0

70 mi: -0.0

71 mi: -1.9073486328125e-06

72 mi: -9.5367431640625e-07

73 mi: -9.5367431640625e-07

74 mi: -0.0

75 mi: -1.9073486328125e-06

76 mi: -0.0

77 mi: -9.5367431640625e-07

78 mi: -1.9073486328125e-06

79 mi: -9.5367431640625e-07

80 mi: -0.0

81 mi: -9.5367431640625e-07

82 mi: -0.0

83 mi: 9.5367431640625e-07

84 mi: -2.86102294921875e-06

85 mi: -0.0

86 mi: -1.9073486328125e-06

87 mi: -2.86102294921875e-06

88 mi: -0.0

89 mi: -1.9073486328125e-06

90 mi: -1.9073486328125e-06

91 mi: -1.9073486328125e-06

92 mi: 9.5367431640625e-07

93 mi: -2.86102294921875e-06

94 mi: -1.9073486328125e-06

95 mi: 9.5367431640625e-07

96 mi: -1.9073486328125e-06

97 mi: -0.0

98 mi: -0.0

99 mi: -2.86102294921875e-06

100 mi: -9.5367431640625e-07

101 mi: -0.0

102 mi: -0.0

103 mi: -0.0

104 mi: -9.5367431640625e-07

105 mi: 9.5367431640625e-07

106 mi: 9.5367431640625e-07

107 mi: -0.0

108 mi: -0.0

109 mi: -0.0

110 mi: -0.0

111 mi: -0.0

112 mi: -9.5367431640625e-07

113 mi: 9.5367431640625e-07

114 mi: -9.5367431640625e-07

115 mi: -9.5367431640625e-07

116 mi: -9.5367431640625e-07

117 mi: -0.0

118 mi: -9.5367431640625e-07

119 mi: 1.9073486328125e-06

120 mi: -9.5367431640625e-07

121 mi: 9.5367431640625e-07

122 mi: 9.5367431640625e-07

123 mi: 1.9073486328125e-06

124 mi: -0.0

125 mi: -0.0

126 mi: -0.0

127 mi: -0.0

128 mi: -0.0

129 mi: -9.5367431640625e-07

130 mi: 9.5367431640625e-07

131 mi: -1.9073486328125e-06

132 mi: -9.5367431640625e-07

133 mi: -9.5367431640625e-07

134 mi: -2.86102294921875e-06

135 mi: -0.0

136 mi: 9.5367431640625e-07

137 mi: -9.5367431640625e-07

138 mi: -0.0

139 mi: -9.5367431640625e-07

140 mi: 1.9073486328125e-06

141 mi: 1.9073486328125e-06

142 mi: -1.9073486328125e-06

143 mi: -9.5367431640625e-07

144 mi: 9.5367431640625e-07

145 mi: -0.0

146 mi: -9.5367431640625e-07

147 mi: -0.0

148 mi: -9.5367431640625e-07

149 mi: -1.9073486328125e-06

150 mi: -0.0

151 mi: -0.0

152 mi: -0.0

153 mi: 9.5367431640625e-07

154 mi: -0.0

155 mi: -0.0

156 mi: -9.5367431640625e-07

157 mi: 9.5367431640625e-07

158 mi: -9.5367431640625e-07

159 mi: 9.5367431640625e-07

160 mi: -9.5367431640625e-07

161 Traceback (most recent call last):

File "/Users/iridium/Desktop/airlab-master/examples/affine_registration_3d.py", line 109, in <module>

main()

File "/Users/iridium/Desktop/airlab-master/examples/affine_registration_3d.py", line 71, in main

registration.start()

File "/Users/iridium/anaconda3/envs/simpleitk/lib/python3.6/site-packages/airlab-0.2.1-py3.6.egg/airlab/registration/registration.py", line 140, in start

File "/Users/iridium/anaconda3/envs/simpleitk/lib/python3.6/site-packages/torch/optim/adam.py", line 58, in step

loss = closure()

File "/Users/iridium/anaconda3/envs/simpleitk/lib/python3.6/site-packages/airlab-0.2.1-py3.6.egg/airlab/registration/registration.py", line 102, in _closure

File "/Users/iridium/anaconda3/envs/simpleitk/lib/python3.6/site-packages/torch/nn/modules/module.py", line 493, in __call__

result = self.forward(*input, **kwargs)

File "/Users/iridium/anaconda3/envs/simpleitk/lib/python3.6/site-packages/airlab-0.2.1-py3.6.egg/airlab/loss/pairwise.py", line 381, in forward

RuntimeError: invalid argument 8: lda should be at least max(1, 64), but have 1 at /Users/distiller/project/conda/conda-bld/pytorch_1556653492823/work/aten/src/TH/generic/THBlas.cpp:363Its actually not an issue, rather I have a question.

I'm trying to use BsplineTransformation for Random Elastic Deformation.

For the same, I would like to set custom parameters for the BSpline transformation.

Can someone recomand some way for doing the same?

Thanks.

This step computing the joint distribution in MI pairwise loss:

airlab/airlab/loss/pairwise.py

Line 381 in 80c9d48

The marginal distributions are already normalised by self._normalizer_1d in:

airlab/airlab/loss/pairwise.py

Line 339 in 80c9d48

I might be mistaken. But doesn't the joint distribution no longer need normalisation (self._normalizer_2d) as its the outer product of the normalised marginal distributions?

Thank you very much for developing this code.

I would like to registration of two CT dicom sets (3D).

I need a guide about the data format.

It would be very helpful if you can provide me a sample code to import 3D CT dicom files as a fixed image and a moving image.

Can you be a bit more transparent about how you calculate the mutual information loss? Particularly H. How did you guys calculate the joint histogram?

Was airlab tested with pytorch 1.0.1? I'm getting an error in registration code.

It works with pytorch 0.4.1

registration.start()

Fairlab/registration/registration.py", line 203, in start

regulariser.regularise(self._transformation.parameters())

airlab/regulariser/demons.py", line 80, in regularise

self._regulariser(parameter)

airlab/regulariser/demons.py", line 65, in _regularise_2d

data.data.unsqueeze_(0)

RuntimeError: set_storage_offset is not allowed on Tensor created from .data or .detach()

Thanks

Hi! I'm studying the source code. I find the method

def bspline_kernel_3d(sigma=[1, 1, 1], order=2, asTensor=False, dtype=th.float32, device='cpu'):

kernel_ones = th.ones(1, 1, *sigma)

kernel = kernel_ones

padding = np.array(sigma) - 1

for i in range(1, order + 1):

kernel = F.conv3d(kernel, kernel_ones, padding=(padding).tolist())/(sigma[0]*sigma[1]*sigma[2])

if asTensor:

return kernel[0, 0, ...].to(dtype=dtype, device=device)

else:

return kernel[0, 0, ...].numpy()I know we can use the control points to control the displacements. I've also read about other

bspline implementations. The airlab bspline method looks like very simple. So I want to know how does it work, the meaning of sigma and why the for loop can get the bspline kernel.

Thanks! :)

Hello,

Can you provide an example for working with RGB images?

Thanks

When I create a new registration instance, the parameter "verbose" is rejected because it doesn't show up in the class definition. Example:

registration = al.PairwiseRegistration(dtype=fixed_image.dtype,

device=fixed_image.device, verbose=True)

yields

TypeError: __init__() got an unexpected keyword argument 'verbose'

However, I can manually set it after defining the instance with

registration._verbose=verbose

Figured you would want to know about this.

I have marked some landmarks in images and applied affine transformation on images.

And I want to get the corresponding landmark coordinate in transformed images.

What should I do?

I test the AffineTransformation.get_inverse_displacement() but a TypeError is shown.

I was going through the code of BSplineTransformation. I was trying to relate is to this paper as stated in airlab's paper. However, I am not able to relate how these two are similar or perform a similar kind of transformation. Also, I need to transform points to get new points as in SimpleITK transform method. Is it possible in airlab?

Is there a way to recover the transformation matrix at the end of the registration process?

I have two images, each larger than my entire RAM. Is there any possibility to use AIRLAB to register them?

Best,

Nima

Dear professor,Thank you for your contribution about image registration. I face a problem when i do some experiments on medical image via examples/affine_registration_2d.py.

Left is fixed CT image, middle is moving fixed image and right is registration result. It's perfect to apply to png format from above figure. But it seems doesn't work for dicom format(pydicom read manner via python):

I want to compute where the point (x,y) in the image is mapped to after applying the transformation. How can this be done in airlab?

Hi!

I am having an issue applying the displacements of an affine transformation in an image which has not a square size e.g. [233, 197]. What I noticed is that the result looks scaled compared to what I was expecting. I am generating the transformation using an image of the same size.

Could this be a bug or am I not using the library the way it is meant to be used?

Thanks,

Vasiliki

my code:

displacement = transformation.get_displacement()

warped_image = al.transformation.utils.warp_image(moving_image, displacement)

polybox = [[654, 260], [654, 290], [700, 290], [700, 260]]

warped_polybox = al.Points.transform(np.array(polybox).reshape([-1, 2]), displacement)

displacement = al.create_displacement_image_from_image(displacement, moving_image)

error:

Traceback (most recent call last):

File "/Users/yangxue/Desktop/yangxue/code/airlab/examples/demo.py", line 151, in <module>

main()

File "/Users/yangxue/Desktop/yangxue/code/airlab/examples/demo.py", line 99, in main

warped_polybox = al.Points.transform(np.array(polybox).reshape([-1, 2]), displacement)

File "/Users/yangxue/Desktop/yangxue/code/airlab/airlab/utils/points.py", line 105, in transform

raise Exception("Datatype of displacement field not supported.")

Exception: Datatype of displacement field not supported.

So I used the wrong displacement. How do I get the correct displacement? Can you give an example?

Line 317 in 1b92e99

Hi,

I've discovered that the registration doesn't work if the images are preliminarily normalized by mean-std. MSE loss immediately falls to nan, NCC - to 0, and they do not ever change.

I'm not sure how you see the intended use of the library, but I'd say that working with mean-std normalized images is quite common.

To reproduce, in https://github.com/airlab-unibas/airlab/blob/master/examples/affine_registration_3d.py change https://github.com/airlab-unibas/airlab/blob/master/examples/affine_registration_3d.py#L41-L43 to

fixed_image = th.zeros(64, 64, 64).to(device=device)

fixed_image[16:32, 16:32, 16:32] = 1.0

fixed_image = fixed_image - fixed_image.mean()

fixed_image = fixed_image / fixed_image.std()

fixed_image = al.Image(fixed_image, [64, 64, 64], [1, 1, 1], [0, 0, 0])

and https://github.com/airlab-unibas/airlab/blob/master/examples/affine_registration_3d.py#L45-L48 to

moving_image = th.zeros(64, 64, 64).to(device=device)

moving_image[16 - object_shift:32 - object_shift, 16 - object_shift:32 - object_shift,

16 - object_shift:32 - object_shift] = 1.0

moving_image = moving_image - moving_image.mean()

moving_image = moving_image / moving_image.std()

moving_image = al.Image(moving_image, [64, 64, 64], [1, 1, 1], [0, 0, 0])

Is there a way to calculate the determinant of the Jacobian to see whether the used B-spline transformation is volume preserving? What alternative methods can I use to ensure that there is no shrinkage?

Suppose someone has a bunch of x-rays taken at the dental office. Each tooth appears in 2-3 x-rays. What is a good method to register each pair of x-ray images?

Hi guys, at least in python 3.7 and torch 1.1.0, EarlyStopping causes the registration.start() of a PairwiseTransformation to break, with the error:

~\AppData\Local\Continuum\anaconda3\lib\site-packages\airlab-0.2.1-py3.7.egg\airlab\registration\registration.py in start(self, EarlyStopping, StopPatience)

143 n = 0

144 self.loss=loss

--> 145 best=deepcopy(self._transformation)

146 else:

147 n += 1

~\AppData\Local\Continuum\anaconda3\lib\copy.py in deepcopy(x, memo, _nil)

178 y = x

179 else:

--> 180 y = _reconstruct(x, memo, *rv)

181

182 # If is its own copy, don't memoize.

~\AppData\Local\Continuum\anaconda3\lib\copy.py in _reconstruct(x, memo, func, args, state, listiter, dictiter, deepcopy)

278 if state is not None:

279 if deep:

--> 280 state = deepcopy(state, memo)

281 if hasattr(y, '__setstate__'):

282 y.__setstate__(state)

~\AppData\Local\Continuum\anaconda3\lib\copy.py in deepcopy(x, memo, _nil)

148 copier = _deepcopy_dispatch.get(cls)

149 if copier:

--> 150 y = copier(x, memo)

151 else:

152 try:

~\AppData\Local\Continuum\anaconda3\lib\copy.py in _deepcopy_dict(x, memo, deepcopy)

238 memo[id(x)] = y

239 for key, value in x.items():

--> 240 y[deepcopy(key, memo)] = deepcopy(value, memo)

241 return y

242 d[dict] = _deepcopy_dict

~\AppData\Local\Continuum\anaconda3\lib\copy.py in deepcopy(x, memo, _nil)

159 copier = getattr(x, "__deepcopy__", None)

160 if copier:

--> 161 y = copier(memo)

162 else:

163 reductor = dispatch_table.get(cls)

~\AppData\Local\Continuum\anaconda3\lib\site-packages\torch\tensor.py in __deepcopy__(self, memo)

21 def __deepcopy__(self, memo):

22 if not self.is_leaf:

---> 23 raise RuntimeError("Only Tensors created explicitly by the user "

24 "(graph leaves) support the deepcopy protocol at the moment")

25 if id(self) in memo:

RuntimeError: Only Tensors created explicitly by the user (graph leaves) support the deepcopy protocol at the moment

Makes sense since deepcopy can't copy torch tensors. I think a solution is in the PairwiseRegistration class, by changing the line

best=deepcopy(self._transformation)

to just extract the displacement tensor, and then when early stopping is reached, replacing the displacement tensor in the self._transformation class with best. The problem is that I can extract the tensor out of the PairwiseRegistration class (e.g. by detach()) but I can't for the life of me figure out how to replace it. I'm hoping you might know the trick?

I am trying to do some Rigid Registration on binary images of size 64x64 and I was playing around with different loss functions. But while trying to use both LCC and SSIM loss functions, I got following error:

RuntimeError: Subtraction, the - operator, with a bool tensor is not supported. If you are trying to invert a mask, use the ~ or logical_not() operator instead.

I am not quite sure what this error entails, could anyone assist me here?

Hi,

Thank you so much for sharing such brilliant code!

But I am confused about the implementation of kernel transform. The exact code is shown as below.

`

def _compute_flow_3d(self):

# compute dense displacement

displacement = F.conv_transpose3d(self.trans_parameters, self._kernel,

padding=self._padding, stride=self._stride, groups=3)

# crop displacement

return th.squeeze(displacement[:, :, self._stride[0] + self._crop_start[0]:-self._stride[0] - self._crop_end[0],

self._stride[1] + self._crop_start[1]:-self._stride[1] - self._crop_end[1],

self._stride[2] + self._crop_start[2]:-self._stride[2] - self._crop_end[2]

].transpose_(1,4).transpose_(1,3).transpose_(1,2))

`

I found on the Internet that transposed convolution doesn’t reverse the standard convolution by values, rather by dimensions only.

And I have also read other bspline implementations like https://github.com/C4IR/FAIR.m/blob/master/kernel/transformations/splineTransformation2D.m.

They get spline coefficients and then compute the displacement.

I am wondering if your implementation has some theories behind.

Thank you very much if you could answer my questions!

If you are willing to find the warp/displacement on a grayscale version of the image, but want to apply it to all 3 layers in the color version, then here is a simple hack to warp_image():

def warp_image(image, displacement):

image_size = image.size

grid = compute_grid(image_size[:2], dtype=image.dtype, device=image.device)

out=image_from_numpy(np.empty(image_size),(),(),device=image.device,dtype=image.dtype)

if len(image_size==2):

out.image = transformation.utils.F.grid_sample(image.image, displacement + grid)

else:

for i in range(image_size[-1]):

out.image[...,i] = transformation.utils.F.grid_sample(image.image[...,i], displacement + grid)

return out

Hi,

In the following function, how do you handle the non-square images? I think the magnitude of displacement vectors should be tuned according to the ratio of image length and width.

airlab/airlab/transformation/pairwise.py

Lines 89 to 105 in 051f936

Hi,

When I run the 'kernel_registration_3d.py' example I get the following output:

loading images

initial TRE: 14.044497041673539

preprocessing images

Segmentation fault (core dumped)

Can we use float16 using this framework? Has there been consideration for allowing integration with nvidia apex: https://github.com/NVIDIA/apex

Thanks!

Dear Developer,

Thank you very much for developing this code.

I would like to try a registration of two CTs (3D dicom format).

I need a guide how to handle the data format.

It would be very helpful if you can provide me a sample code to import 3D CT dicom files as a fixed image and a moving image to apply airlab code.

Thank you in advance.

While it is helpful to have the torch optimizer return the loss as it iterates, I think it would be VERY helpful to provide a .loss() function to the registration class, so that the user can print out the current loss value once the registration is finished. Seems like it could be an easy call to the self._optimizer?

A declarative, efficient, and flexible JavaScript library for building user interfaces.

🖖 Vue.js is a progressive, incrementally-adoptable JavaScript framework for building UI on the web.

TypeScript is a superset of JavaScript that compiles to clean JavaScript output.

An Open Source Machine Learning Framework for Everyone

The Web framework for perfectionists with deadlines.

A PHP framework for web artisans

Bring data to life with SVG, Canvas and HTML. 📊📈🎉

JavaScript (JS) is a lightweight interpreted programming language with first-class functions.

Some thing interesting about web. New door for the world.

A server is a program made to process requests and deliver data to clients.

Machine learning is a way of modeling and interpreting data that allows a piece of software to respond intelligently.

Some thing interesting about visualization, use data art

Some thing interesting about game, make everyone happy.

We are working to build community through open source technology. NB: members must have two-factor auth.

Open source projects and samples from Microsoft.

Google ❤️ Open Source for everyone.

Alibaba Open Source for everyone

Data-Driven Documents codes.

China tencent open source team.