Comments (16)

@yhenon Thanks for your project. Great work!

I wonder why SGD doesn't work as the original paper said? Adam works well.

Do you have any ideas?

from pytorch-retinanet.

I have the same issue here.

Looking for coco pre-trained model too.

Thanks.

from pytorch-retinanet.

Hi,

I'm glad to hear you find this repo useful. I haven't shared weights yet as it's not quite finalized. However, I do have weights that I should be able to share later today.

@sunset1995 the regression targets are normalized slightly differently in this repo VS the keras one. This one uses (0.1,0.1,0.2,0.2), whereas the keras one uses (0.2,0.2,0.2,0.2). So the weights for the final layer of the regression branch have to be scaled appropriately.

from pytorch-retinanet.

@yhenon thanks~

I also found keras version regression output is to shift x1, y1, x2, y2 instead of x, y, w, h. The visualization from few samples look reasonable now after this fix.

However, I still more prefer to use your weight to keep code cleaner.

Thanks again~

from pytorch-retinanet.

from pytorch-retinanet.

@yhenon I will open a new branch for it in this weekend.

Also, our team is waiting your pretrained weight :)

from pytorch-retinanet.

from pytorch-retinanet.

The results from few samples look prefect! (better than my keras version, so some fix is needed for my keras conversed model)

btw, it seem that coco_resnet_50_map_0_335.pt is saved from python2

I have to use below trick to load it from python3

from functools import partial

import pickle

pickle.load = partial(pickle.load, encoding="latin1")

pickle.Unpickler = partial(pickle.Unpickler, encoding="latin1")

retinanet = torch.load(parser.model, map_location=lambda storage, loc: storage, pickle_module=pickle)from pytorch-retinanet.

I see this line:

anchors_nms_idx = nms(torch.cat([transformed_anchors, scores], dim=2)[0, :, :], 0.5)

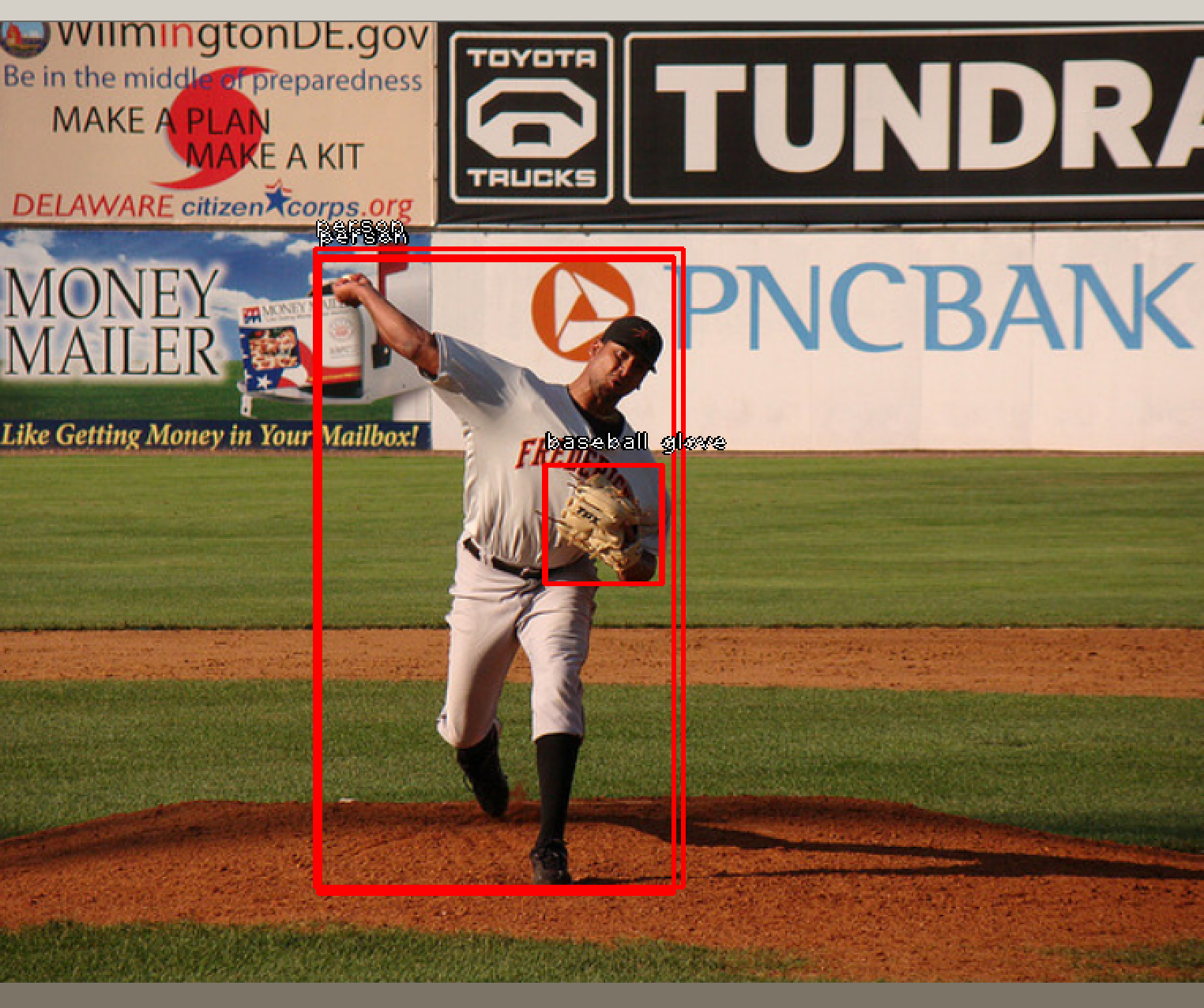

) that should apply nms to prediction detections too, but i get results like this:

.

.

Do you have any idea why this is happening?

from pytorch-retinanet.

I've uploaded a model that has been serialized differently: https://drive.google.com/open?id=1yLmjq3JtXi841yXWBxst0coAgR26MNBS

You should be able to load it with:

retinanet = model.resnet50(num_classes=dataset_train.num_classes(),)

retinanet.load_state_dict(torch.load(PATH))

@sunset1995 This should fix the issues between python2/3

@lvaleriu this may fix the problem you reported, I'd appreciate if you could test it out.

from pytorch-retinanet.

Same thing for me.. I get multiple anchors/detections for the same object

from pytorch-retinanet.

@yhenon hi, yhenon, could you explain a little bit that why you use (0.1,0.1,0.2,0.2) to normalize the regression targets but not use the original regression targets? It's because the regression loss is too small to be fully optimized? But in the original paper, it seems that the author doesn't normalize the regression targets, so I don't know if it's necessary.

Thanks.

from pytorch-retinanet.

What do you mean with original regression targets ? If you compute the COCO regression mean and std values you will more or less get the 0.1 0.1 0.2 0.2 that is used. The original paper doesnt mention regression normalization but it is safe to assume they do. Also, as far as I know, Detectron (which is the closest to an official implementation) uses these regression values as well.

from pytorch-retinanet.

@hgaiser for the original regression targets, i mean the targets in this line. And the purpose of doing regression normalization is to amplify the regression loss and to make it can be fully optimized, do i understand it correctly?

from pytorch-retinanet.

@hgaiser is correct, I'll just add a few things.

- the original source for doing this is the fast-rcnn paper, where they

state:

We normalize the ground-truth regression targets vi to have zero mean and unit variance. All experiments use λ = 1.

- these targets were computed over the whole COCO dataset.

the purpose of doing regression normalization is to amplify the regression

loss and to make it can be fully optimized, do i understand it correctly?

-

partly, it also ensures that the 2 parts of the regression loss is balanced

(the size and position) -

the main reason to do it is that it improves mAP a little.

from pytorch-retinanet.

@yhenon it's clear to me now, thanks for your explanation.

from pytorch-retinanet.

Related Issues (20)

- Loss curve

- How to specify GPU card number for training

- mAP Problem/question

- Question about NMS step in inference phase? HOT 1

- Question about input size HOT 1

- Possible discrepancy in FocalLoss calculation HOT 2

- Yolo format HOT 1

- multi-GPU training

- evaluate question

- Training Issue HOT 3

- Is batch testing possible? HOT 1

- Save module every time?

- Failure to switch to ONNX HOT 1

- IndexError: index -1 is out of bounds for axis 0 with size 0 HOT 3

- Early Stopping

- Alternative dataset

- i can't find the CSVGenerator,could you please tell me where is it? thank you

- Why is AP so low?(No changes were made. )

- Can we enhance retinanet model??

- Is there any training logs?

Recommend Projects

-

React

React

A declarative, efficient, and flexible JavaScript library for building user interfaces.

-

Vue.js

🖖 Vue.js is a progressive, incrementally-adoptable JavaScript framework for building UI on the web.

-

Typescript

Typescript

TypeScript is a superset of JavaScript that compiles to clean JavaScript output.

-

TensorFlow

An Open Source Machine Learning Framework for Everyone

-

Django

The Web framework for perfectionists with deadlines.

-

Laravel

A PHP framework for web artisans

-

D3

Bring data to life with SVG, Canvas and HTML. 📊📈🎉

-

Recommend Topics

-

javascript

JavaScript (JS) is a lightweight interpreted programming language with first-class functions.

-

web

Some thing interesting about web. New door for the world.

-

server

A server is a program made to process requests and deliver data to clients.

-

Machine learning

Machine learning is a way of modeling and interpreting data that allows a piece of software to respond intelligently.

-

Visualization

Some thing interesting about visualization, use data art

-

Game

Some thing interesting about game, make everyone happy.

Recommend Org

-

Facebook

We are working to build community through open source technology. NB: members must have two-factor auth.

-

Microsoft

Open source projects and samples from Microsoft.

-

Google

Google ❤️ Open Source for everyone.

-

Alibaba

Alibaba Open Source for everyone

-

D3

Data-Driven Documents codes.

-

Tencent

China tencent open source team.

from pytorch-retinanet.