Comments (11)

The error message looks like a TensorFlow issue rather than a JAX / Whisper JAX one. Could you first try uninstalling TensorFlow with whichever package manager installed it:

pip uninstall tensorflow

conda remove tensorflow

and then re-running the code?

from whisper-jax.

Hey @BATspock - could you check that JAX is correctly installed? See comment #30 (comment)


Hi @sanchit-gandhi, I do not believe this is a problem with the installations. I executed the script referred to in the comment above and got the following output:

Found 1 JAX devices of type NVIDIA GeForce RTX 3050 Ti Laptop GPU.
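For reference, a device check along the following lines would print a message in the format shown above. This is a minimal sketch, not necessarily the exact script from the linked comment: `describe_jax_devices` is a hypothetical helper name, and the lazy import is only there so that a missing JAX install is reported instead of raising.

```python
def describe_jax_devices() -> str:
    """Return a one-line summary of the devices JAX can see."""
    try:
        import jax  # imported lazily so a missing install is reported, not raised
    except ImportError:
        return "JAX is not installed in this environment."

    num_devices = jax.device_count()            # number of devices JAX can use
    device_type = jax.devices()[0].device_kind  # e.g. "cpu" or a GPU model name
    return f"Found {num_devices} JAX devices of type {device_type}."


print(describe_jax_devices())
```

If the reported type is `cpu` on a machine with a GPU, the CUDA-enabled build of jaxlib is probably not the one being picked up.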

I am trying to run the basic starter code, but I am still getting the same error.

Starter code:

from whisper_jax import FlaxWhisperPipline

# instantiate pipeline
pipeline = FlaxWhisperPipline("openai/whisper-large-v2")

# JIT compile the forward call - slow, but we only do it once
text = pipeline("audio.mp3")

# use the cached function thereafter - super fast!!
text = pipeline("audio.mp3")

# signal completion (the transcription is held in `text`)
print("Done")


Any luck @BATspock? Happy to help make this work here!


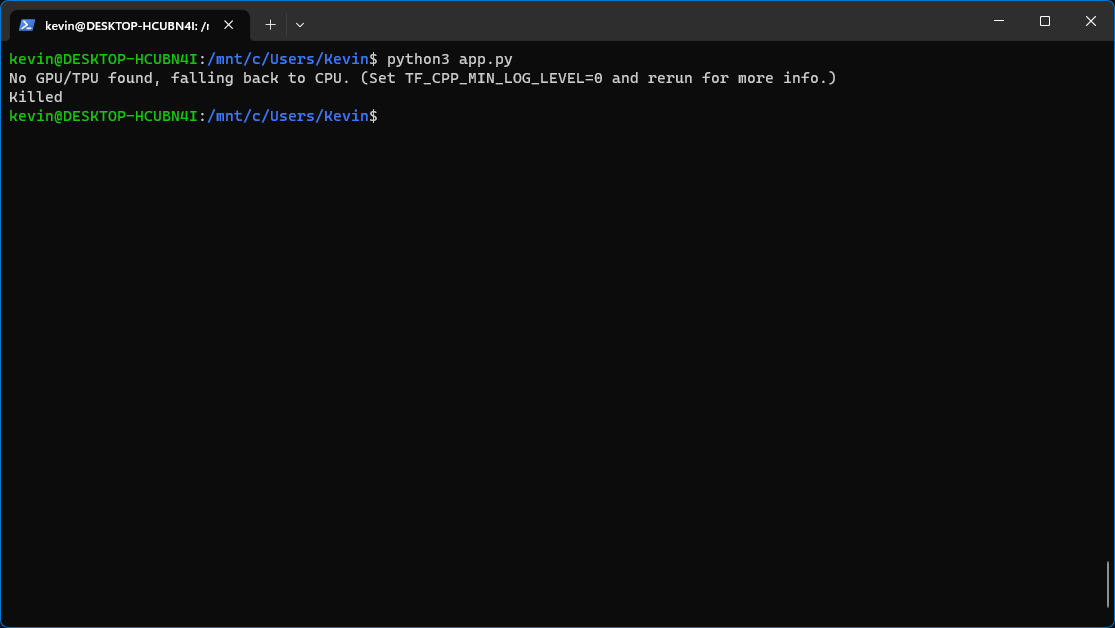

I was facing the same issue even after uninstalling and reinstalling TensorFlow. I tried changing my NVIDIA drivers, but now Whisper JAX does not detect my GPU. I still see the same behavior: the process is killed without any error message.

python whisperJAX.py

No GPU/TPU found, falling back to CPU. (Set TF_CPP_MIN_LOG_LEVEL=0 and rerun for more info.)

Killed
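Since the silent CPU fallback is what precedes the kill here, one option is to stop before the model is loaded whenever no accelerator is visible. A minimal sketch, assuming that aborting is preferable to running on CPU: `require_accelerator` is a hypothetical helper, while `jax.default_backend()` is the JAX call that reports the active platform as a string such as "cpu", "gpu", or "tpu".

```python
def require_accelerator(backend: str) -> str:
    """Raise if JAX fell back to CPU; otherwise return the backend name."""
    if backend.lower() == "cpu":
        raise RuntimeError(
            "JAX is running on CPU, so the model would be loaded into system RAM. "
            "Check the GPU driver and the CUDA-enabled jaxlib install."
        )
    return backend


# Intended use, before constructing the pipeline (requires jax):
#   import jax
#   require_accelerator(jax.default_backend())
```

This turns an unexplained "Killed" into an explicit error that points at the GPU setup.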


Same problem here. 😢


Update. I decided to ask GPT-4 what's going on and it told me that it's because there's insufficient memory. I checked it and it turns out GPT-4 is right! I see the memory fill up aaaaaaaall the way to 16 GB and then the app gets killed.

In your case, the problem to solve is that your GPU is not being detected, because you would not need nearly as much RAM if the 3050 were used. Since JAX cannot use your GPU, it falls back to CPU and tries to load the model into system RAM instead of GPU VRAM, and that RAM is apparently insufficient, as in my case.

I don't have a GPU so I'm now shit out of luck. You'll be in luck if you figure out how to get the GPU working with it! :) But at least now you know the reason why the app keeps on getting killed! :)
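The memory pressure described above can be estimated roughly. The sketch below assumes about 1.55 billion parameters for the large Whisper checkpoints (the figure OpenAI lists for the "large" size) and counts only the weights, so actual peak usage will be higher once activations, JIT compilation, and checkpoint loading are included. `weight_memory_gib` is a hypothetical helper.

```python
# Bytes needed to store one parameter in each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2}


def weight_memory_gib(num_params: float, dtype: str = "float32") -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


LARGE_PARAMS = 1.55e9  # assumption: approximate size of whisper-large-v2

for dtype in ("float32", "bfloat16"):
    print(f"{dtype}: {weight_memory_gib(LARGE_PARAMS, dtype):.1f} GiB")
```

Loading the weights in half precision, or picking a smaller checkpoint, lowers this figure, which may matter on a 16 GB machine with no usable GPU.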



I would still suggest using CUDA 12 or newer!

I wasted a lot of time on this; it comes down to CUDA and JAX incompatibility issues. If you have a fresh instance with only Ubuntu on it, try these steps:

Install CUDA & Drivers

sudo apt-get install nvidia-driver-510-server

sudo reboot

nvidia-smi

Install virtualenv

sudo apt-get update

sudo apt-get upgrade

sudo apt install python3-virtualenv

Restart the instance after this!

Make a Venv & install dependencies

virtualenv -p python3 venv

source venv/bin/activate

pip install --upgrade "jax[cuda11_pip]" -f https://storage.googleapis.com/jax-releases/jax_cuda_releases.html

Then install Whisper JAX. It works!


What exactly do you mean by a fresh instance with only Ubuntu? Are you working with a cloud-spawned image?


Yeah, on cloud, but it gets killed when running larger files. I am also trying out different things. If I find anything, I will surely share it.

One thing I noticed from htop is that the CPU is at 100% even for a single file, while memory usage is 4.7 GB / 8 GB.


Okay, so the issue here is that the "pool" created using multiprocessing is never closed. So every time we run the code in a Flask or Flask + Celery server, the program eventually gets killed:

- New processes are spawned (for example, we kept the max NUM_PROC = 32, so new workers start from 33)

- Old ones are never closed and keep making new memory allocations

I tried pool.close() and pool.terminate() to close the workers, but it did not work; maybe I am missing something.

But yes, this was the issue. I checked the RAM and CPU usage with the tiny model.

Temp Solution

- Comment out the multiprocessing lines!
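As an alternative to removing multiprocessing entirely, the pool lifecycle could be scoped to a single request so that workers never outlive it. The following is a generic sketch of that pattern using only the standard library; it is not the whisper-jax code and has not been verified as a fix for this issue. `measure_chunks` is a hypothetical name, and `len` stands in for the real per-chunk preprocessing function.

```python
import multiprocessing
from typing import List, Optional, Sequence


def measure_chunks(
    chunks: Sequence[bytes],
    num_proc: int = 2,
    start_method: Optional[str] = None,
) -> List[int]:
    """Process chunks in a short-lived worker pool and always release it."""
    # None selects the platform's default start method.
    ctx = multiprocessing.get_context(start_method)
    pool = ctx.Pool(processes=num_proc)
    try:
        # `len` is a placeholder for the real per-chunk work.
        results = pool.map(len, chunks)
        pool.close()      # no more tasks; workers exit once they are idle
    except BaseException:
        pool.terminate()  # stop the workers immediately on failure
        raise
    finally:
        pool.join()       # wait for the worker processes to exit
    return results
```

Calling `join()` after `close()` or `terminate()` is what guarantees the worker processes are gone before the request handler returns. In a long-running server, the call site should sit inside a function or an `if __name__ == "__main__":` guard so that worker start-up does not re-run it.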

