Comments (14)

> Hi! Please see: https://gist.github.com/dvdhfnr/732c26b61a0e63a0abc8a5d769dbebd0

Hi, this link is broken. Could you share a new one?

Thanks.

from midas.

Hi!

Please see: https://gist.github.com/dvdhfnr/732c26b61a0e63a0abc8a5d769dbebd0

from midas.

@dvdhfnr I implemented the loss myself. I found that the range of the predictions is unstable: it can be -3 to 8, -10 to 30, or -200 to 1000 in different training attempts. I wonder if you encountered this problem when you trained the network. Thank you very much.

from midas.

No, I did not encounter this problem. Did you scale/shift the target depths to [0, 10], as described in the paper?

from midas.

@dvdhfnr I train my model on NYUDepthV2, and scale/shift the depth value by

    mask = target > 0
    target[mask] = (target[mask] - target[mask].min()) / (target[mask].max() - target[mask].min()) * 9 + 1
    target[mask] = 10. / target[mask]
    target[~mask] = 0.

How did you scale/shift the depth map?

from midas.

To be more precise:

"For all datasets, we shift and scale the ground-truth inverse depth to the range [0, 10]."

from midas.

I tested the provided `midas_loss`. It also leads to an unstable output range. The output range is influenced by the initial weights.

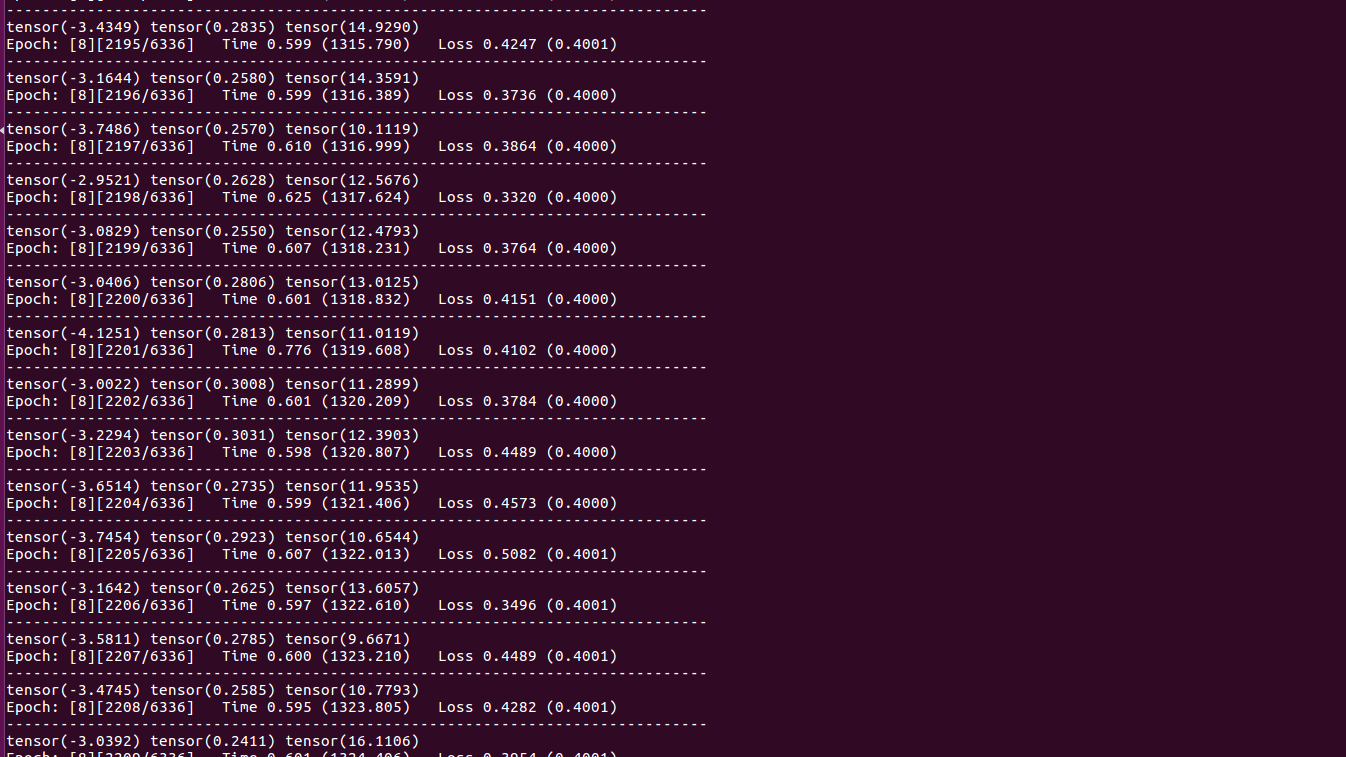

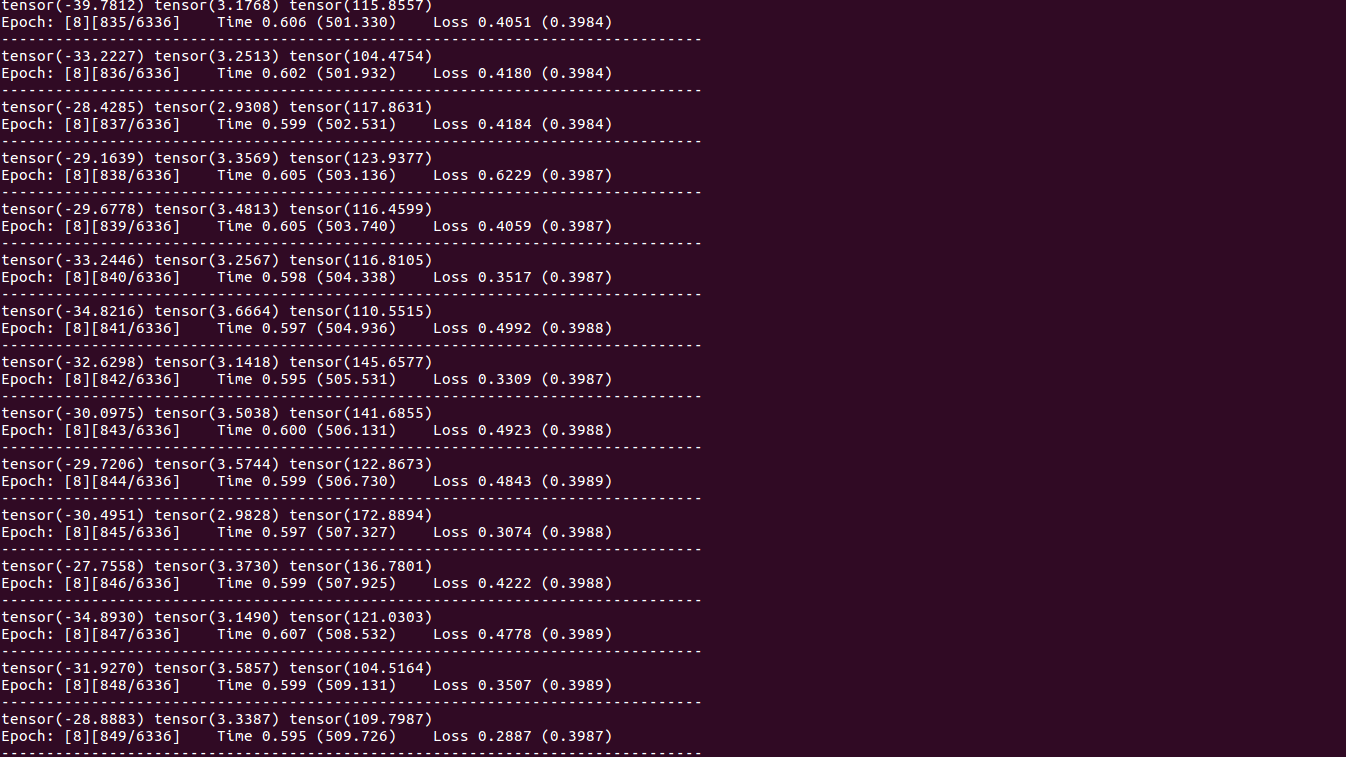

I trained the network with different initial weights, and got the following results, in which the three numbers are the min, mean and max of prediction:

Maybe there should be additional constraints on the prediction to restrict the output range.

Finally, I am looking forward to the scripts that are used to produce the data set.

from midas.

The predictions resemble the target inverse depths up to a scale and shift.

from midas.
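
To make "up to a scale and shift" concrete, here is a minimal NumPy sketch that fits a scale `s` and shift `t` by least squares so that `s * pred + t` matches the target on valid pixels. The function name `align_scale_shift` and the synthetic data are assumptions for illustration only. This is not the code from the gist or from the MiDaS authors.

```python
import numpy as np

def align_scale_shift(pred, target, mask):
    """Fit s, t by least squares so that s * pred + t matches target where mask is True."""
    p = pred[mask].astype(np.float64)
    g = target[mask].astype(np.float64)
    # Design matrix with one column for the scale and one for the shift.
    A = np.stack([p, np.ones_like(p)], axis=1)
    (s, t), *_ = np.linalg.lstsq(A, g, rcond=None)
    return s, t

# Synthetic example: the "prediction" is the target under an arbitrary affine map.
rng = np.random.default_rng(0)
target = rng.uniform(0.0, 1.0, size=(4, 5))   # stand-in for normalized inverse depth
mask = target > 0.1                           # stand-in for the valid-pixel mask
pred = -3.0 + 11.0 * target                   # hypothetical raw output, roughly -3 to 8

s, t = align_scale_shift(pred, target, mask)
aligned = s * pred + t
print(s, t, np.abs(aligned[mask] - target[mask]).max())
```

After alignment, predictions with very different raw ranges can be compared against the same target, which is why the raw range alone says little about prediction quality.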

As @dvdhfnr mentioned: This is by construction, as the value of the loss is independent of the output scale. Thus the ranges are dependent on the initialization. If you need a fixed scale range, you could try to add a (scaled) sigmoid to the output activations. However, this might change training behavior and you likely need a sensible initialization.

from midas.
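
The scaled-sigmoid suggestion above can be sketched as follows. This is a NumPy illustration under assumptions: `scaled_sigmoid` and `max_value` are hypothetical names, and in a real model the same operation would be applied as the final activation inside the training framework. As noted in the comment, it may change training behavior.

```python
import numpy as np

def scaled_sigmoid(x, max_value=1.0):
    """Squash raw outputs into the range [0, max_value].

    Uses the identity sigmoid(x) = 0.5 * (1 + tanh(x / 2)), which avoids
    overflow for large-magnitude inputs.
    """
    x = np.asarray(x, dtype=np.float64)
    return 0.5 * max_value * (1.0 + np.tanh(0.5 * x))

# Raw outputs spanning the kind of range reported earlier in the thread.
raw = np.array([-200.0, -3.0, 0.0, 8.0, 1000.0])
bounded = scaled_sigmoid(raw, max_value=10.0)
print(bounded.min(), bounded.max())
```

Whatever the initialization, the bounded output stays within the chosen range, at the cost of saturating gradients when the raw values are far from zero.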

> To be more precise: "For all datasets, we shift and scale the ground-truth inverse depth to the range [0, 10]."

@dvdhfnr Hi, I found that the normalization range is [0, 1] in the original paper, so which range is correct?

thx

from midas.

For the latest version of the paper we used the range [0, 1] (https://arxiv.org/pdf/1907.01341v3.pdf, Page 7).

#2 (comment) was referring to an earlier version (https://arxiv.org/pdf/1907.01341v1.pdf, Page 6).

from midas.

@dvdhfnr Hi, did you use this loss function for MiDaS v3.0 as well?

from midas.

@dvdhfnr @ranftlr Hi,

I would like to know how you rescaled the ground-truth depth to the range [0, 1].

As I understand it, the following steps are done:

- Get the ground-truth inverse depth

- Normalize it like this: `inverse_depth_normalization = (inverse_depth - inverse_depth.min()) / (inverse_depth.max() - inverse_depth.min())`

Is my understanding right? If not, could you please tell us how you implement "For all datasets, we shift and scale the ground-truth inverse depth to the range [0, 1]."?

Thx.

from midas.
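
The two steps described in the question above can be sketched in NumPy as follows. This only illustrates the commenter's understanding. The authors do not confirm in this thread that it matches their preprocessing. The function name `normalize_inverse_depth`, the `eps` guard, and the convention that non-positive depth marks invalid pixels are all assumptions.

```python
import numpy as np

def normalize_inverse_depth(depth, eps=1e-6):
    """Min-max normalize inverse depth to [0, 1] over valid (depth > 0) pixels.

    Returns the normalized map (invalid pixels set to 0) and the validity mask.
    """
    depth = np.asarray(depth, dtype=np.float64)
    mask = depth > 0
    out = np.zeros_like(depth)
    if not mask.any():
        return out, mask                      # nothing valid to normalize
    inv = 1.0 / depth[mask]                   # step 1: inverse depth
    span = max(inv.max() - inv.min(), eps)    # guard against a constant-depth image
    out[mask] = (inv - inv.min()) / span      # step 2: min-max normalization
    return out, mask

# Toy depth map in meters; zeros mark missing measurements.
depth = np.array([[0.0, 1.0, 2.0],
                  [4.0, 0.0, 10.0]])
normalized, valid = normalize_inverse_depth(depth)
print(normalized)
```

The mask is returned alongside the map because the farthest valid pixel also normalizes to 0, so a loss would need the mask to tell it apart from missing data.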

@Twilight89

Same problem here. I think the author will never reply. Did you reach any conclusion, or did you implement the training process yourself?

from midas.
