Comments (23)

Downloading quantized models from the HF Hub is a feature I plan to add in v0.2.0. I'm currently writing the feature plan for v0.2.0 and v0.3.0; anyone interested can check Projects later.

from autogptq.

Try this:

! BUILD_CUDA_EXT=0 pip install auto-gptq

from autogptq.

@TheBloke That's been my concern from the start. I tried versions of Alpaca, GPT-J, Bloom, OPT, and Pegasus but was not able to load them from Hugging Face.

from autogptq.

Sure. I have used bitsandbytes and accelerate, and have experimented with Alpaca, OPT, and GPT-J using their 8-bit versions.

from autogptq.

Preparing metadata (setup.py) ... done

Requirement already satisfied: accelerate>=0.18.0 in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (0.18.0)

Requirement already satisfied: datasets in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (2.1.0)

Requirement already satisfied: numpy in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (1.23.5)

Requirement already satisfied: rouge in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (1.0.1)

Requirement already satisfied: torch>=1.13.0 in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (2.0.0)

Requirement already satisfied: safetensors in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (0.3.1)

Requirement already satisfied: transformers>=4.26.1 in /opt/conda/lib/python3.10/site-packages (from auto-gptq==0.1.0.dev0) (4.28.1)

Requirement already satisfied: psutil in /opt/conda/lib/python3.10/site-packages (from accelerate>=0.18.0->auto-gptq==0.1.0.dev0) (5.9.4)

Requirement already satisfied: packaging>=20.0 in /opt/conda/lib/python3.10/site-packages (from accelerate>=0.18.0->auto-gptq==0.1.0.dev0) (21.3)

Requirement already satisfied: pyyaml in /opt/conda/lib/python3.10/site-packages (from accelerate>=0.18.0->auto-gptq==0.1.0.dev0) (6.0)

Requirement already satisfied: sympy in /opt/conda/lib/python3.10/site-packages (from torch>=1.13.0->auto-gptq==0.1.0.dev0) (1.11.1)

Requirement already satisfied: jinja2 in /opt/conda/lib/python3.10/site-packages (from torch>=1.13.0->auto-gptq==0.1.0.dev0) (3.1.2)

Requirement already satisfied: networkx in /opt/conda/lib/python3.10/site-packages (from torch>=1.13.0->auto-gptq==0.1.0.dev0) (3.1)

Requirement already satisfied: typing-extensions in /opt/conda/lib/python3.10/site-packages (from torch>=1.13.0->auto-gptq==0.1.0.dev0) (4.5.0)

Requirement already satisfied: filelock in /opt/conda/lib/python3.10/site-packages (from torch>=1.13.0->auto-gptq==0.1.0.dev0) (3.11.0)

Requirement already satisfied: tokenizers!=0.11.3,<0.14,>=0.11.1 in /opt/conda/lib/python3.10/site-packages (from transformers>=4.26.1->auto-gptq==0.1.0.dev0) (0.13.3)

Requirement already satisfied: huggingface-hub<1.0,>=0.11.0 in /opt/conda/lib/python3.10/site-packages (from transformers>=4.26.1->auto-gptq==0.1.0.dev0) (0.13.4)

Requirement already satisfied: regex!=2019.12.17 in /opt/conda/lib/python3.10/site-packages (from transformers>=4.26.1->auto-gptq==0.1.0.dev0) (2023.3.23)

Requirement already satisfied: requests in /opt/conda/lib/python3.10/site-packages (from transformers>=4.26.1->auto-gptq==0.1.0.dev0) (2.28.2)

Requirement already satisfied: tqdm>=4.27 in /opt/conda/lib/python3.10/site-packages (from transformers>=4.26.1->auto-gptq==0.1.0.dev0) (4.64.1)

Requirement already satisfied: fsspec[http]>=2021.05.0 in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (2023.4.0)

Requirement already satisfied: pyarrow>=5.0.0 in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (10.0.1)

Requirement already satisfied: dill in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (0.3.6)

Requirement already satisfied: xxhash in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (3.2.0)

Requirement already satisfied: pandas in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (1.5.3)

Requirement already satisfied: responses<0.19 in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (0.18.0)

Requirement already satisfied: multiprocess in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (0.70.14)

Requirement already satisfied: aiohttp in /opt/conda/lib/python3.10/site-packages (from datasets->auto-gptq==0.1.0.dev0) (3.8.4)

Requirement already satisfied: six in /opt/conda/lib/python3.10/site-packages (from rouge->auto-gptq==0.1.0.dev0) (1.16.0)

Requirement already satisfied: charset-normalizer<4.0,>=2.0 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (2.1.1)

Requirement already satisfied: multidict<7.0,>=4.5 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (6.0.4)

Requirement already satisfied: yarl<2.0,>=1.0 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (1.8.2)

Requirement already satisfied: async-timeout<5.0,>=4.0.0a3 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (4.0.2)

Requirement already satisfied: frozenlist>=1.1.1 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (1.3.3)

Requirement already satisfied: attrs>=17.3.0 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (22.2.0)

Requirement already satisfied: aiosignal>=1.1.2 in /opt/conda/lib/python3.10/site-packages (from aiohttp->datasets->auto-gptq==0.1.0.dev0) (1.3.1)

Requirement already satisfied: pyparsing!=3.0.5,>=2.0.2 in /opt/conda/lib/python3.10/site-packages (from packaging>=20.0->accelerate>=0.18.0->auto-gptq==0.1.0.dev0) (3.0.9)

Requirement already satisfied: certifi>=2017.4.17 in /opt/conda/lib/python3.10/site-packages (from requests->transformers>=4.26.1->auto-gptq==0.1.0.dev0) (2022.12.7)

Requirement already satisfied: idna<4,>=2.5 in /opt/conda/lib/python3.10/site-packages (from requests->transformers>=4.26.1->auto-gptq==0.1.0.dev0) (3.4)

Requirement already satisfied: urllib3<1.27,>=1.21.1 in /opt/conda/lib/python3.10/site-packages (from requests->transformers>=4.26.1->auto-gptq==0.1.0.dev0) (1.26.15)

Requirement already satisfied: MarkupSafe>=2.0 in /opt/conda/lib/python3.10/site-packages (from jinja2->torch>=1.13.0->auto-gptq==0.1.0.dev0) (2.1.2)

Requirement already satisfied: python-dateutil>=2.8.1 in /opt/conda/lib/python3.10/site-packages (from pandas->datasets->auto-gptq==0.1.0.dev0) (2.8.2)

Requirement already satisfied: pytz>=2020.1 in /opt/conda/lib/python3.10/site-packages (from pandas->datasets->auto-gptq==0.1.0.dev0) (2023.3)

Requirement already satisfied: mpmath>=0.19 in /opt/conda/lib/python3.10/site-packages (from sympy->torch>=1.13.0->auto-gptq==0.1.0.dev0) (1.3.0)

Building wheels for collected packages: auto-gptq

Building wheel for auto-gptq (setup.py) ... error

error: subprocess-exited-with-error

× python setup.py bdist_wheel did not run successfully.

│ exit code: 1

╰─> [45 lines of output]

/opt/conda/lib/python3.10/site-packages/setuptools/dist.py:493: UserWarning: Normalizing 'v0.1.0-dev' to '0.1.0.dev0'

warnings.warn(tmpl.format(**locals()))

running bdist_wheel

running build

running build_py

running build_ext

Traceback (most recent call last):

File "<string>", line 2, in <module>

File "<pip-setuptools-caller>", line 34, in <module>

File "/kaggle/working/AutoGPTQ/setup.py", line 49, in <module>

setup(

File "/opt/conda/lib/python3.10/site-packages/setuptools/__init__.py", line 153, in setup

return distutils.core.setup(**attrs)

File "/opt/conda/lib/python3.10/distutils/core.py", line 148, in setup

dist.run_commands()

File "/opt/conda/lib/python3.10/distutils/dist.py", line 966, in run_commands

self.run_command(cmd)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/site-packages/wheel/bdist_wheel.py", line 343, in run

self.run_command("build")

File "/opt/conda/lib/python3.10/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/distutils/command/build.py", line 135, in run

self.run_command(cmd_name)

File "/opt/conda/lib/python3.10/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/site-packages/setuptools/command/build_ext.py", line 79, in run

_build_ext.run(self)

File "/opt/conda/lib/python3.10/site-packages/Cython/Distutils/old_build_ext.py", line 186, in run

_build_ext.build_ext.run(self)

File "/opt/conda/lib/python3.10/distutils/command/build_ext.py", line 340, in run

self.build_extensions()

File "/opt/conda/lib/python3.10/site-packages/torch/utils/cpp_extension.py", line 499, in build_extensions

_check_cuda_version(compiler_name, compiler_version)

File "/opt/conda/lib/python3.10/site-packages/torch/utils/cpp_extension.py", line 387, in _check_cuda_version

raise RuntimeError(CUDA_MISMATCH_MESSAGE.format(cuda_str_version, torch.version.cuda))

RuntimeError:

The detected CUDA version (12.1) mismatches the version that was used to compile

PyTorch (11.3). Please make sure to use the same CUDA versions.

[end of output]

note: This error originates from a subprocess, and is likely not a problem with pip.

ERROR: Failed building wheel for auto-gptq

Running setup.py clean for auto-gptq

Failed to build auto-gptq

Installing collected packages: auto-gptq

Running setup.py install for auto-gptq ... error

error: subprocess-exited-with-error

× Running setup.py install for auto-gptq did not run successfully.

│ exit code: 1

╰─> [90 lines of output]

/opt/conda/lib/python3.10/site-packages/setuptools/dist.py:493: UserWarning: Normalizing 'v0.1.0-dev' to '0.1.0.dev0'

warnings.warn(tmpl.format(**locals()))

running install

/opt/conda/lib/python3.10/site-packages/setuptools/command/install.py:34: SetuptoolsDeprecationWarning: setup.py install is deprecated. Use build and pip and other standards-based tools.

warnings.warn(

running build

running build_py

creating build

creating build/lib.linux-x86_64-3.10

creating build/lib.linux-x86_64-3.10/auto_gptq

copying auto_gptq/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq

creating build/lib.linux-x86_64-3.10/auto_gptq/nn_modules

copying auto_gptq/nn_modules/qlinear.py -> build/lib.linux-x86_64-3.10/auto_gptq/nn_modules

copying auto_gptq/nn_modules/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/nn_modules

copying auto_gptq/nn_modules/qlinear_triton.py -> build/lib.linux-x86_64-3.10/auto_gptq/nn_modules

creating build/lib.linux-x86_64-3.10/auto_gptq/utils

copying auto_gptq/utils/data_utils.py -> build/lib.linux-x86_64-3.10/auto_gptq/utils

copying auto_gptq/utils/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/utils

creating build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

copying auto_gptq/eval_tasks/_base.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

copying auto_gptq/eval_tasks/text_summarization_task.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

copying auto_gptq/eval_tasks/sequence_classification_task.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

copying auto_gptq/eval_tasks/language_modeling_task.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

copying auto_gptq/eval_tasks/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks

creating build/lib.linux-x86_64-3.10/auto_gptq/quantization

copying auto_gptq/quantization/gptq.py -> build/lib.linux-x86_64-3.10/auto_gptq/quantization

copying auto_gptq/quantization/quantizer.py -> build/lib.linux-x86_64-3.10/auto_gptq/quantization

copying auto_gptq/quantization/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/quantization

creating build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/moss.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/auto.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/_base.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/gptj.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/_utils.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/gpt2.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/_const.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/opt.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/gpt_neox.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/bloom.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

copying auto_gptq/modeling/llama.py -> build/lib.linux-x86_64-3.10/auto_gptq/modeling

creating build/lib.linux-x86_64-3.10/auto_gptq/nn_modules/triton_utils

copying auto_gptq/nn_modules/triton_utils/custom_autotune.py -> build/lib.linux-x86_64-3.10/auto_gptq/nn_modules/triton_utils

copying auto_gptq/nn_modules/triton_utils/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/nn_modules/triton_utils

creating build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks/_utils

copying auto_gptq/eval_tasks/_utils/__init__.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks/_utils

copying auto_gptq/eval_tasks/_utils/generation_utils.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks/_utils

copying auto_gptq/eval_tasks/_utils/classification_utils.py -> build/lib.linux-x86_64-3.10/auto_gptq/eval_tasks/_utils

running build_ext

Traceback (most recent call last):

File "<string>", line 2, in <module>

File "<pip-setuptools-caller>", line 34, in <module>

File "/kaggle/working/AutoGPTQ/setup.py", line 49, in <module>

setup(

File "/opt/conda/lib/python3.10/site-packages/setuptools/__init__.py", line 153, in setup

return distutils.core.setup(**attrs)

File "/opt/conda/lib/python3.10/distutils/core.py", line 148, in setup

dist.run_commands()

File "/opt/conda/lib/python3.10/distutils/dist.py", line 966, in run_commands

self.run_command(cmd)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/site-packages/setuptools/command/install.py", line 68, in run

return orig.install.run(self)

File "/opt/conda/lib/python3.10/distutils/command/install.py", line 568, in run

self.run_command('build')

File "/opt/conda/lib/python3.10/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/distutils/command/build.py", line 135, in run

self.run_command(cmd_name)

File "/opt/conda/lib/python3.10/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/opt/conda/lib/python3.10/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/opt/conda/lib/python3.10/site-packages/setuptools/command/build_ext.py", line 79, in run

_build_ext.run(self)

File "/opt/conda/lib/python3.10/site-packages/Cython/Distutils/old_build_ext.py", line 186, in run

_build_ext.build_ext.run(self)

File "/opt/conda/lib/python3.10/distutils/command/build_ext.py", line 340, in run

self.build_extensions()

File "/opt/conda/lib/python3.10/site-packages/torch/utils/cpp_extension.py", line 499, in build_extensions

_check_cuda_version(compiler_name, compiler_version)

File "/opt/conda/lib/python3.10/site-packages/torch/utils/cpp_extension.py", line 387, in _check_cuda_version

raise RuntimeError(CUDA_MISMATCH_MESSAGE.format(cuda_str_version, torch.version.cuda))

RuntimeError:

The detected CUDA version (12.1) mismatches the version that was used to compile

PyTorch (11.3). Please make sure to use the same CUDA versions.

[end of output]

note: This error originates from a subprocess, and is likely not a problem with pip.

error: legacy-install-failure

× Encountered error while trying to install package.

╰─> auto-gptq

note: This is an issue with the package mentioned above, not pip.

hint: See above for output from the failure.

I tried that but still ran into the same issue.

from autogptq.

Are you sure you ran it exactly like I showed?

! BUILD_CUDA_EXT=0 pip install auto-gptq

This, split across two lines, is the wrong syntax:

BUILD_CUDA_EXT=0

!pip install auto-gptq

Please show the output of running:

! BUILD_CUDA_EXT=0 pip install -v auto-gptq
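
For anyone wondering why the two-line form fails: as far as I understand it, a bare `BUILD_CUDA_EXT=0` line in a notebook cell is just a Python assignment, and each `!` command runs in its own shell, so pip never sees the variable. Here is a small sketch that demonstrates the difference using only the standard library. The helper name `child_env_value` is made up for illustration.

```python
import os
import subprocess
import sys


def child_env_value(name, extra_env=None):
    """Return what a child process sees for environment variable `name`."""
    env = dict(os.environ)
    env.pop(name, None)          # start clean so the demo is repeatable
    env.update(extra_env or {})  # variables passed explicitly to the child
    probe = f"import os; print(os.environ.get({name!r}))"
    result = subprocess.run(
        [sys.executable, "-c", probe],
        env=env, capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


# A plain Python assignment does not reach child processes.
BUILD_CUDA_EXT = 0
print(child_env_value("BUILD_CUDA_EXT"))

# Passing the variable in the child's environment does.
print(child_env_value("BUILD_CUDA_EXT", {"BUILD_CUDA_EXT": "0"}))
```

The same idea applies to the install itself: the variable has to be part of the environment of the pip process, which is what putting it on the same line as `pip install` achieves.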

from autogptq.

Sure, I tried that and the install worked.

However, running the demo below fails with the error that follows:

from transformers import AutoTokenizer, TextGenerationPipeline
from auto_gptq import AutoGPTQForCausalLM, BaseQuantizeConfig

pretrained_model_dir = "facebook/opt-125m"
quantized_model_dir = "opt-125m-4bit"

tokenizer = AutoTokenizer.from_pretrained(pretrained_model_dir, use_fast=True)
examples = [
    tokenizer(
        "auto-gptq is an easy-to-use model quantization library with user-friendly apis, based on GPTQ algorithm."
    )
]

quantize_config = BaseQuantizeConfig(
    bits=4,  # quantize model to 4-bit
    group_size=128,  # it is recommended to set the value to 128
)

# load un-quantized model, the model will always be force loaded into cpu
model = AutoGPTQForCausalLM.from_pretrained(pretrained_model_dir, quantize_config)

# quantize model, the examples should be list of dict whose keys can only be "input_ids" and "attention_mask"
# with value under torch.LongTensor type.
model.quantize(examples, use_triton=False)

# save quantized model
model.save_quantized(quantized_model_dir)

# save quantized model using safetensors
model.save_quantized(quantized_model_dir, use_safetensors=True)

# load quantized model, currently only support cpu or single gpu
model = AutoGPTQForCausalLM.from_quantized(quantized_model_dir, device="cuda:0", use_triton=False)

# inference with model.generate
print(tokenizer.decode(model.generate(**tokenizer("auto_gptq is", return_tensors="pt").to("cuda:0"))[0]))

# or you can also use pipeline
pipeline = TextGenerationPipeline(model=model, tokenizer=tokenizer)
print(pipeline("auto-gptq is")[0]["generated_text"])

╭─────────────────────────────── Traceback (most recent call last) ────────────────────────────────╮

│ /tmp/ipykernel_32/3071398276.py:38 in <module> │

│ │

│ [Errno 2] No such file or directory: '/tmp/ipykernel_32/3071398276.py' │

│ │

│ /kaggle/working/AutoGPTQ/auto_gptq/modeling/_base.py:371 in generate │

│ │

│ 368 │ def generate(self, **kwargs): │

│ 369 │ │ """shortcut for model.generate""" │

│ 370 │ │ with torch.inference_mode(), torch.amp.autocast(device_type=self.device.type): │

│ ❱ 371 │ │ │ return self.model.generate(**kwargs) │

│ 372 │ │

│ 373 │ def prepare_inputs_for_generation(self, *args, **kwargs): │

│ 374 │ │ """shortcut for model.prepare_inputs_for_generation""" │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/utils/_contextlib.py:115 in decorate_context │

│ │

│ 112 │ @functools.wraps(func) │

│ 113 │ def decorate_context(*args, **kwargs): │

│ 114 │ │ with ctx_factory(): │

│ ❱ 115 │ │ │ return func(*args, **kwargs) │

│ 116 │ │

│ 117 │ return decorate_context │

│ 118 │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/generation/utils.py:1437 in generate │

│ │

│ 1434 │ │ │ │ ) │

│ 1435 │ │ │ │

│ 1436 │ │ │ # 11. run greedy search │

│ ❱ 1437 │ │ │ return self.greedy_search( │

│ 1438 │ │ │ │ input_ids, │

│ 1439 │ │ │ │ logits_processor=logits_processor, │

│ 1440 │ │ │ │ stopping_criteria=stopping_criteria, │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/generation/utils.py:2248 in greedy_search │

│ │

│ 2245 │ │ │ model_inputs = self.prepare_inputs_for_generation(input_ids, **model_kwargs) │

│ 2246 │ │ │ │

│ 2247 │ │ │ # forward pass to get next token │

│ ❱ 2248 │ │ │ outputs = self( │

│ 2249 │ │ │ │ **model_inputs, │

│ 2250 │ │ │ │ return_dict=True, │

│ 2251 │ │ │ │ output_attentions=output_attentions, │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py:1501 in _call_impl │

│ │

│ 1498 │ │ if not (self._backward_hooks or self._backward_pre_hooks or self._forward_hooks │

│ 1499 │ │ │ │ or _global_backward_pre_hooks or _global_backward_hooks │

│ 1500 │ │ │ │ or _global_forward_hooks or _global_forward_pre_hooks): │

│ ❱ 1501 │ │ │ return forward_call(*args, **kwargs) │

│ 1502 │ │ # Do not call functions when jit is used │

│ 1503 │ │ full_backward_hooks, non_full_backward_hooks = [], [] │

│ 1504 │ │ backward_pre_hooks = [] │

│ │

│ /opt/conda/lib/python3.10/site-packages/accelerate/hooks.py:165 in new_forward │

│ │

│ 162 │ │ │ with torch.no_grad(): │

│ 163 │ │ │ │ output = old_forward(*args, **kwargs) │

│ 164 │ │ else: │

│ ❱ 165 │ │ │ output = old_forward(*args, **kwargs) │

│ 166 │ │ return module._hf_hook.post_forward(module, output) │

│ 167 │ │

│ 168 │ module.forward = new_forward │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/opt/modeling_opt.py:938 in forward │

│ │

│ 935 │ │ return_dict = return_dict if return_dict is not None else self.config.use_return │

│ 936 │ │ │

│ 937 │ │ # decoder outputs consists of (dec_features, layer_state, dec_hidden, dec_attn) │

│ ❱ 938 │ │ outputs = self.model.decoder( │

│ 939 │ │ │ input_ids=input_ids, │

│ 940 │ │ │ attention_mask=attention_mask, │

│ 941 │ │ │ head_mask=head_mask, │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py:1501 in _call_impl │

│ │

│ 1498 │ │ if not (self._backward_hooks or self._backward_pre_hooks or self._forward_hooks │

│ 1499 │ │ │ │ or _global_backward_pre_hooks or _global_backward_hooks │

│ 1500 │ │ │ │ or _global_forward_hooks or _global_forward_pre_hooks): │

│ ❱ 1501 │ │ │ return forward_call(*args, **kwargs) │

│ 1502 │ │ # Do not call functions when jit is used │

│ 1503 │ │ full_backward_hooks, non_full_backward_hooks = [], [] │

│ 1504 │ │ backward_pre_hooks = [] │

│ │

│ /opt/conda/lib/python3.10/site-packages/accelerate/hooks.py:165 in new_forward │

│ │

│ 162 │ │ │ with torch.no_grad(): │

│ 163 │ │ │ │ output = old_forward(*args, **kwargs) │

│ 164 │ │ else: │

│ ❱ 165 │ │ │ output = old_forward(*args, **kwargs) │

│ 166 │ │ return module._hf_hook.post_forward(module, output) │

│ 167 │ │

│ 168 │ module.forward = new_forward │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/opt/modeling_opt.py:704 in forward │

│ │

│ 701 │ │ │ │ │ None, │

│ 702 │ │ │ │ ) │

│ 703 │ │ │ else: │

│ ❱ 704 │ │ │ │ layer_outputs = decoder_layer( │

│ 705 │ │ │ │ │ hidden_states, │

│ 706 │ │ │ │ │ attention_mask=causal_attention_mask, │

│ 707 │ │ │ │ │ layer_head_mask=(head_mask[idx] if head_mask is not None else None), │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py:1501 in _call_impl │

│ │

│ 1498 │ │ if not (self._backward_hooks or self._backward_pre_hooks or self._forward_hooks │

│ 1499 │ │ │ │ or _global_backward_pre_hooks or _global_backward_hooks │

│ 1500 │ │ │ │ or _global_forward_hooks or _global_forward_pre_hooks): │

│ ❱ 1501 │ │ │ return forward_call(*args, **kwargs) │

│ 1502 │ │ # Do not call functions when jit is used │

│ 1503 │ │ full_backward_hooks, non_full_backward_hooks = [], [] │

│ 1504 │ │ backward_pre_hooks = [] │

│ │

│ /opt/conda/lib/python3.10/site-packages/accelerate/hooks.py:165 in new_forward │

│ │

│ 162 │ │ │ with torch.no_grad(): │

│ 163 │ │ │ │ output = old_forward(*args, **kwargs) │

│ 164 │ │ else: │

│ ❱ 165 │ │ │ output = old_forward(*args, **kwargs) │

│ 166 │ │ return module._hf_hook.post_forward(module, output) │

│ 167 │ │

│ 168 │ module.forward = new_forward │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/opt/modeling_opt.py:329 in forward │

│ │

│ 326 │ │ │ hidden_states = self.self_attn_layer_norm(hidden_states) │

│ 327 │ │ │

│ 328 │ │ # Self Attention │

│ ❱ 329 │ │ hidden_states, self_attn_weights, present_key_value = self.self_attn( │

│ 330 │ │ │ hidden_states=hidden_states, │

│ 331 │ │ │ past_key_value=past_key_value, │

│ 332 │ │ │ attention_mask=attention_mask, │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py:1501 in _call_impl │

│ │

│ 1498 │ │ if not (self._backward_hooks or self._backward_pre_hooks or self._forward_hooks │

│ 1499 │ │ │ │ or _global_backward_pre_hooks or _global_backward_hooks │

│ 1500 │ │ │ │ or _global_forward_hooks or _global_forward_pre_hooks): │

│ ❱ 1501 │ │ │ return forward_call(*args, **kwargs) │

│ 1502 │ │ # Do not call functions when jit is used │

│ 1503 │ │ full_backward_hooks, non_full_backward_hooks = [], [] │

│ 1504 │ │ backward_pre_hooks = [] │

│ │

│ /opt/conda/lib/python3.10/site-packages/accelerate/hooks.py:165 in new_forward │

│ │

│ 162 │ │ │ with torch.no_grad(): │

│ 163 │ │ │ │ output = old_forward(*args, **kwargs) │

│ 164 │ │ else: │

│ ❱ 165 │ │ │ output = old_forward(*args, **kwargs) │

│ 166 │ │ return module._hf_hook.post_forward(module, output) │

│ 167 │ │

│ 168 │ module.forward = new_forward │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/opt/modeling_opt.py:174 in forward │

│ │

│ 171 │ │ bsz, tgt_len, _ = hidden_states.size() │

│ 172 │ │ │

│ 173 │ │ # get query proj │

│ ❱ 174 │ │ query_states = self.q_proj(hidden_states) * self.scaling │

│ 175 │ │ # get key, value proj │

│ 176 │ │ if is_cross_attention and past_key_value is not None: │

│ 177 │ │ │ # reuse k,v, cross_attentions │

│ │

│ /opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py:1501 in _call_impl │

│ │

│ 1498 │ │ if not (self._backward_hooks or self._backward_pre_hooks or self._forward_hooks │

│ 1499 │ │ │ │ or _global_backward_pre_hooks or _global_backward_hooks │

│ 1500 │ │ │ │ or _global_forward_hooks or _global_forward_pre_hooks): │

│ ❱ 1501 │ │ │ return forward_call(*args, **kwargs) │

│ 1502 │ │ # Do not call functions when jit is used │

│ 1503 │ │ full_backward_hooks, non_full_backward_hooks = [], [] │

│ 1504 │ │ backward_pre_hooks = [] │

│ │

│ /opt/conda/lib/python3.10/site-packages/accelerate/hooks.py:165 in new_forward │

│ │

│ 162 │ │ │ with torch.no_grad(): │

│ 163 │ │ │ │ output = old_forward(*args, **kwargs) │

│ 164 │ │ else: │

│ ❱ 165 │ │ │ output = old_forward(*args, **kwargs) │

│ 166 │ │ return module._hf_hook.post_forward(module, output) │

│ 167 │ │

│ 168 │ module.forward = new_forward │

│ │

│ /kaggle/working/AutoGPTQ/auto_gptq/nn_modules/qlinear.py:189 in forward │

│ │

│ 186 │ │ │ elif self.bits == 3: │

│ 187 │ │ │ │ quant_cuda.vecquant3matmul(x.float(), self.qweight, out, self.scales.flo │

│ 188 │ │ │ elif self.bits == 4: │

│ ❱ 189 │ │ │ │ quant_cuda.vecquant4matmul(x.float(), self.qweight, out, self.scales.flo │

│ 190 │ │ │ elif self.bits == 8: │

│ 191 │ │ │ │ quant_cuda.vecquant8matmul(x.float(), self.qweight, out, self.scales.flo │

│ 192 │ │ │ else: │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

AttributeError: module 'quant_cuda' has no attribute 'vecquant4matmul'

The bug seems to be referenced here

from autogptq.

I think the reason for the error is that you have another version of quant-cuda already installed, likely from GPTQ-for-LLaMa. You should first do: pip uninstall quant-cuda.
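
Before uninstalling, it can help to confirm which `quant_cuda` Python is actually picking up. The following is a rough sketch, not part of AutoGPTQ: the helper `inspect_extension` is hypothetical, and it only reports where a module comes from and which expected attributes are missing.

```python
import importlib
import importlib.util


def inspect_extension(module_name, required_attrs):
    """Report where a module is loaded from and which attributes it lacks."""
    spec = importlib.util.find_spec(module_name)
    if spec is None:
        return {"installed": False, "origin": None, "missing": list(required_attrs)}
    try:
        module = importlib.import_module(module_name)
    except ImportError as exc:
        # Compiled extensions can fail to import for unrelated reasons.
        return {"installed": True, "origin": spec.origin,
                "missing": list(required_attrs), "import_error": str(exc)}
    missing = [name for name in required_attrs if not hasattr(module, name)]
    return {"installed": True, "origin": spec.origin, "missing": missing}


# The attribute name is taken from the AttributeError above.
print(inspect_extension("quant_cuda", ["vecquant4matmul"]))
```

If `origin` points at a build left over from another project and `vecquant4matmul` shows up under `missing`, that would be consistent with the stale-install explanation.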

However, you can't use the CUDA version of AutoGPTQ anyway. You just told auto-gptq to install without CUDA (BUILD_CUDA_EXT=0), and you need to do that because your CUDA Toolkit version doesn't match the version used to compile PyTorch.

If you want to fix that, you could uninstall CUDA Toolkit 12.1 and install CUDA Toolkit 11.8 instead. Or you could build PyTorch from source so it can use CUDA Toolkit 12.1; there are no pre-built PyTorch binaries for CUDA 12.x yet. Instructions for doing that are on the PyTorch GitHub. It takes a while though, at least an hour to build.
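
To see the mismatch for yourself before changing anything, here is a minimal sketch that parses the toolkit version out of `nvcc --version` and compares it with the version PyTorch reports in `torch.version.cuda` (the attribute named in the traceback). The helper names are made up, and comparing only major versions is an assumption about how strict the build check is.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(nvcc_output):
    """Extract (major, minor) from `nvcc --version` text, or None if absent."""
    match = re.search(r"release (\d+)\.(\d+)", nvcc_output)
    return (int(match.group(1)), int(match.group(2))) if match else None


def parse_version(version):
    """Turn a string such as '11.3' into (11, 3)."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def majors_match(toolkit, torch_cuda):
    """True when both versions share the same major number."""
    return toolkit[0] == torch_cuda[0]


def detect_toolkit():
    """Return the local toolkit version, or None when nvcc is not on PATH."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    return parse_nvcc_release(result.stdout)


# Versions taken from the error message earlier in this thread.
print(majors_match((12, 1), parse_version("11.3")))  # False
```

On a real machine you would pass `detect_toolkit()` and `parse_version(torch.version.cuda)` instead of the hard-coded values.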

Or forget about CUDA and use Triton instead:

Here is a simple example that uses Triton to load this GPTQ model: https://huggingface.co/TheBloke/stable-vicuna-13B-GPTQ

from transformers import AutoTokenizer, pipeline, logging
from auto_gptq import AutoGPTQForCausalLM, BaseQuantizeConfig

quantized_model_dir = "/path/to/stable-vicuna-13B-GPTQ"

tokenizer = AutoTokenizer.from_pretrained(quantized_model_dir, use_fast=True)

def get_config(has_desc_act):
    return BaseQuantizeConfig(
        bits=4,  # quantize model to 4-bit
        group_size=128,  # it is recommended to set the value to 128
        desc_act=has_desc_act
    )

def get_model(model_base, triton, model_has_desc_act):
    return AutoGPTQForCausalLM.from_quantized(
        quantized_model_dir,
        use_safetensors=True,
        model_basename=model_base,
        device="cuda:0",
        use_triton=triton,
        quantize_config=get_config(model_has_desc_act)
    )

# Prevent printing spurious transformers error
logging.set_verbosity(logging.CRITICAL)

prompt='''### Human: Write a story about llamas
### Assistant:'''

model = get_model("stable-vicuna-13B-GPTQ-4bit.compat.no-act-order", triton=True, model_has_desc_act=False)

pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_length=512,
    temperature=0.7,
    top_p=0.95,
    repetition_penalty=1.15
)

print("### Inference:")
print(pipe(prompt)[0]['generated_text'])

from autogptq.

It seems the repo path is wrong:

╭─────────────────────────────── Traceback (most recent call last) ────────────────────────────────╮

│ /tmp/ipykernel_32/3845330370.py:6 in <module> │

│ │

│ [Errno 2] No such file or directory: '/tmp/ipykernel_32/3845330370.py' │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/auto/tokenization_auto.py:642 in │

│ from_pretrained │

│ │

│ 639 │ │ │ return tokenizer_class.from_pretrained(pretrained_model_name_or_path, *input │

│ 640 │ │ │

│ 641 │ │ # Next, let's try to use the tokenizer_config file to get the tokenizer class. │

│ ❱ 642 │ │ tokenizer_config = get_tokenizer_config(pretrained_model_name_or_path, **kwargs) │

│ 643 │ │ if "_commit_hash" in tokenizer_config: │

│ 644 │ │ │ kwargs["_commit_hash"] = tokenizer_config["_commit_hash"] │

│ 645 │ │ config_tokenizer_class = tokenizer_config.get("tokenizer_class") │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/models/auto/tokenization_auto.py:486 in │

│ get_tokenizer_config │

│ │

│ 483 │ tokenizer_config = get_tokenizer_config("tokenizer-test") │

│ 484 │ ```""" │

│ 485 │ commit_hash = kwargs.get("_commit_hash", None) │

│ ❱ 486 │ resolved_config_file = cached_file( │

│ 487 │ │ pretrained_model_name_or_path, │

│ 488 │ │ TOKENIZER_CONFIG_FILE, │

│ 489 │ │ cache_dir=cache_dir, │

│ │

│ /opt/conda/lib/python3.10/site-packages/transformers/utils/hub.py:409 in cached_file │

│ │

│ 406 │ user_agent = http_user_agent(user_agent) │

│ 407 │ try: │

│ 408 │ │ # Load from URL or cache if already cached │

│ ❱ 409 │ │ resolved_file = hf_hub_download( │

│ 410 │ │ │ path_or_repo_id, │

│ 411 │ │ │ filename, │

│ 412 │ │ │ subfolder=None if len(subfolder) == 0 else subfolder, │

│ │

│ /opt/conda/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py:112 in _inner_fn │

│ │

│ 109 │ │ │ kwargs.items(), # Kwargs values │

│ 110 │ │ ): │

│ 111 │ │ │ if arg_name in ["repo_id", "from_id", "to_id"]: │

│ ❱ 112 │ │ │ │ validate_repo_id(arg_value) │

│ 113 │ │ │ │

│ 114 │ │ │ elif arg_name == "token" and arg_value is not None: │

│ 115 │ │ │ │ has_token = True │

│ │

│ /opt/conda/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py:160 in │

│ validate_repo_id │

│ │

│ 157 │ │ raise HFValidationError(f"Repo id must be a string, not {type(repo_id)}: '{repo_ │

│ 158 │ │

│ 159 │ if repo_id.count("/") > 1: │

│ ❱ 160 │ │ raise HFValidationError( │

│ 161 │ │ │ "Repo id must be in the form 'repo_name' or 'namespace/repo_name':" │

│ 162 │ │ │ f" '{repo_id}'. Use `repo_type` argument if needed." │

│ 163 │ │ ) │

╰──────────────────────────────────────────────────────────────────────────────────────────────────╯

HFValidationError: Repo id must be in the form 'repo_name' or 'namespace/repo_name':

'/path/to/stable-vicuna-13B-GPTQ'. Use `repo_type` argument if needed.

from autogptq.

My example code assumes the model is downloaded locally. Download the model then update quantized_model_dir to point to the folder where you downloaded it.

AutoGPTQ does not yet support loading a model directly from Hugging Face.
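The HFValidationError in the traceback above arises because the placeholder path does not exist on disk, so transformers falls back to treating the string as a Hub repo id, and a repo id may contain at most one "/". A minimal sketch of checking the folder before loading anything; `resolve_local_model_dir` is a hypothetical helper written for illustration, not part of AutoGPTQ or transformers:

```python
from pathlib import Path


def resolve_local_model_dir(path_str: str) -> str:
    """Return the absolute path of an existing model folder, or raise a clear error."""
    path = Path(path_str).expanduser().resolve()
    if not path.is_dir():
        # Failing here is easier to read than the repo-id validation error
        # raised later when the string is mistaken for a Hub repo id.
        raise FileNotFoundError(
            f"{path} is not a local folder; download the model first, "
            "then point quantized_model_dir at the download location."
        )
    return str(path)
```

Passing the result of this check as quantized_model_dir should make a wrong or placeholder path fail early with an obvious message.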

from autogptq.

@TheBloke Is there an open PR to do this?

from autogptq.

No, no-one is looking at it yet to my knowledge. Remember that AutoGPTQ is still new and under active development. Such improvements will come over time.

It's easy to download the model first. If you want a quick way to do that, clone text-generation-webui and call its download-model.py:

root@bce51ed9603e:/workspace# python /root/text-generation-webui/download-model.py -h

usage: download-model.py [-h] [--branch BRANCH] [--threads THREADS] [--text-only] [--output OUTPUT] [--clean] [--check] [MODEL]

positional arguments:

MODEL

options:

-h, --help show this help message and exit

--branch BRANCH Name of the Git branch to download from.

--threads THREADS Number of files to download simultaneously.

--text-only Only download text files (txt/json).

--output OUTPUT The folder where the model should be saved.

--clean Does not resume the previous download.

--check Validates the checksums of model files.

root@bce51ed9603e:/workspace# python /root/text-generation-webui/download-model.py TheBloke/stable-vicuna-13B-GPTQ

Downloading the model to models/TheBloke_stable-vicuna-13B-GPTQ

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 13.2k /13.2k 11.9MiB/s

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 21.0 /21.0 22.7kiB/s

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 587 /587 649kiB/s

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 137 /137 157kiB/s

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 81.0 /81.0 93.1kiB/s

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 96.0 /96.0 107kiB/s

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 7.26G /7.26G 36.8MiB/s

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1.84M /1.84M 3.27MiB/s

100%|███████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 500k /500k 1.97MiB/s

100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 715 /715 808kiB/s

root@bce51ed9603e:/workspace# ll models/TheBloke_stable-vicuna-13B-GPTQ/

total 7093305

drwxrwxrwx 2 root root 3000675 May 4 08:30 ./

drwxrwxrwx 7 root root 3002107 May 4 08:27 ../

-rw-rw-rw- 1 root root 13247 May 4 08:27 README.md

-rw-rw-rw- 1 root root 21 May 4 08:27 added_tokens.json

-rw-rw-rw- 1 root root 587 May 4 08:27 config.json

-rw-rw-rw- 1 root root 137 May 4 08:27 generation_config.json

-rw-rw-rw- 1 root root 333 May 4 08:27 huggingface-metadata.txt

-rw-rw-rw- 1 root root 81 May 4 08:27 quantize_config.json

-rw-rw-rw- 1 root root 96 May 4 08:27 special_tokens_map.json

-rw-rw-rw- 1 root root 7255179696 May 4 08:30 stable-vicuna-13B-GPTQ-4bit.compat.no-act-order.safetensors

-rw-rw-rw- 1 root root 1842847 May 4 08:30 tokenizer.json

-rw-rw-rw- 1 root root 499723 May 4 08:30 tokenizer.model

-rw-rw-rw- 1 root root 715 May 4 08:30 tokenizer_config.json

root@bce51ed9603e:/workspace#
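If cloning text-generation-webui is not convenient, a similar download can probably be done with the huggingface_hub library directly. The sketch below is an assumption-laden alternative, not what download-model.py does internally: `local_folder_for` and `download_model` are hypothetical helper names, the folder naming simply mirrors the console output above, and the `local_dir` argument of `snapshot_download` needs a reasonably recent huggingface_hub release.

```python
from pathlib import Path


def local_folder_for(repo_id: str, base: str = "models") -> Path:
    """Mirror the folder naming seen above: 'user/name' becomes 'models/user_name'."""
    return Path(base) / repo_id.replace("/", "_")


def download_model(repo_id: str, base: str = "models") -> str:
    """Download every file of a Hub repo into a local folder and return its path."""
    # Imported lazily so the naming helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    target = local_folder_for(repo_id, base)
    # Assumption: the installed huggingface_hub supports the local_dir argument.
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return str(target.resolve())
```

Calling `download_model("TheBloke/stable-vicuna-13B-GPTQ")` from /workspace would be expected to fill the same models/TheBloke_stable-vicuna-13B-GPTQ folder, although without the huggingface-metadata.txt file that download-model.py writes.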

In this example, using my example code you would then set

quantized_model_dir = "/workspace/models/TheBloke_stable-vicuna-13B-GPTQ"

from autogptq.

@TheBloke Sure, will try that. Loading TheBloke/vicuna-13B-1.1-GPTQ-4bit-128g from Hugging Face takes very long on a Kaggle CPU. Is there any way around that?

from autogptq.

I don't think so, no. Are you trying to use the CPU only, or do you have a GPU for inference?

from autogptq.

@TheBloke I am trying with CPU only, but even with GPU only it does not work. I think it requires 28GB of GPU RAM to load?

from autogptq.

CPU only will definitely be terribly slow.

For a 13B 4bit model like stable-vicuna-13B you need around 9GB VRAM. Nothing like 28GB - that would be for an unquantised fp16 model.
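A back-of-the-envelope check of those numbers, counting the weights only. This is a rough sketch that assumes exactly 13e9 parameters and ignores quantisation scales, activations, the KV cache and CUDA context, which is why real usage sits above the 4-bit figure:

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


n_params = 13e9  # assumed round figure for a "13B" model
for label, bits in [("fp16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(n_params, bits):.1f} GB")
```

This prints 26.0 GB for fp16, 13.0 GB for 8-bit and 6.5 GB for 4-bit. The fp16 figure is close to the 28GB guess, and the 4-bit figure plus runtime overhead is consistent with needing around 9GB.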

from autogptq.

nvidia-smi stats while running the example code I showed before on stable-vicuna-13B:

timestamp, name, utilization.gpu [%], utilization.memory [%], memory.total [MiB], memory.free [MiB], memory.used [MiB]

2023/05/04 08:41:04.156, NVIDIA RTX A4500, 20 %, 7 %, 20470 MiB, 11292 MiB, 8894 MiB

Last column is used VRAM

from autogptq.

I have 14GB of VRAM, but it is still not able to run.

from autogptq.

Please show the full output of running the script

from autogptq.

After running the model I got this:

/opt/conda/lib/python3.10/site-packages/scipy/__init__.py:146: UserWarning: A NumPy version >=1.16.5 and <1.23.0 is required for this version of SciPy (detected version 1.23.5

warnings.warn(f"A NumPy version >={np_minversion} and <{np_maxversion}"

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

Cell In[1], line 5

1 from transformers import AutoTokenizer, AutoModelForCausalLM

3 tokenizer = AutoTokenizer.from_pretrained("TheBloke/vicuna-13B-1.1-GPTQ-4bit-128g")

----> 5 model = AutoModelForCausalLM.from_pretrained("TheBloke/vicuna-13B-1.1-GPTQ-4bit-128g")

File /opt/conda/lib/python3.10/site-packages/transformers/models/auto/auto_factory.py:471, in _BaseAutoModelClass.from_pretrained(cls, pretrained_model_name_or_path, *model_args, **kwargs)

469 elif type(config) in cls._model_mapping.keys():

470 model_class = _get_model_class(config, cls._model_mapping)

--> 471 return model_class.from_pretrained(

472 pretrained_model_name_or_path, *model_args, config=config, **hub_kwargs, **kwargs

473 )

474 raise ValueError(

475 f"Unrecognized configuration class {config.__class__} for this kind of AutoModel: {cls.__name__}.\n"

476 f"Model type should be one of {', '.join(c.__name__ for c in cls._model_mapping.keys())}."

477 )

File /opt/conda/lib/python3.10/site-packages/transformers/modeling_utils.py:2511, in PreTrainedModel.from_pretrained(cls, pretrained_model_name_or_path, *model_args, **kwargs)

2505 raise EnvironmentError(

2506 f"{pretrained_model_name_or_path} does not appear to have a file named"

2507 f" {_add_variant(WEIGHTS_NAME, variant)} but there is a file without the variant"

2508 f" {variant}. Use `variant=None` to load this model from those weights."

2509 )

2510 else:

-> 2511 raise EnvironmentError(

2512 f"{pretrained_model_name_or_path} does not appear to have a file named"

2513 f" {_add_variant(WEIGHTS_NAME, variant)}, {TF2_WEIGHTS_NAME}, {TF_WEIGHTS_NAME} or"

2514 f" {FLAX_WEIGHTS_NAME}."

2515 )

2516 except EnvironmentError:

2517 # Raise any environment error raise by `cached_file`. It will have a helpful error message adapted

2518 # to the original exception.

2519 raise

OSError: TheBloke/vicuna-13B-1.1-GPTQ-4bit-128g does not appear to have a file named pytorch_model.bin, tf_model.h5, model.ckpt or flax_model.msgpack.

from autogptq.

This is wrong:

model = AutoModelForCausalLM.from_pretrained("TheBloke/vicuna-13B-1.1-GPTQ-4bit-128g")

It should be AutoGPTQForCausalLM, and needs the other arguments I showed in my example code.

Please use the example code I provided as a base.
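For readers who do not have that example code to hand, the following is a rough sketch of what such a call might look like. It assumes the model folder has already been downloaded locally, that it contains exactly one .safetensors file, and that the installed AutoGPTQ version accepts the `model_basename`, `use_safetensors` and `device` arguments of `from_quantized`. `find_model_basename` and `load_quantized` are hypothetical helper names.

```python
from pathlib import Path


def find_model_basename(model_dir: str) -> str:
    """Return the weights file name without its .safetensors extension."""
    files = sorted(Path(model_dir).glob("*.safetensors"))
    if len(files) != 1:
        raise ValueError(f"expected exactly one .safetensors file, found {len(files)}")
    return files[0].name[: -len(".safetensors")]


def load_quantized(model_dir: str, device: str = "cuda:0"):
    """Load tokenizer and GPTQ model from a local folder (needs a GPU and auto-gptq)."""
    # Lazy imports so the helper above can be used without these packages.
    from auto_gptq import AutoGPTQForCausalLM
    from transformers import AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_dir, use_fast=True)
    # Assumption: these keyword arguments match the installed AutoGPTQ version.
    model = AutoGPTQForCausalLM.from_quantized(
        model_dir,
        model_basename=find_model_basename(model_dir),
        use_safetensors=True,
        device=device,
    )
    return tokenizer, model
```

The key difference from the failing cell is that the quantised weights are loaded through AutoGPTQForCausalLM from a local folder rather than through AutoModelForCausalLM from a Hub repo id.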

from autogptq.

@TheBloke I had taken it from the Hugging Face model card. Do all your models require AutoGPTQ, or do some of them support Hugging Face transformers?

from autogptq.

@TheBloke I had taken it from the huggingface model card. Do all your models require AutoGPTQ or you have support for huggingface transformers ?

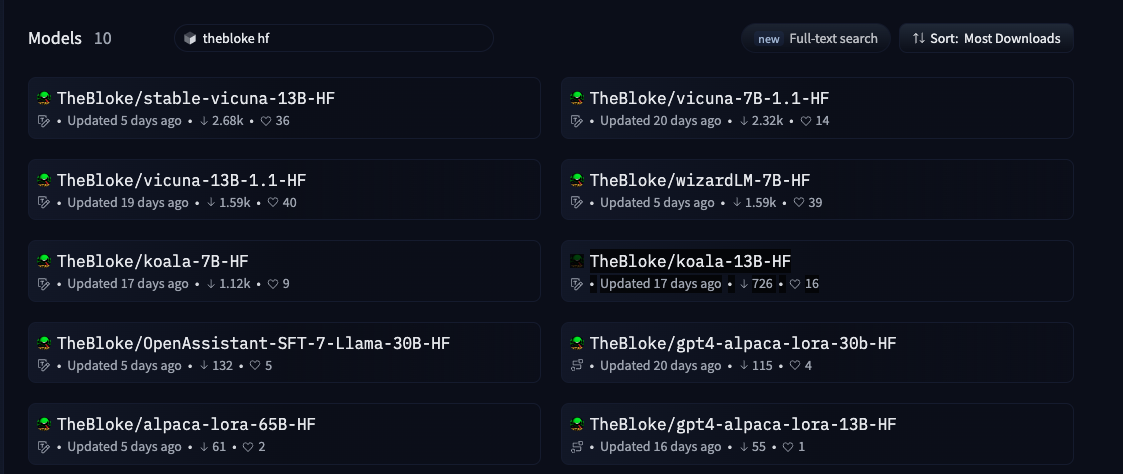

I have many models that support unquantised transformers inference. But they will need a lot more VRAM. I don't think you can load any of them in fp16 in 16GB, but you could load them in 8bit.

Here are my HF models: https://huggingface.co/models?search=thebloke%20hf

A 7B fp16 model may load in 16GB VRAM. A 13B fp16 definitely will not.

But either way I recommend you add load_in_8bit=True to the model = AutoModelForCausalLM.from_pretrained() call, and then it will require roughly half as much VRAM. This requires the bitsandbytes library: pip install bitsandbytes (you may have it installed already).
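A minimal sketch of that 8-bit load for an unquantised HF model. It assumes transformers, accelerate and bitsandbytes are installed and a CUDA GPU is available; `load_hf_model_8bit` is a hypothetical helper name, and newer transformers releases may prefer passing a quantization config object instead of the `load_in_8bit` flag.

```python
# Keyword arguments for 8-bit loading; device_map="auto" lets accelerate
# place the layers on the available GPU(s).
EIGHT_BIT_KWARGS = {"load_in_8bit": True, "device_map": "auto"}


def load_hf_model_8bit(repo_id: str):
    """Load an unquantised HF model in 8-bit via bitsandbytes."""
    # Lazy import so this file can be read or imported without transformers.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(repo_id)
    model = AutoModelForCausalLM.from_pretrained(repo_id, **EIGHT_BIT_KWARGS)
    return tokenizer, model
```

This path applies only to the unquantised HF repos; the GPTQ repos still need AutoGPTQ.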

If you are loading unquantised HF models then that is not relevant to AutoGPTQ. For further support with that, please open a Discussion on the HF model page for whichever of my models you try to use.

from autogptq.
